-

使用canal和rocketmq同步mysql数据到elasticsearch中【canal,rocketmq,elasticsearch】

在使用es过程中,我们需要将我们数据库中的数据与es进行同步

本文主要使用canal和rocketmq实现mysql和es的数据同步功能现有流程为,canal-deployer监控mysql数据,并将数据同步到rocketmq中。canal-adapter监控rocketmq队列中的数据,并将获取到的数据同步到es中

本文使用的的canal1.1.5版本

一、canal介绍及安装

1、canal主要包含:

- canal-deployer:主要用来监控mysql数据变化

- canal-adapter:主要用来将监控的数据同步到其他应用

- canal-admin:相当于操作控制台,可安可不安

2、canal安装教程

- canal安装教程现有文章很全面,直接点击链接根据步骤操作即可

二、rocketmq安装

三、配置canal-deployer

- 注意,mysql的日志模式要设置为row

- 如果想要java获取canal-deployer监控的数据,可以查看此链接教程

第一步:deployer基础配置

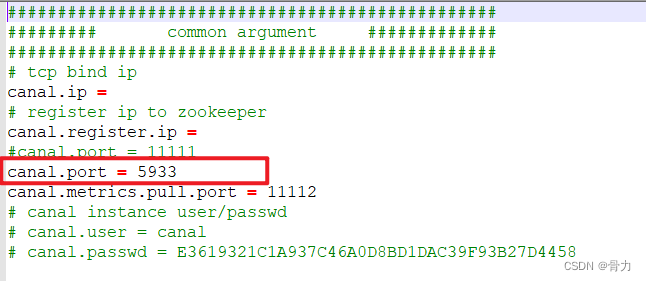

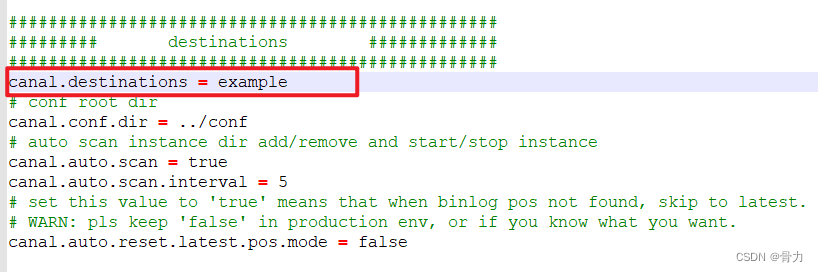

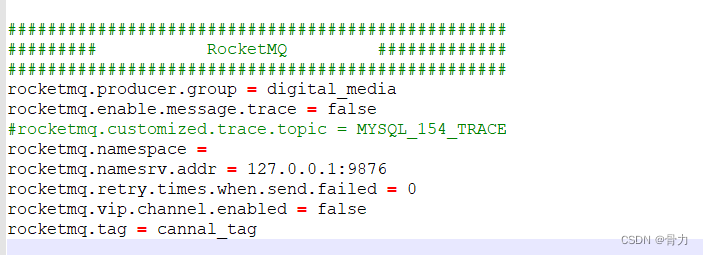

配置canal-deployer -> conf -> canal.properties配置文件内容

配置端口

这里是配置使用哪个目录下面的配置内容,默认example即可

要注意下面配置的几个内容,在canal-adapter中也要配置成一样的

如果使用的是kafka、rabbitmq,可在对应的板块进行设置

配置完成后,进入/bin/目录,执行startup.sh即可启动canal-deployer

另附上完整配置文件内容################################################# ######### common argument ############# ################################################# # tcp bind ip canal.ip = # register ip to zookeeper canal.register.ip = #canal.port = 11111 canal.port = 5933 canal.metrics.pull.port = 11112 # canal instance user/passwd # canal.user = canal # canal.passwd = E3619321C1A937C46A0D8BD1DAC39F93B27D4458 # canal admin config #canal.admin.manager = 127.0.0.1:8089 canal.admin.port = 11110 canal.admin.user = admin canal.admin.passwd = 4ACFE3202A5FF5CF467898FC58AAB1D615029441 # admin auto register #canal.admin.register.auto = true #canal.admin.register.cluster = #canal.admin.register.name = canal.zkServers = # flush data to zk canal.zookeeper.flush.period = 1000 canal.withoutNetty = false # tcp, kafka, rocketMQ, rabbitMQ, pulsarMQ canal.serverMode = rocketMQ # flush meta cursor/parse position to file canal.file.data.dir = ${canal.conf.dir} canal.file.flush.period = 1000 ## memory store RingBuffer size, should be Math.pow(2,n) canal.instance.memory.buffer.size = 16384 ## memory store RingBuffer used memory unit size , default 1kb canal.instance.memory.buffer.memunit = 1024 ## meory store gets mode used MEMSIZE or ITEMSIZE canal.instance.memory.batch.mode = MEMSIZE canal.instance.memory.rawEntry = true ## detecing config canal.instance.detecting.enable = false #canal.instance.detecting.sql = insert into retl.xdual values(1,now()) on duplicate key update x=now() canal.instance.detecting.sql = select 1 canal.instance.detecting.interval.time = 3 canal.instance.detecting.retry.threshold = 3 canal.instance.detecting.heartbeatHaEnable = false # support maximum transaction size, more than the size of the transaction will be cut into multiple transactions delivery canal.instance.transaction.size = 1024 # mysql fallback connected to new master should fallback times canal.instance.fallbackIntervalInSeconds = 60 # network config canal.instance.network.receiveBufferSize = 16384 canal.instance.network.sendBufferSize = 16384 canal.instance.network.soTimeout = 30 # binlog filter config canal.instance.filter.druid.ddl = true canal.instance.filter.query.dcl = false canal.instance.filter.query.dml = false canal.instance.filter.query.ddl = false canal.instance.filter.table.error = false canal.instance.filter.rows = false canal.instance.filter.transaction.entry = false canal.instance.filter.dml.insert = false canal.instance.filter.dml.update = false canal.instance.filter.dml.delete = false # binlog format/image check canal.instance.binlog.format = ROW,STATEMENT,MIXED canal.instance.binlog.image = FULL,MINIMAL,NOBLOB # binlog ddl isolation canal.instance.get.ddl.isolation = false # parallel parser config canal.instance.parser.parallel = true ## concurrent thread number, default 60% available processors, suggest not to exceed Runtime.getRuntime().availableProcessors() #canal.instance.parser.parallelThreadSize = 16 ## disruptor ringbuffer size, must be power of 2 canal.instance.parser.parallelBufferSize = 256 # table meta tsdb info canal.instance.tsdb.enable = true canal.instance.tsdb.dir = ${canal.file.data.dir:../conf}/${canal.instance.destination:} canal.instance.tsdb.url = jdbc:h2:${canal.instance.tsdb.dir}/h2;CACHE_SIZE=1000;MODE=MYSQL; canal.instance.tsdb.dbUsername = canal canal.instance.tsdb.dbPassword = canal # dump snapshot interval, default 24 hour canal.instance.tsdb.snapshot.interval = 24 # purge snapshot expire , default 360 hour(15 days) canal.instance.tsdb.snapshot.expire = 360 ################################################# ######### destinations ############# ################################################# canal.destinations = example # conf root dir canal.conf.dir = ../conf # auto scan instance dir add/remove and start/stop instance canal.auto.scan = true canal.auto.scan.interval = 5 # set this value to 'true' means that when binlog pos not found, skip to latest. # WARN: pls keep 'false' in production env, or if you know what you want. canal.auto.reset.latest.pos.mode = false canal.instance.tsdb.spring.xml = classpath:spring/tsdb/h2-tsdb.xml #canal.instance.tsdb.spring.xml = classpath:spring/tsdb/mysql-tsdb.xml canal.instance.global.mode = spring canal.instance.global.lazy = false canal.instance.global.manager.address = ${canal.admin.manager} #canal.instance.global.spring.xml = classpath:spring/memory-instance.xml canal.instance.global.spring.xml = classpath:spring/file-instance.xml #canal.instance.global.spring.xml = classpath:spring/default-instance.xml ################################################## ######### MQ Properties ############# ################################################## # aliyun ak/sk , support rds/mq canal.aliyun.accessKey = canal.aliyun.secretKey = canal.aliyun.uid= canal.mq.flatMessage = true canal.mq.canalBatchSize = 50 canal.mq.canalGetTimeout = 100 # Set this value to "cloud", if you want open message trace feature in aliyun. canal.mq.accessChannel = local canal.mq.database.hash = true canal.mq.send.thread.size = 30 canal.mq.build.thread.size = 8 ################################################## ######### Kafka ############# ################################################## kafka.bootstrap.servers = 127.0.0.1:9092 kafka.acks = all kafka.compression.type = none kafka.batch.size = 16384 kafka.linger.ms = 1 kafka.max.request.size = 1048576 kafka.buffer.memory = 33554432 kafka.max.in.flight.requests.per.connection = 1 kafka.retries = 0 kafka.kerberos.enable = false kafka.kerberos.krb5.file = "../conf/kerberos/krb5.conf" kafka.kerberos.jaas.file = "../conf/kerberos/jaas.conf" ################################################## ######### RocketMQ ############# ################################################## rocketmq.producer.group = digital_media rocketmq.enable.message.trace = false #rocketmq.customized.trace.topic = MYSQL_154_TRACE rocketmq.namespace = rocketmq.namesrv.addr = 127.0.0.1:9876 rocketmq.retry.times.when.send.failed = 0 rocketmq.vip.channel.enabled = false rocketmq.tag = cannal_tag ################################################## ######### RabbitMQ ############# ################################################## rabbitmq.host = rabbitmq.virtual.host = rabbitmq.exchange = rabbitmq.username = rabbitmq.password = rabbitmq.deliveryMode = ################################################## ######### Pulsar ############# ################################################## pulsarmq.serverUrl = pulsarmq.roleToken = pulsarmq.topicTenantPrefix =- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

- 98

- 99

- 100

- 101

- 102

- 103

- 104

- 105

- 106

- 107

- 108

- 109

- 110

- 111

- 112

- 113

- 114

- 115

- 116

- 117

- 118

- 119

- 120

- 121

- 122

- 123

- 124

- 125

- 126

- 127

- 128

- 129

- 130

- 131

- 132

- 133

- 134

- 135

- 136

- 137

- 138

- 139

- 140

- 141

- 142

- 143

- 144

- 145

- 146

- 147

- 148

- 149

- 150

- 151

- 152

- 153

- 154

- 155

- 156

- 157

- 158

- 159

- 160

- 161

- 162

- 163

- 164

- 165

- 166

- 167

- 168

- 169

- 170

- 171

- 172

- 173

- 174

- 175

- 176

- 177

- 178

- 179

- 180

- 181

- 182

- 183

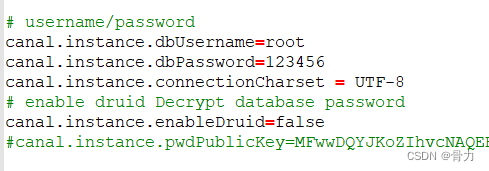

第二步:deployer连接配置

配置canal-deployer/conf/example/instance.properties配置文件内容

配置监控的数据库地址

配置访问数据库用户名密码

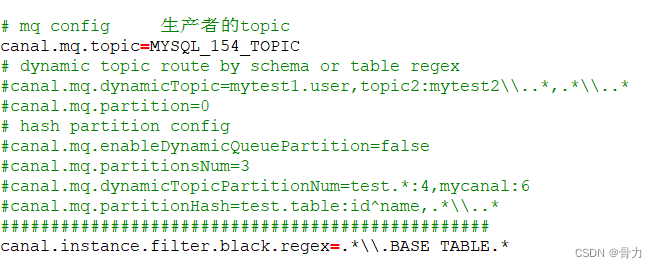

配置传输到rocketmq的topic的内容

另附上完整配置文件内容################################################# ## mysql serverId , v1.0.26+ will autoGen # canal.instance.mysql.slaveId=0 canal.instance.mysql.slaveId=1234 # enable gtid use true/false canal.instance.gtidon=false # position info canal.instance.master.address=192.168.0.154:3306 canal.instance.master.journal.name= canal.instance.master.position= canal.instance.master.timestamp= canal.instance.master.gtid= # rds oss binlog canal.instance.rds.accesskey= canal.instance.rds.secretkey= canal.instance.rds.instanceId= # table meta tsdb info canal.instance.tsdb.enable=true #canal.instance.tsdb.url=jdbc:mysql://127.0.0.1:3306/canal_tsdb #canal.instance.tsdb.dbUsername=canal #canal.instance.tsdb.dbPassword=canal #canal.instance.standby.address = #canal.instance.standby.journal.name = #canal.instance.standby.position = #canal.instance.standby.timestamp = #canal.instance.standby.gtid= # username/password canal.instance.dbUsername=root canal.instance.dbPassword=123456 canal.instance.connectionCharset = UTF-8 # enable druid Decrypt database password canal.instance.enableDruid=false #canal.instance.pwdPublicKey=MFwwDQYJKoZIhvcNAQEBBQADSwAwSAJBALK4BUxdDltRRE5/zXpVEVPUgunvscYFtEip3pmLlhrWpacX7y7GCMo2/JM6LeHmiiNdH1FWgGCpUfircSwlWKUCAwEAAQ== # table regex canal.instance.filter.regex=.*\\..* # table black regex canal.instance.filter.black.regex=mysql\\.slave_.* # table field filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2) #canal.instance.filter.field=test1.t_product:id/subject/keywords,test2.t_company:id/name/contact/ch # table field black filter(format: schema1.tableName1:field1/field2,schema2.tableName2:field1/field2) #canal.instance.filter.black.field=test1.t_product:subject/product_image,test2.t_company:id/name/contact/ch # mq config 生产者的topic canal.mq.topic=MYSQL_154_TOPIC # dynamic topic route by schema or table regex #canal.mq.dynamicTopic=mytest1.user,topic2:mytest2\\..*,.*\\..* #canal.mq.partition=0 # hash partition config #canal.mq.enableDynamicQueuePartition=false #canal.mq.partitionsNum=3 #canal.mq.dynamicTopicPartitionNum=test.*:4,mycanal:6 #canal.mq.partitionHash=test.table:id^name,.*\\..* ################################################# canal.instance.filter.black.regex=.*\\.BASE TABLE.*- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

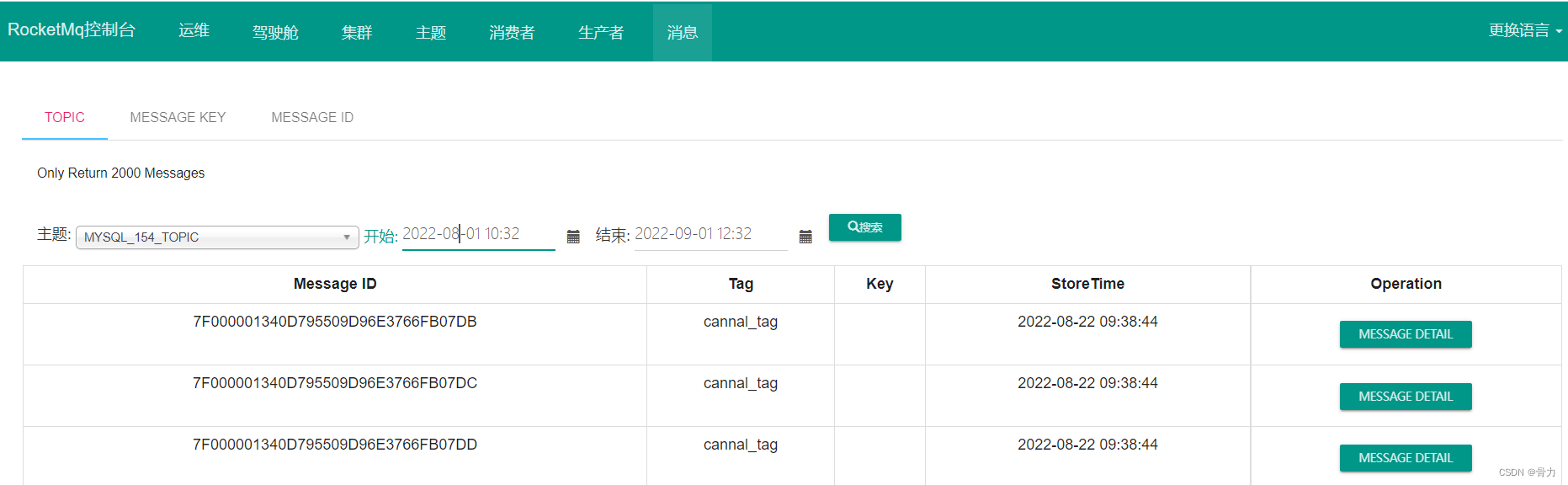

第三步:rockmq消息

查看rocketmq中是否有记录

消息中筛选此主题内容,在mysql中添加数据,这里会自动有一条数据出现即表示成功

第四步:adapter配置

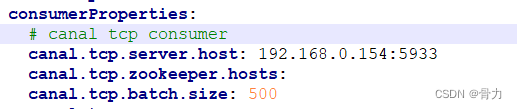

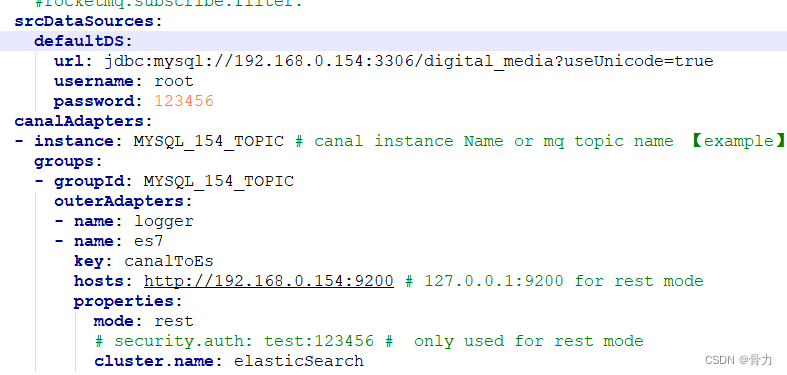

配置canal-adapter/conf/application.yml

配置adapter本身的地址

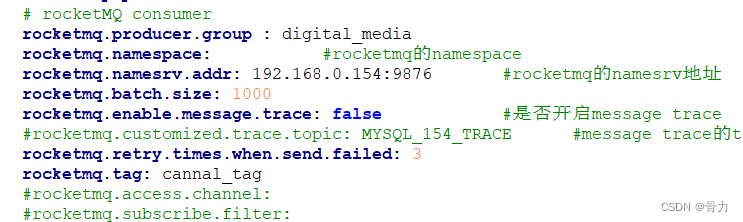

配置与rocketmq有关的相关配置,主要要和deployer中配置的对应

配置详情对接信息,包括rocketmq的topic,以及详情文件夹名es7

另附上详情配置内容

server: port: 5934 spring: jackson: date-format: yyyy-MM-dd HH:mm:ss time-zone: GMT+8 default-property-inclusion: non_null canal.conf: mode: rocketMQ #tcp kafka rocketMQ rabbitMQ flatMessage: true zookeeperHosts: syncBatchSize: 1000 retries: 0 timeout: accessKey: secretKey: consumerProperties: # canal tcp consumer canal.tcp.server.host: 192.168.0.154:5933 canal.tcp.zookeeper.hosts: canal.tcp.batch.size: 500 canal.tcp.username: canal.tcp.password: # rocketMQ consumer rocketmq.producer.group : digital_media rocketmq.namespace: #rocketmq的namespace rocketmq.namesrv.addr: 192.168.0.154:9876 #rocketmq的namesrv地址 rocketmq.batch.size: 1000 rocketmq.enable.message.trace: false #是否开启message trace #rocketmq.customized.trace.topic: MYSQL_154_TRACE #message trace的topic rocketmq.retry.times.when.send.failed: 3 rocketmq.tag: cannal_tag #rocketmq.access.channel: #rocketmq.subscribe.filter: srcDataSources: defaultDS: url: jdbc:mysql://192.168.0.154:3306/digital_media?useUnicode=true username: root password: 123456 canalAdapters: - instance: MYSQL_154_TOPIC # canal instance Name or mq topic name 【example】 groups: - groupId: MYSQL_154_TOPIC outerAdapters: - name: logger - name: es7 key: canalToEs hosts: http://192.168.0.154:9200 # 127.0.0.1:9200 for rest mode properties: mode: rest # security.auth: test:123456 # only used for rest mode cluster.name: elasticSearch- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

第五步:adapter详情配置

配置canal-adapter/conf/es7/media_digital.yml文件的内容,没有的自己新建一个

这里面主要配置如何处理需要同步到es中的数据,注意destination,outerAdapterKey,groupId等数据要和前面配置对应dataSourceKey: defaultDS destination: MYSQL_154_TOPIC outerAdapterKey: canalToEs groupId: MYSQL_154_TOPIC esVersion: es7 esMapping: _index: media_digital _id: _id _type: _doc # upsert: true #pk: id #relations: # customer_order: # name: customer sql: "select a.file_id as _id,a.file_id as fileId,a.file_type_id as fileTypeId,a.activity_id as activityId,a.r_activities_id as rActivitiesId,a.file_code as fileCode, a.file_name as fileName,a.file_sys_name as fileSysName,a.file_size as fileSize,a.file_save_place as fileSavePlace,a.file_save_place_mp4 as fileSavePlaceMp4, a.create_place as createPlace,a.create_time as createTime,a.upload_time as uploadTime,a.upload_name as uploadName,a.upload_ip as uploadIp, a.upload_id as uploadId,a.storage_period as storagePeriod,a.retrieval_status as retrievalStatus,a.encryption_status as encryptionStatus, a.thumbnail_url as thumbnailUrl,a.remark as remark,a.is_enable isEnable from file_info a" # objFields: # _labels: array:; # etlCondition: "where c.id > {}" commitBatch: 3000- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

配置完成后,启动adapter即可实现mysql同步数据到es

四、其他问题

问题一、配置完成后es中无数据

当根据上述的步骤配置完成后,rocektmq中有数据,adapter也显示获取到了数据,但是es中无数据

可能原因:

- 查看adapter中sql是否有误,canal对于sql的有很多限制,比如关联表不能多于三张等要求,详情可以查看阿里开发文档,或者看canal项目介绍

- 查看索引是否创建成功,笔者之前尝试过在head里面直接创建索引,但是无法同步成功,是因为head中创建的索引是为空的无数据结构的索引。需要调用接口创建【附上一个创建接口,供大家参考,此接口参数与上面adapter配置中的sql无关】

http://192.168.0.154:9200/media_digital { "mappings": { "properties": { "fileId": { "type": "long" } "createPlace":{ "type": "text" }, "createTime":{ "type": "date", "format": "date_optional_time||epoch_millis" } } } }- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

问题二、是否可以进行全量同步

直接调用此接口即可

注意:es7为adapter中配置的详情文件夹名,canalToEs为key的配置内容,.yml文件名为es7文件夹中的文件名http://192.168.0.154:5934/etl/es7/canalToEs/media_digital.yml- 1

-

相关阅读:

(2023,GPT-4V,LLM,LMM,功能和应用)大型多模态模型的黎明:GPT-4V(ision) 的初步探索

互联网技术岗笔试基础题目练习①

SpringMVC中的自定义注解

你和工博会观展达人也许只差一篇攻略

【课程设计|MFC】学生成绩分析系统(含课程报告+源码)

Ubuntu安装git方法

对HFish蜜罐捕捉到的样本分析

②、企业快速开发平台Spring Cloud之HTML 元素

Java_自定义实体类的列表List<T>调用remove()失败讲解

Python爬虫:爬虫所需要的爬虫代理ip是什么?

- 原文地址:https://blog.csdn.net/qq_41444892/article/details/126638332