
Deep Learning (PyTorch): Multi-class Classification (Softmax Classifier)

Notes on the video by Bilibili uploader “刘二大人”

The code in this lesson changes in two main ways:

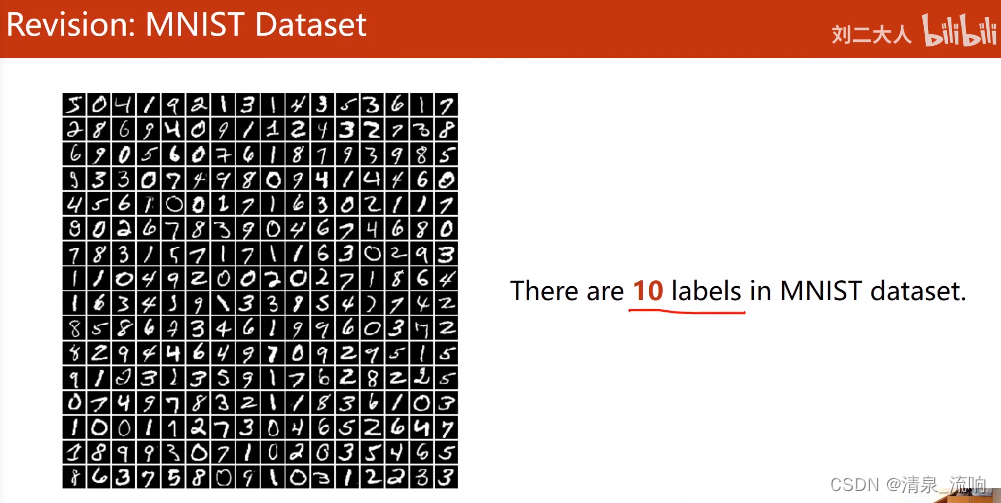

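First, the dataset: the lesson uses the built-in MNIST dataset directly, so the dataset and DataLoader are also the built-in ones, and there is no need to write a custom dataset class that inherits from Dataset;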

Second, training and testing are written as the functions train and test, which are simply called from the main block;

Besides the code this lesson sets out to implement, Mr. Liu spent the first half of the lesson on the following:

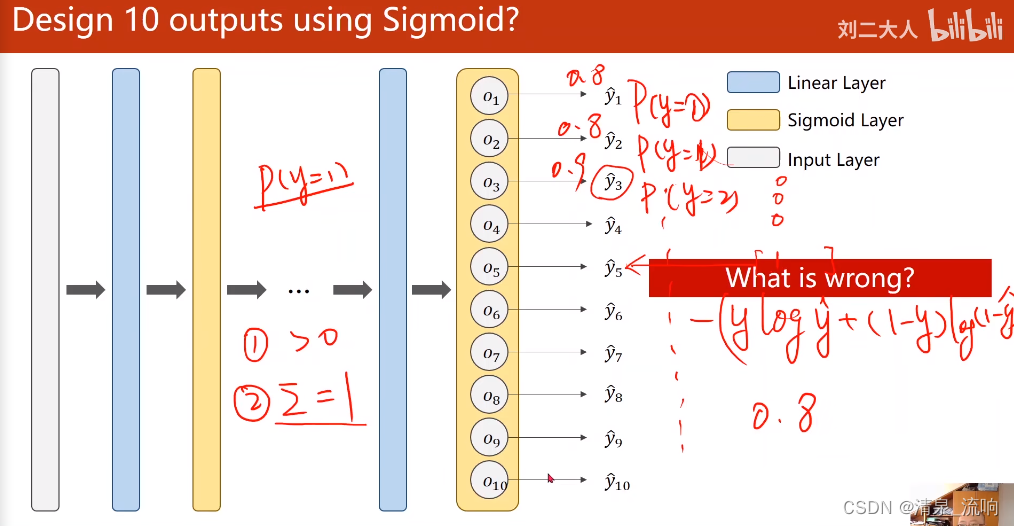

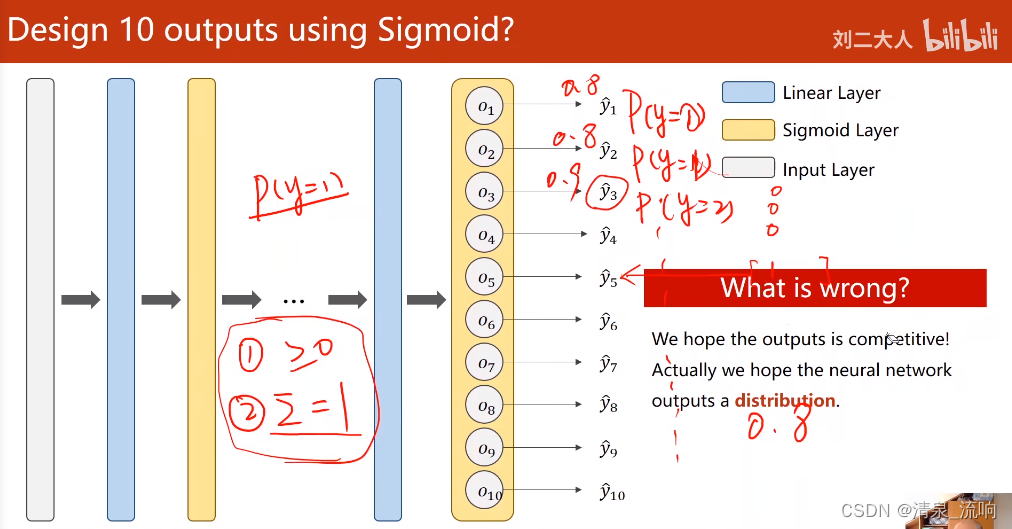

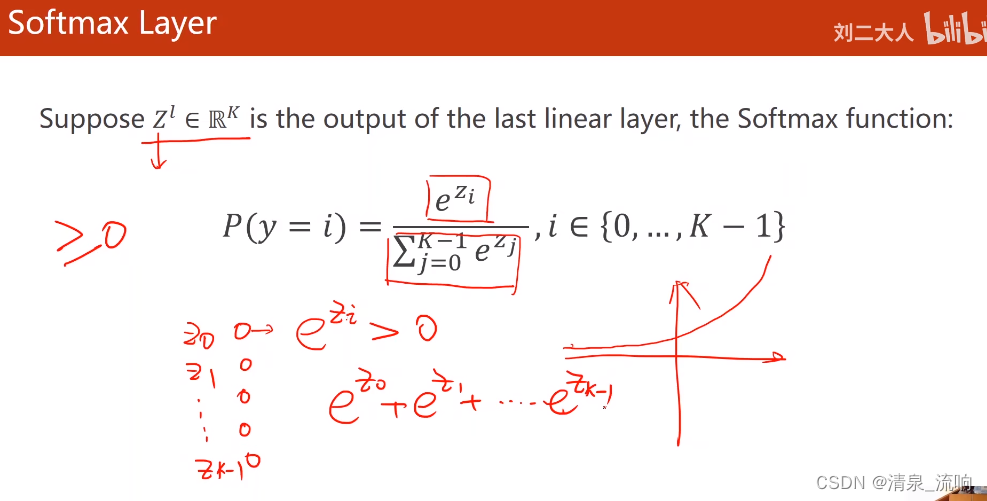

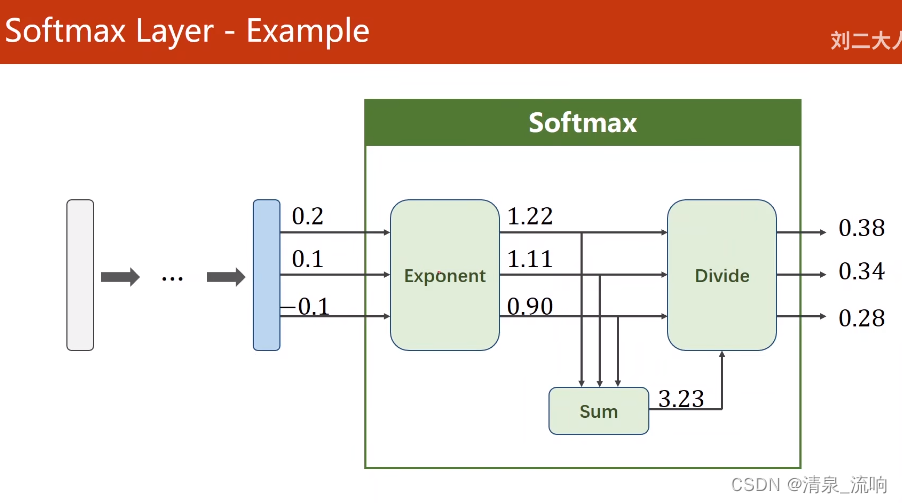

He took great care to explain how the softmax function works: y_pred = np.exp(z) / np.exp(z).sum();
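
The formula above can be tried out directly in NumPy. The following is only a minimal sketch: the scores in `z` are made up for illustration, and subtracting the maximum before exponentiating is a common numerical-stability trick that these notes do not mention. It does not change the result.

```python
import numpy as np

def softmax(z):
    """Softmax over a 1-D array of scores z."""
    # Shifting by the max leaves the output unchanged but avoids overflow in exp.
    shifted = z - np.max(z)
    exp_z = np.exp(shifted)
    return exp_z / exp_z.sum()

z = np.array([0.2, 0.1, -0.1])  # illustrative scores (an assumption, not from the notes)
y_pred = softmax(z)

# The outputs are positive and sum to 1, so they can be read as class probabilities.
print(y_pred, y_pred.sum())
```

The largest score still gets the largest probability, so softmax preserves the ranking of the classes.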

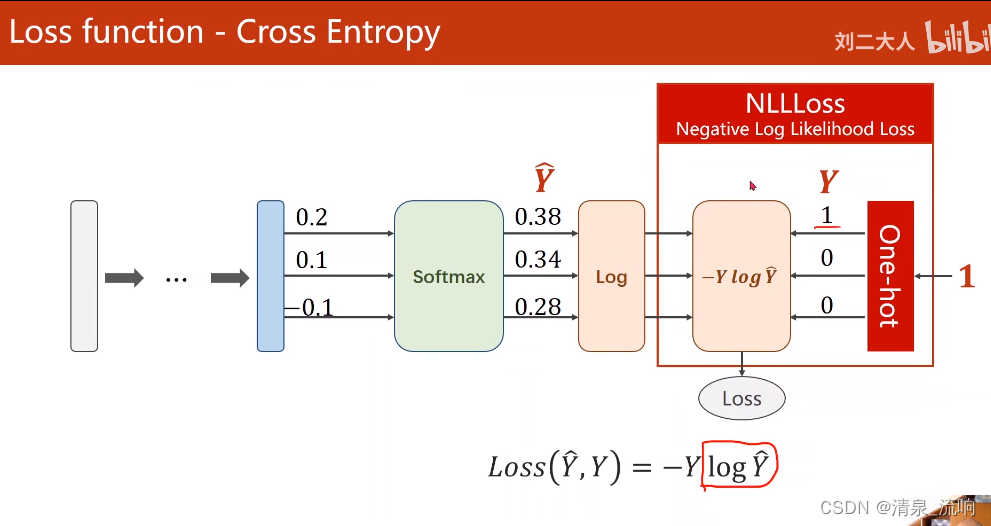

He also explained what the cross-entropy loss is, and made the meaning of this formula clear to me in a fluent, concise way: loss = (- y * np.log(y_pred)).sum();
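
To see what the formula does, here is a small NumPy sketch. It assumes `y` is a one-hot label and `y_pred` is a probability vector; both are hypothetical values chosen for illustration.

```python
import numpy as np

y = np.array([1.0, 0.0, 0.0])       # one-hot label: the true class is index 0
y_pred = np.array([0.7, 0.2, 0.1])  # hypothetical predicted distribution

loss = (-y * np.log(y_pred)).sum()

# Every term with y == 0 vanishes, so only the true class contributes:
# the loss is simply -log(probability assigned to the true class).
print(loss, -np.log(y_pred[0]))
```

The more probability the model puts on the correct class, the closer the loss gets to zero.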

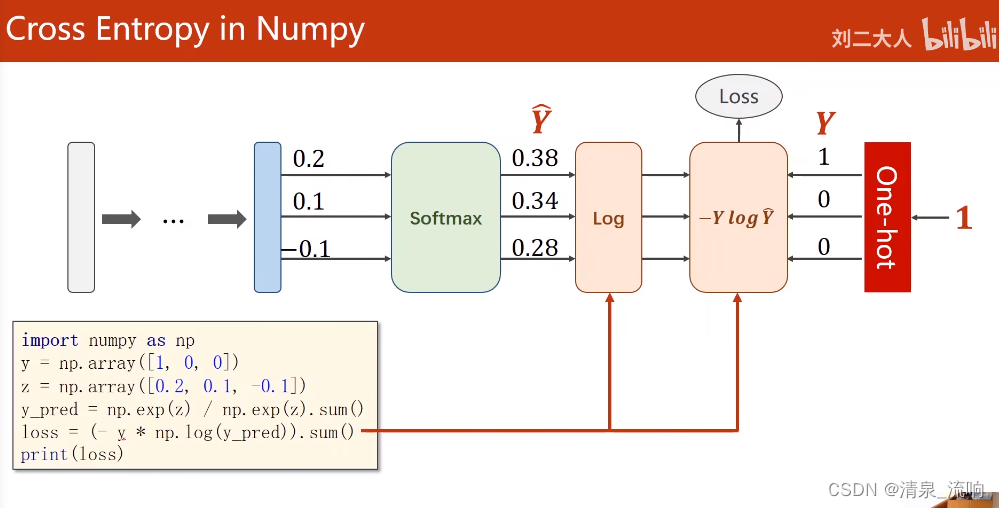

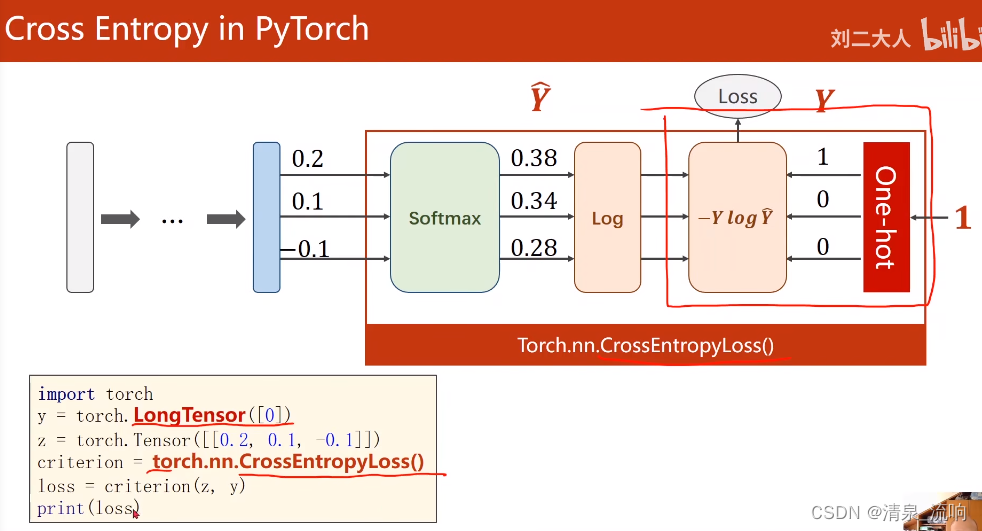

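Following the theory behind these two functions, he first implemented the formulas with NumPy operations, and only then called the ready-made functions in PyTorch;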

He also stressed the concept of NLLLoss and left a question to think about: why is CrossEntropyLoss <==> LogSoftmax + NLLLoss?
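
The equivalence can be checked numerically. The sketch below uses random logits and arbitrary targets (both are assumptions made for illustration) and compares `torch.nn.CrossEntropyLoss` with `torch.nn.LogSoftmax` followed by `torch.nn.NLLLoss`.

```python
import torch

torch.manual_seed(0)
logits = torch.randn(4, 10)          # 4 samples, 10 classes (made-up scores)
target = torch.tensor([3, 0, 9, 1])  # class indices, not one-hot vectors

# Path 1: cross-entropy applied directly to the raw logits.
ce = torch.nn.CrossEntropyLoss()(logits, target)

# Path 2: log-softmax first, then negative log-likelihood.
log_probs = torch.nn.LogSoftmax(dim=1)(logits)
nll = torch.nn.NLLLoss()(log_probs, target)

# Path 3: NLLLoss by hand: pick the log-probability of the true class, negate, average.
manual = -log_probs[torch.arange(4), target].mean()

print(ce.item(), nll.item(), manual.item())
```

All three values should agree up to floating-point error. NLLLoss only selects the log-probability of the target class and negates it, which is exactly the cross-entropy formula for a one-hot label.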

PyTorch itself provides two versions of the function, Softmax and LogSoftmax; the program is as follows:

    import torch
    import torch.nn.functional as F
    import torch.optim as optim  # optimizers
    from torch.utils.data import DataLoader
    from torchvision import datasets
    from torchvision import transforms  # image preprocessing utilities

    # prepare dataset
    batch_size = 64
    # Named `transform` so that the torchvision `transforms` module is not shadowed.
    transform = transforms.Compose([
        transforms.ToTensor(),  # image -> tensor of shape channels x height x width, values in [0, 1]
        transforms.Normalize((0.1307,), (0.3081,)),  # standardize with mean 0.1307 and std 0.3081
    ])

    train_dataset = datasets.MNIST(root='../dataset/mnist/',
                                   train=True,
                                   download=True,
                                   transform=transform)
    train_loader = DataLoader(train_dataset,
                              shuffle=True,
                              batch_size=batch_size)
    test_dataset = datasets.MNIST(root='../dataset/mnist/',
                                  train=False,
                                  download=True,
                                  transform=transform)
    test_loader = DataLoader(test_dataset,  # evaluate on the held-out test split
                             shuffle=False,
                             batch_size=batch_size)


    # design model using class
    class Net(torch.nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.l1 = torch.nn.Linear(784, 512)  # input dimension 784, output dimension 512
            self.l2 = torch.nn.Linear(512, 256)
            self.l3 = torch.nn.Linear(256, 128)
            self.l4 = torch.nn.Linear(128, 64)
            self.l5 = torch.nn.Linear(64, 10)

        def forward(self, x):
            # view reshapes the tensor; -1 lets it infer the mini-batch size,
            # so the first layer receives an N x 784 matrix
            x = x.view(-1, 784)
            x = F.relu(self.l1(x))  # apply ReLU to the output of each hidden layer
            x = F.relu(self.l2(x))
            x = F.relu(self.l3(x))
            x = F.relu(self.l4(x))
            # no activation on the last layer: CrossEntropyLoss applies log-softmax itself
            return self.l5(x)


    model = Net()

    # construct loss and optimizer
    criterion = torch.nn.CrossEntropyLoss()  # cross-entropy loss
    # lr is the learning rate; momentum helps the optimization along
    optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)


    # training cycle: forward, backward, update
    def train(epoch):
        running_loss = 0.0
        for batch_idx, data in enumerate(train_loader, 0):
            # one batch of inputs and labels
            inputs, target = data
            optimizer.zero_grad()
            # model predictions, shape (batch, 10)
            outputs = model(inputs)
            # cross-entropy between outputs (batch, 10) and target (batch,)
            loss = criterion(outputs, target)
            loss.backward()
            optimizer.step()

            running_loss += loss.item()
            if batch_idx % 300 == 299:  # print the average loss every 300 iterations
                print('[%d, %5d] loss:%.3f' % (epoch+1, batch_idx+1, running_loss/300))
                running_loss = 0.0


    def test():  # forward pass only, no backpropagation
        correct = 0
        total = 0
        with torch.no_grad():  # no gradients needed
            for data in test_loader:
                images, labels = data
                outputs = model(images)
                # dim=1 takes the max over the 10 class scores of each row;
                # returns (max values, indices of the max values)
                _, predicted = torch.max(outputs, dim=1)
                total += labels.size(0)  # labels has shape (N,), so labels.size(0) == N
                correct += (predicted == labels).sum().item()  # elementwise comparison, summed to a scalar
        print('accuracy on test set:%d %% ' % (100*correct/total))


    if __name__ == '__main__':
        for epoch in range(10):
            train(epoch)
            test()
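
The accuracy computation in test relies on how `torch.max` behaves with `dim=1`. The following toy sketch, with made-up scores and labels, shows the shapes and values involved.

```python
import torch

# Made-up scores for 3 samples and 3 classes (an assumption for illustration).
outputs = torch.tensor([[0.1, 2.0, -1.0],
                        [1.5, 0.3,  0.2],
                        [0.0, 0.1,  3.0]])
labels = torch.tensor([1, 0, 1])

# dim=1 reduces across the class scores of each row and returns
# (max values, indices of the max values); the indices are the predicted classes.
values, predicted = torch.max(outputs, dim=1)

correct = (predicted == labels).sum().item()  # number of matching predictions
total = labels.size(0)                        # number of samples in the batch
print(predicted.tolist(), correct, total)     # [1, 0, 2] 2 3
```

Here the third sample is predicted as class 2 while its label is 1, so two of the three predictions are correct.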

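The output of the run is as follows:

Screenshot from the video:

The last layer is not activated; its output goes straight into the cross-entropy loss.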
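
One way to see why the last layer should stay linear is to compare the loss on raw logits with the loss obtained after applying softmax first. This is a hedged sketch with made-up numbers, relying only on the fact that `torch.nn.CrossEntropyLoss` already applies log-softmax internally.

```python
import torch
import torch.nn.functional as F

logits = torch.tensor([[2.0, 0.5, -1.0]])  # made-up scores for one sample
target = torch.tensor([0])                 # the true class is index 0
criterion = torch.nn.CrossEntropyLoss()

# Intended use: pass the raw scores of the last linear layer.
loss_logits = criterion(logits, target)

# Mistake: softmax is applied twice (once here, once inside the loss),
# which flattens the distribution and distorts the loss.
loss_double = criterion(F.softmax(logits, dim=1), target)

print(loss_logits.item(), loss_double.item())
```

The two values differ, and in this example the doubly-normalized version reports a larger loss even though the prediction is the same.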


- Original article: https://blog.csdn.net/qq_42233059/article/details/126559680