[Dive into Deep Learning] A Detailed Study of Convolutional Neural Networks (CNNs)

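🌊1. Research Objectives

- Feature extraction and pattern recognition: CNNs are widely used in computer vision to extract features from images and to recognize patterns;

- Object detection and recognition: CNNs perform well on object detection and object recognition tasks;

- Image segmentation and semantic analysis: CNNs can be used for image segmentation, that is, partitioning an image into regions or objects and analyzing their semantics;

- Image generation and style transfer: CNNs can also be used for image generation and style transfer, for example generating realistic images or transferring the style of one image to another.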

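🌊2. Preparation

- Install the PyTorch build that matches the GPU so that the code can run on the GPU;

- Set up an environment for running Python, Jupyter Notebook, and the related libraries.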

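🌊3. Research Content

Start Jupyter Notebook and create a new ipynb file using the newly added pytorch environment. To check that the environment is set up properly, run import torch followed by torch.cuda.is_available(). If it returns True, the environment is configured correctly for GPU use. If it returns False but torch imports without error, PyTorch is installed correctly, but the code will run on the CPU.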
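The check described above can be wrapped in a small helper. This is only a minimal sketch: the function name describe_torch_env is hypothetical, and the helper assumes nothing beyond torch.cuda.is_available() and torch.cuda.get_device_name(), falling back to a plain message when torch is not installed.

```python
import importlib.util


def describe_torch_env():
    """Return a one-line summary of the PyTorch setup (hypothetical helper)."""
    # find_spec returns None when the package cannot be found
    if importlib.util.find_spec("torch") is None:
        return "torch is not installed in this environment"
    import torch
    if torch.cuda.is_available():
        # Index 0 is the first visible CUDA device
        name = torch.cuda.get_device_name(0)
        return f"torch {torch.__version__}: GPU available ({name})"
    return f"torch {torch.__version__}: no GPU detected, code will run on the CPU"


print(describe_torch_env())
```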

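🌍3.1 Convolutional Neural Networks

(1) Create a new ipynb file in Jupyter Notebook using the newly added pytorch environment. The code for the LeNet experiment and the results of the exercises are given below.

The code is as follows:

Import the required libraries: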

    %matplotlib inline
    import torch
    from torch import nn
    from d2l import torch as d2l

LeNet

    net = nn.Sequential(
        nn.Conv2d(1, 6, kernel_size=5, padding=2), nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2),
        nn.Conv2d(6, 16, kernel_size=5), nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2),
        nn.Flatten(),
        nn.Linear(16 * 5 * 5, 120), nn.Sigmoid(),
        nn.Linear(120, 84), nn.Sigmoid(),
        nn.Linear(84, 10))

    X = torch.rand(size=(1, 1, 28, 28), dtype=torch.float32)
    for layer in net:
        X = layer(X)
        print(layer.__class__.__name__, 'output shape: \t', X.shape)
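The spatial sizes that this loop prints can also be worked out by hand, which explains why the first linear layer expects 16 * 5 * 5 inputs. The sketch below uses the standard output-size formula for convolution and pooling; the helper name out_size and the layer table are illustrative and not part of d2l or PyTorch.

```python
def out_size(size, kernel, padding=0, stride=1):
    """Output height/width of a convolution or pooling layer on a square input."""
    return (size + 2 * padding - kernel) // stride + 1


# (layer name, kernel, padding, stride) for the spatial layers of LeNet
layers = [
    ("Conv2d(1, 6)", 5, 2, 1),
    ("AvgPool2d", 2, 0, 2),
    ("Conv2d(6, 16)", 5, 0, 1),
    ("AvgPool2d", 2, 0, 2),
]

size = 28  # Fashion-MNIST images are 28 x 28
sizes = []
for name, kernel, padding, stride in layers:
    size = out_size(size, kernel, padding, stride)
    sizes.append(size)
    print(f"{name:<14} -> {size} x {size}")

flattened = 16 * size * size  # 16 output channels of the last convolution
print("Flatten ->", flattened)  # 400
```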

Model training

    batch_size = 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size=batch_size)

    def evaluate_accuracy_gpu(net, data_iter, device=None): #@save
        """Compute the accuracy of a model on a dataset using a GPU."""
        if isinstance(net, nn.Module):
            net.eval()  # Switch to evaluation mode
            if not device:
                device = next(iter(net.parameters())).device
        # Number of correct predictions, total number of predictions
        metric = d2l.Accumulator(2)
        with torch.no_grad():
            for X, y in data_iter:
                if isinstance(X, list):
                    # Needed for BERT fine-tuning (introduced later)
                    X = [x.to(device) for x in X]
                else:
                    X = X.to(device)
                y = y.to(device)
                metric.add(d2l.accuracy(net(X), y), y.numel())
        return metric[0] / metric[1]

    #@save
    def train_ch6(net, train_iter, test_iter, num_epochs, lr, device):
        """Train a model on a GPU (defined in Chapter 6)."""
        def init_weights(m):
            if type(m) == nn.Linear or type(m) == nn.Conv2d:
                nn.init.xavier_uniform_(m.weight)
        net.apply(init_weights)
        print('training on', device)
        net.to(device)
        optimizer = torch.optim.SGD(net.parameters(), lr=lr)
        loss = nn.CrossEntropyLoss()
        animator = d2l.Animator(xlabel='epoch', xlim=[1, num_epochs],
                                legend=['train loss', 'train acc', 'test acc'])
        timer, num_batches = d2l.Timer(), len(train_iter)
        for epoch in range(num_epochs):
            # Sum of training loss, sum of training accuracy, number of examples
            metric = d2l.Accumulator(3)
            net.train()
            for i, (X, y) in enumerate(train_iter):
                timer.start()
                optimizer.zero_grad()
                X, y = X.to(device), y.to(device)
                y_hat = net(X)
                l = loss(y_hat, y)
                l.backward()
                optimizer.step()
                with torch.no_grad():
                    metric.add(l * X.shape[0], d2l.accuracy(y_hat, y), X.shape[0])
                timer.stop()
                train_l = metric[0] / metric[2]
                train_acc = metric[1] / metric[2]
                if (i + 1) % (num_batches // 5) == 0 or i == num_batches - 1:
                    animator.add(epoch + (i + 1) / num_batches,
                                 (train_l, train_acc, None))
            test_acc = evaluate_accuracy_gpu(net, test_iter)
            animator.add(epoch + 1, (None, None, test_acc))
        print(f'loss {train_l:.3f}, train acc {train_acc:.3f}, '
              f'test acc {test_acc:.3f}')
        print(f'{metric[2] * num_epochs / timer.sum():.1f} examples/sec '
              f'on {str(device)}')

    lr, num_epochs = 0.9, 10
    train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

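🌍3.2 Exercises

1. What happens if the average pooling layers are replaced with max pooling layers?

LeNet uses average pooling layers. Replacing them with max pooling layers affects the network's performance.

Specifically, max pooling makes the network focus more on the salient features of the image. A max pooling layer outputs the largest value in each pooling window, whereas an average pooling layer outputs the mean of the window. Max pooling therefore picks up prominent features such as edges and textures more readily.

In the experiments, using max pooling may raise the network's accuracy, but this depends on the dataset and the task. Average pooling can be the better choice in some cases because it yields a smoother feature representation.

In short, replacing average pooling with max pooling in LeNet may improve performance, especially on tasks that depend on salient image features. For other tasks and datasets, the pooling method should be chosen by experiment.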
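The difference between the two pooling rules is easy to see on a tiny feature map. The following is a plain-Python sketch of non-overlapping 2x2 pooling; the helper pool2x2 and the sample values are made up for illustration and are not how nn.MaxPool2d or nn.AvgPool2d are implemented.

```python
def pool2x2(grid, reduce_fn):
    """Apply non-overlapping 2x2 pooling (stride 2) to a 2-D list."""
    pooled = []
    for r in range(0, len(grid) - 1, 2):
        row = []
        for c in range(0, len(grid[0]) - 1, 2):
            window = [grid[r][c], grid[r][c + 1],
                      grid[r + 1][c], grid[r + 1][c + 1]]
            row.append(reduce_fn(window))
        pooled.append(row)
    return pooled


def mean(values):
    return sum(values) / len(values)


# Made-up 4x4 feature map with one strong response (9) in the top-left window
feature_map = [
    [1, 2, 0, 0],
    [3, 9, 0, 1],
    [0, 0, 4, 4],
    [0, 1, 4, 4],
]

max_pooled = pool2x2(feature_map, max)
avg_pooled = pool2x2(feature_map, mean)
print(max_pooled)  # [[9, 1], [1, 4]]
print(avg_pooled)  # [[3.75, 0.25], [0.25, 4.0]]
```

Max pooling passes the peak value 9 through unchanged, while average pooling dilutes it to 3.75 by mixing in its weaker neighbours.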

To replace average pooling with max pooling in LeNet, simply change nn.AvgPool2d to nn.MaxPool2d. The modified code is as follows:

    import torch
    from torch import nn
    from d2l import torch as d2l

    net = nn.Sequential(
        nn.Conv2d(1, 6, kernel_size=5, padding=2), nn.Sigmoid(),
        nn.MaxPool2d(kernel_size=2, stride=2),  # replaced with max pooling
        nn.Conv2d(6, 16, kernel_size=5), nn.Sigmoid(),
        nn.MaxPool2d(kernel_size=2, stride=2),  # replaced with max pooling
        nn.Flatten(),
        nn.Linear(16 * 5 * 5, 120), nn.Sigmoid(),
        nn.Linear(120, 84), nn.Sigmoid(),
        nn.Linear(84, 10)
    )

    X = torch.rand(size=(1, 1, 28, 28), dtype=torch.float32)
    for layer in net:
        X = layer(X)
        print(layer.__class__.__name__, 'output shape: \t', X.shape)

    batch_size = 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size=batch_size)

    def evaluate_accuracy_gpu(net, data_iter, device=None):
        """Compute the accuracy of a model on a dataset using a GPU."""
        if isinstance(net, nn.Module):
            net.eval()  # Switch to evaluation mode
            if not device:
                device = next(iter(net.parameters())).device
        # Number of correct predictions, total number of predictions
        metric = d2l.Accumulator(2)
        with torch.no_grad():
            for X, y in data_iter:
                if isinstance(X, list):
                    # Needed for BERT fine-tuning (introduced later)
                    X = [x.to(device) for x in X]
                else:
                    X = X.to(device)
                y = y.to(device)
                metric.add(d2l.accuracy(net(X), y), y.numel())
        return metric[0] / metric[1]

    def train_ch6(net, train_iter, test_iter, num_epochs, lr, device):
        """Train a model on a GPU (defined in Chapter 6)."""
        def init_weights(m):
            if type(m) == nn.Linear or type(m) == nn.Conv2d:
                nn.init.xavier_uniform_(m.weight)
        net.apply(init_weights)
        print('training on', device)
        net.to(device)
        optimizer = torch.optim.SGD(net.parameters(), lr=lr)
        loss = nn.CrossEntropyLoss()
        animator = d2l.Animator(xlabel='epoch', xlim=[1, num_epochs],
                                legend=['train loss', 'train acc', 'test acc'])
        timer, num_batches = d2l.Timer(), len(train_iter)
        for epoch in range(num_epochs):
            # Sum of training loss, sum of training accuracy, number of examples
            metric = d2l.Accumulator(3)
            net.train()
            for i, (X, y) in enumerate(train_iter):
                timer.start()
                optimizer.zero_grad()
                X, y = X.to(device), y.to(device)
                y_hat = net(X)
                l = loss(y_hat, y)
                l.backward()
                optimizer.step()
                with torch.no_grad():
                    metric.add(l * X.shape[0], d2l.accuracy(y_hat, y), X.shape[0])
                timer.stop()
                train_l = metric[0] / metric[2]
                train_acc = metric[1] / metric[2]
                if (i + 1) % (num_batches // 5) == 0 or i == num_batches - 1:
                    animator.add(epoch + (i + 1) / num_batches,
                                 (train_l, train_acc, None))
            test_acc = evaluate_accuracy_gpu(net, test_iter)
            animator.add(epoch + 1, (None, None, test_acc))
        print(f'loss {train_l:.3f}, train acc {train_acc:.3f}, '
              f'test acc {test_acc:.3f}')
        print(f'{metric[2] * num_epochs / timer.sum():.1f} examples/sec '
              f'on {str(device)}')

    lr, num_epochs = 0.9, 10
    train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

2. Try to build a more complex network based on LeNet to improve its accuracy.

2.1. Adjust the convolution window size.

    import torch
    from torch import nn
    from d2l import torch as d2l

    class ComplexLeNet(nn.Module):
        def __init__(self):
            super().__init__()
            self.conv1 = nn.Conv2d(1, 6, kernel_size=5, padding=2)
            self.relu1 = nn.ReLU()
            self.maxpool1 = nn.MaxPool2d(kernel_size=2, stride=2)
            self.conv2 = nn.Conv2d(6, 16, kernel_size=3, padding=1)
            self.relu2 = nn.ReLU()
            self.maxpool2 = nn.MaxPool2d(kernel_size=2, stride=2)
            self.conv3 = nn.Conv2d(16, 32, kernel_size=3, padding=1)
            self.relu3 = nn.ReLU()
            self.maxpool3 = nn.MaxPool2d(kernel_size=2, stride=2)
            self.flatten = nn.Flatten()
            self.fc1 = nn.Linear(32 * 3 * 3, 120)
            self.relu4 = nn.ReLU()
            self.fc2 = nn.Linear(120, 84)
            self.relu5 = nn.ReLU()
            self.fc3 = nn.Linear(84, 10)

        def forward(self, x):
            out = self.maxpool1(self.relu1(self.conv1(x)))
            out = self.maxpool2(self.relu2(self.conv2(out)))
            out = self.maxpool3(self.relu3(self.conv3(out)))
            out = self.flatten(out)
            out = self.relu4(self.fc1(out))
            out = self.relu5(self.fc2(out))
            out = self.fc3(out)
            return out

    net = ComplexLeNet()
    # Print the network structure
    print(net)

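This network adds one more convolutional layer and one more pooling layer. The first convolutional layer uses a 5x5 window, and the second and third use 3x3 windows. Compared with the original LeNet, it also uses ReLU instead of Sigmoid and max pooling instead of average pooling. The extra layer increases the depth and complexity of the network and strengthens its feature extraction. The structure and hyperparameters can be tuned further to improve accuracy.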
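One way to see what the smaller window buys is to count parameters. The sketch below counts weights plus biases of a square convolution; the helper conv_params is illustrative only, and the channel counts are the ones used in the network above.

```python
def conv_params(in_channels, out_channels, kernel):
    """Number of weights plus biases in a square 2-D convolution."""
    return out_channels * (in_channels * kernel * kernel + 1)


# Second convolution, 6 -> 16 channels
params_5x5 = conv_params(6, 16, 5)   # 5x5 window, as in the original LeNet
params_3x3 = conv_params(6, 16, 3)   # 3x3 window, as in the network above
# Added third convolution, 16 -> 32 channels with a 3x3 window
params_conv3 = conv_params(16, 32, 3)

print(params_5x5, params_3x3, params_conv3)  # 2416 880 4640
```

Shrinking the second convolution from 5x5 to 3x3 cuts its parameters from 2416 to 880, which leaves room for the additional third convolution.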

2.2. Adjust the number of output channels.

    import torch
    from torch import nn
    from d2l import torch as d2l

    class ComplexLeNet(nn.Module):
        def __init__(self):
            super().__init__()
            self.conv1 = nn.Conv2d(1, 10, kernel_size=5, padding=2)
            self.relu1 = nn.ReLU()
            self.maxpool1 = nn.MaxPool2d(kernel_size=2, stride=2)
            self.conv2 = nn.Conv2d(10, 20, kernel_size=3, padding=1)
            self.relu2 = nn.ReLU()
            self.maxpool2 = nn.MaxPool2d(kernel_size=2, stride=2)
            self.conv3 = nn.Conv2d(20, 40, kernel_size=3, padding=1)
            self.relu3 = nn.ReLU()
            self.maxpool3 = nn.MaxPool2d(kernel_size=2, stride=2)
            self.flatten = nn.Flatten()
            self.fc1 = nn.Linear(40 * 3 * 3, 120)
            self.relu4 = nn.ReLU()
            self.fc2 = nn.Linear(120, 84)
            self.relu5 = nn.ReLU()
            self.fc3 = nn.Linear(84, 10)

        def forward(self, x):
            out = self.maxpool1(self.relu1(self.conv1(x)))
            out = self.maxpool2(self.relu2(self.conv2(out)))
            out = self.maxpool3(self.relu3(self.conv3(out)))
            out = self.flatten(out)
            out = self.relu4(self.fc1(out))
            out = self.relu5(self.fc2(out))
            out = self.fc3(out)
            return out

    net = ComplexLeNet()
    # Print the network structure
    print(net)

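In this example, the number of output channels of every convolutional layer is increased: the first layer outputs 10 channels, the second 20, and the third 40. With more output channels, the network can capture and represent more features of the input data. The structure and hyperparameters can be tuned further to improve accuracy.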

2.3. Adjust the activation function (e.g., ReLU).

    import torch
    from torch import nn
    from d2l import torch as d2l

    class ComplexLeNet(nn.Module):
        def __init__(self):
            super().__init__()
            self.conv1 = nn.Conv2d(1, 6, kernel_size=5, padding=2)
            self.relu1 = nn.LeakyReLU()
            self.maxpool1 = nn.MaxPool2d(kernel_size=2, stride=2)
            self.conv2 = nn.Conv2d(6, 16, kernel_size=3, padding=1)
            self.relu2 = nn.LeakyReLU()
            self.maxpool2 = nn.MaxPool2d(kernel_size=2, stride=2)
            self.conv3 = nn.Conv2d(16, 32, kernel_size=3, padding=1)
            self.relu3 = nn.LeakyReLU()
            self.maxpool3 = nn.MaxPool2d(kernel_size=2, stride=2)
            self.flatten = nn.Flatten()
            self.fc1 = nn.Linear(32 * 3 * 3, 120)
            self.relu4 = nn.LeakyReLU()
            self.fc2 = nn.Linear(120, 84)
            self.relu5 = nn.LeakyReLU()
            self.fc3 = nn.Linear(84, 10)

        def forward(self, x):
            out = self.maxpool1(self.relu1(self.conv1(x)))
            out = self.maxpool2(self.relu2(self.conv2(out)))
            out = self.maxpool3(self.relu3(self.conv3(out)))
            out = self.flatten(out)
            out = self.relu4(self.fc1(out))
            out = self.relu5(self.fc2(out))
            out = self.fc3(out)
            return out

    net = ComplexLeNet()
    # Print the network structure
    print(net)
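The network above uses nn.LeakyReLU in place of ReLU. As a rough illustration of the difference, the scalar functions below mirror the two activations. They are hand-written sketches rather than the PyTorch implementations, and the slope 0.01 is assumed here because it is the default negative_slope of nn.LeakyReLU.

```python
def relu(x):
    """ReLU: negative inputs are clamped to zero."""
    return max(0.0, x)


def leaky_relu(x, negative_slope=0.01):
    """Leaky ReLU: negative inputs are scaled by a small slope instead of zeroed."""
    return x if x >= 0 else negative_slope * x


inputs = [-2.0, -0.5, 0.0, 1.5]
relu_out = [relu(x) for x in inputs]
leaky_out = [leaky_relu(x) for x in inputs]
print(relu_out)   # [0.0, 0.0, 0.0, 1.5]
print(leaky_out)  # [-0.02, -0.005, 0.0, 1.5]
```

Because the leaky variant keeps a small nonzero slope for negative inputs, units that receive negative pre-activations still pass a gradient during training.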

2.4. Adjust the number of convolutional layers.

    import torch
    from torch import nn
    from d2l import torch as d2l

    class ComplexLeNet(nn.Module):
        def __init__(self):
            super().__init__()
            self.features = nn.Sequential(
                nn.Conv2d(1, 32, kernel_size=3, padding=1),     # convolutional layer 1
                nn.ReLU(),
                nn.Conv2d(32, 64, kernel_size=3, padding=1),    # convolutional layer 2
                nn.ReLU(),
                nn.MaxPool2d(kernel_size=2, stride=2),          # pooling layer 1
                nn.Conv2d(64, 128, kernel_size=3, padding=1),   # convolutional layer 3
                nn.ReLU(),
                nn.Conv2d(128, 256, kernel_size=3, padding=1),  # convolutional layer 4
                nn.ReLU(),
                nn.MaxPool2d(kernel_size=2, stride=2),          # pooling layer 2
            )
            self.fc = nn.Sequential(
                nn.Linear(256 * 7 * 7, 512),  # fully connected layer 1
                nn.ReLU(),
                nn.Linear(512, 256),          # fully connected layer 2
                nn.ReLU(),
                nn.Linear(256, 10)            # output layer
            )

        def forward(self, x):
            x = self.features(x)
            x = torch.flatten(x, 1)
            x = self.fc(x)
            return x

    # Create an instance of the more complex LeNet model
    net = ComplexLeNet()
    # Print the network structure
    print(net)

2.5. Adjust the number of fully connected layers.

    import torch
    from torch import nn
    from d2l import torch as d2l

    class ComplexLeNet(nn.Module):
        def __init__(self):
            super().__init__()
            self.features = nn.Sequential(
                nn.Conv2d(1, 32, kernel_size=3, padding=1),     # convolutional layer 1
                nn.ReLU(),
                nn.Conv2d(32, 64, kernel_size=3, padding=1),    # convolutional layer 2
                nn.ReLU(),
                nn.MaxPool2d(kernel_size=2, stride=2),          # pooling layer 1
                nn.Conv2d(64, 128, kernel_size=3, padding=1),   # convolutional layer 3
                nn.ReLU(),
                nn.Conv2d(128, 256, kernel_size=3, padding=1),  # convolutional layer 4
                nn.ReLU(),
                nn.MaxPool2d(kernel_size=2, stride=2),          # pooling layer 2
            )
            self.fc = nn.Sequential(
                nn.Linear(256 * 7 * 7, 512),  # fully connected layer 1
                nn.ReLU(),
                nn.Linear(512, 256),          # fully connected layer 2
                nn.ReLU(),
                nn.Linear(256, 128),          # fully connected layer 3
                nn.ReLU(),
                nn.Linear(128, 10)            # output layer
            )

        def forward(self, x):
            x = self.features(x)
            x = torch.flatten(x, 1)
            x = self.fc(x)
            return x

    # Create an instance of the more complex LeNet model
    net = ComplexLeNet()
    # Print the network structure
    print(net)
    # The training and evaluation code is similar to the earlier examples
    # and can be adapted as needed

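2.6. Adjust the learning rate and other training details (e.g., initialization and number of epochs).

The learning rate is changed to 0.001 and the number of epochs to 20. Weights are initialized by the init_weights function, which applies Xavier initialization to the linear and convolutional layers.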

    import torch
    from torch import nn
    from d2l import torch as d2l

    net = nn.Sequential(
        nn.Conv2d(1, 6, kernel_size=5, padding=2), nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2),
        nn.Conv2d(6, 16, kernel_size=5), nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2),
        nn.Flatten(),
        nn.Linear(16 * 5 * 5, 120), nn.Sigmoid(),
        nn.Linear(120, 84), nn.Sigmoid(),
        nn.Linear(84, 10))

    X = torch.rand(size=(1, 1, 28, 28), dtype=torch.float32)
    for layer in net:
        X = layer(X)
        print(layer.__class__.__name__, 'output shape: \t', X.shape)

    batch_size = 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size=batch_size)

    def evaluate_accuracy_gpu(net, data_iter, device=None): #@save
        """Compute the accuracy of a model on a dataset using a GPU."""
        if isinstance(net, nn.Module):
            net.eval()  # Switch to evaluation mode
            if not device:
                device = next(iter(net.parameters())).device
        # Number of correct predictions, total number of predictions
        metric = d2l.Accumulator(2)
        with torch.no_grad():
            for X, y in data_iter:
                if isinstance(X, list):
                    # Needed for BERT fine-tuning (introduced later)
                    X = [x.to(device) for x in X]
                else:
                    X = X.to(device)
                y = y.to(device)
                metric.add(d2l.accuracy(net(X), y), y.numel())
        return metric[0] / metric[1]

    #@save
    def train_ch6(net, train_iter, test_iter, num_epochs, lr, device):
        """Train a model on a GPU (defined in Chapter 6)."""
        def init_weights(m):
            if type(m) == nn.Linear or type(m) == nn.Conv2d:
                nn.init.xavier_uniform_(m.weight)
        net.apply(init_weights)
        print('training on', device)
        net.to(device)
        optimizer = torch.optim.SGD(net.parameters(), lr=lr)
        loss = nn.CrossEntropyLoss()
        animator = d2l.Animator(xlabel='epoch', xlim=[1, num_epochs],
                                legend=['train loss', 'train acc', 'test acc'])
        timer, num_batches = d2l.Timer(), len(train_iter)
        for epoch in range(num_epochs):
            # Sum of training loss, sum of training accuracy, number of examples
            metric = d2l.Accumulator(3)
            net.train()
            for i, (X, y) in enumerate(train_iter):
                timer.start()
                optimizer.zero_grad()
                X, y = X.to(device), y.to(device)
                y_hat = net(X)
                l = loss(y_hat, y)
                l.backward()
                optimizer.step()
                with torch.no_grad():
                    metric.add(l * X.shape[0], d2l.accuracy(y_hat, y), X.shape[0])
                timer.stop()
                train_l = metric[0] / metric[2]
                train_acc = metric[1] / metric[2]
                if (i + 1) % (num_batches // 5) == 0 or i == num_batches - 1:
                    animator.add(epoch + (i + 1) / num_batches,
                                 (train_l, train_acc, None))
            test_acc = evaluate_accuracy_gpu(net, test_iter)
            animator.add(epoch + 1, (None, None, test_acc))
        print(f'loss {train_l:.3f}, train acc {train_acc:.3f}, '
              f'test acc {test_acc:.3f}')
        print(f'{metric[2] * num_epochs / timer.sum():.1f} examples/sec '
              f'on {str(device)}')

    lr, num_epochs = 0.001, 20
    train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())
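How far a given learning rate moves the parameters in a fixed number of updates can be illustrated on a toy problem. The sketch below runs plain gradient descent on f(w) = w**2; it is a deliberately simplified stand-in and says nothing about the actual loss curves of LeNet. The helper name gradient_descent and the starting point are assumptions made for the illustration.

```python
def gradient_descent(lr, steps, w0=1.0):
    """Minimise f(w) = w**2 (gradient 2*w) with plain gradient descent."""
    w = w0
    for _ in range(steps):
        w -= lr * 2 * w  # one update step
    return w


w_large = gradient_descent(lr=0.9, steps=20)    # each step multiplies w by -0.8
w_small = gradient_descent(lr=0.001, steps=20)  # each step multiplies w by 0.998

print(f"lr=0.9   -> w = {w_large:.4f}")
print(f"lr=0.001 -> w = {w_small:.4f}")
```

In this toy setting the large learning rate oscillates but ends close to the minimum at 0, while the small one has barely moved after 20 steps. When lowering the learning rate of a real network, it is worth checking the training curve to confirm that the chosen number of epochs is still enough.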

3.在MNIST数据集上尝试以上改进的网络。

- import torch

- from torch import nn

- from d2l import torch as d2l

- # 定义更复杂的LeNet网络

- net = nn.Sequential(

- nn.Conv2d(1, 10, kernel_size=5),

- nn.ReLU(),

- nn.MaxPool2d(kernel_size=2, stride=2),

- nn.Conv2d(10, 20, kernel_size=5),

- nn.ReLU(),

- nn.MaxPool2d(kernel_size=2, stride=2),

- nn.Flatten(),

- nn.Linear(320, 120),

- nn.ReLU(),

- nn.Linear(120, 84),

- nn.ReLU(),

- nn.Linear(84, 10)

- )

- # 数据集加载和准备

- batch_size = 256

- train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size=batch_size)

- # 定义训练函数

- def train_ch6(net, train_iter, test_iter, num_epochs, lr, device):

- def init_weights(m):

- if type(m) == nn.Linear or type(m) == nn.Conv2d:

- nn.init.xavier_uniform_(m.weight)

- net.apply(init_weights)

- net.to(device)

- optimizer = torch.optim.SGD(net.parameters(), lr=lr)

- loss = nn.CrossEntropyLoss()

- animator = d2l.Animator(xlabel='epoch', xlim=[1, num_epochs], legend=['train loss', 'train acc', 'test acc'])

- timer, num_batches = d2l.Timer(), len(train_iter)

- for epoch in range(num_epochs):

- metric = d2l.Accumulator(3)

- net.train()

- for i, (X, y) in enumerate(train_iter):

- timer.start()

- optimizer.zero_grad()

- X, y = X.to(device), y.to(device)

- y_hat = net(X)

- l = loss(y_hat, y)

- l.backward()

- optimizer.step()

- with torch.no_grad():

- metric.add(l * X.shape[0], d2l.accuracy(y_hat, y), X.shape[0])

- timer.stop()

- train_loss = metric[0] / metric[2]

- train_accuracy = metric[1] / metric[2]

- if (i + 1) % (num_batches // 5) == 0 or i == num_batches - 1:

- animator.add(epoch + (i + 1) / num_batches, (train_loss, train_accuracy, None))

- test_accuracy = evaluate_accuracy_gpu(net, test_iter, device)

- animator.add(epoch + 1, (None, None, test_accuracy))

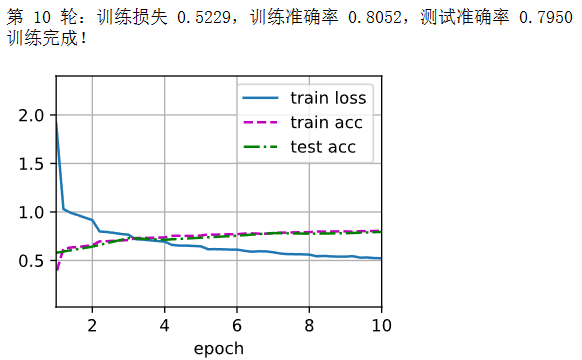

- print(f"第 {epoch+1} 轮:训练损失 {train_loss:.4f},训练准确率 {train_accuracy:.4f},测试准确率 {test_accuracy:.4f}")

- print("训练完成!")

- # 模型训练

- lr, num_epochs = 0.01, 10

- device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

- train_ch6(net, train_iter, test_iter, num_epochs, lr, device)

4. Display the activations of the first and second layers of LeNet for different inputs (e.g., sweaters and coats).

    import torch
    from torch import nn
    from d2l import torch as d2l

    # Define the LeNet network
    net = nn.Sequential(
        nn.Conv2d(1, 6, kernel_size=5, padding=2),
        nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2),
        nn.Conv2d(6, 16, kernel_size=5),
        nn.Sigmoid(),
        nn.AvgPool2d(kernel_size=2, stride=2),
        nn.Flatten(),
        nn.Linear(16 * 5 * 5, 120),
        nn.Sigmoid(),
        nn.Linear(120, 84),
        nn.Sigmoid(),
        nn.Linear(84, 10)
    )

    # Load the Fashion-MNIST dataset
    batch_size = 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size=batch_size)

    # Take one batch of input data
    data = next(iter(test_iter))
    X = data[0][:16]  # use the first 16 samples

    # Compute the activations of the first and second convolutional layers.
    # Each convolution is followed by its Sigmoid, so the slices end after it.
    with torch.no_grad():
        activation1 = net[:2](X)  # Conv2d -> Sigmoid
        activation2 = net[:5](X)  # ... -> AvgPool2d -> Conv2d -> Sigmoid

    # Print the shapes of the activations
    print("First-layer activations:", activation1.shape)
    print("Second-layer activations:", activation2.shape)

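The code above prints the shapes of the first-layer and second-layer activations of LeNet. Changing which samples are selected into X shows the activations for different inputs; here, samples from the test set are used as example inputs. Note that net is freshly constructed in this snippet, so the activations only reflect learned features once the network has been trained.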
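To compare specific classes such as sweaters and coats, the samples first have to be picked by label. The sketch below shows one way to do that in plain Python. The helper indices_for_classes and the sample label list are hypothetical, and the class names follow the usual Fashion-MNIST label order, in which sweaters correspond to "pullover" (label 2) and coats to "coat" (label 4).

```python
# Fashion-MNIST class names in label order (0 to 9)
FASHION_MNIST_LABELS = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat',
                        'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']


def indices_for_classes(labels, wanted_names, per_class=2):
    """Return, for each wanted class name, the first few positions where it occurs."""
    wanted_ids = {FASHION_MNIST_LABELS.index(n): n for n in wanted_names}
    picked = {n: [] for n in wanted_names}
    for i, label in enumerate(labels):
        name = wanted_ids.get(int(label))
        if name is not None and len(picked[name]) < per_class:
            picked[name].append(i)
    return picked


# Made-up labels standing in for one batch of the test set
batch_labels = [9, 2, 1, 1, 6, 1, 4, 6, 5, 7, 4, 5, 7, 3, 4, 1, 2]
picked = indices_for_classes(batch_labels, ['pullover', 'coat'])
print(picked)  # {'pullover': [1, 16], 'coat': [6, 10]}
```

With a real batch, the labels could be taken from the second element of the batch, and the returned positions used to index the image tensor before passing it through the first layers of the network.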

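🌊4. Reflections

Through the experiments in this course I studied convolutional neural networks in depth. Running the experiments with Python and the PyTorch deep learning framework gave me a much better understanding of how CNNs work and how they are applied in practice. Some of my takeaways follow.

First, the convolutional layer is one of the core components of a CNN and captures local features in an image effectively. In the experiments I explored different ways of extracting features by varying the size and number of convolution kernels. Smaller kernels capture finer-grained features, while larger kernels capture coarser, larger-scale features. Increasing the number of kernels raises the model's expressive power but also increases its computational cost.

Second, the pooling layer is another important component of a CNN. It reduces the size of the feature map while retaining the main features. I tried different pooling methods, including max pooling and average pooling, and observed their effect on model performance. Max pooling retained the main features of the image better and improved the robustness of the model to some extent.

I also studied some key techniques used in convolutional neural networks, such as batch normalization and residual connections. Batch normalization speeds up training and makes it more stable, while residual connections mitigate the vanishing-gradient problem in deep networks and improve accuracy. I applied these techniques in the experiments and found that they did improve model performance.

During the experiments I came to appreciate how well CNNs extract and represent features from image data. By combining convolutional and pooling layers, the model captures both local and global features, and stacking several convolutional layers extracts higher-level features. Parameter sharing and local receptive fields also give CNNs a degree of translation invariance and a spatial hierarchy, which makes them well suited to image data with spatial structure.

Through these experiments I not only deepened my theoretical understanding of CNNs but also learned how to apply the theory in practice. By writing code, training models, and analyzing the results, I gradually picked up the practical skills of working with CNNs.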

- Original article: https://blog.csdn.net/m0_57532432/article/details/139480662