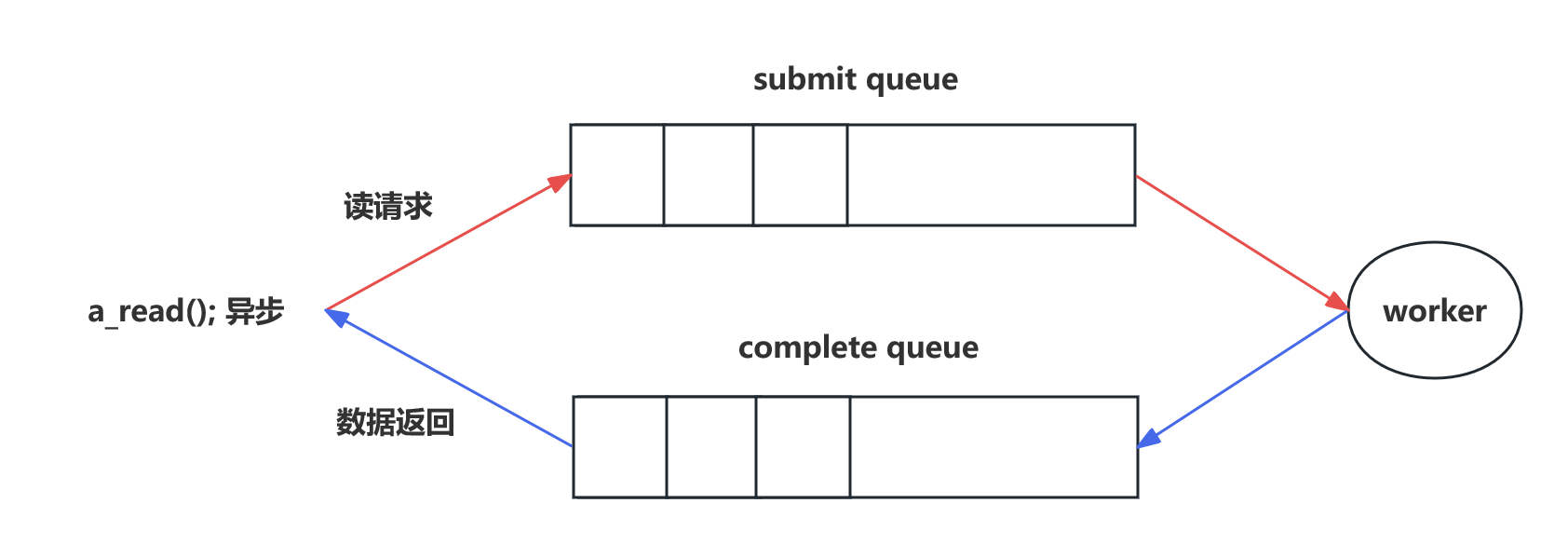

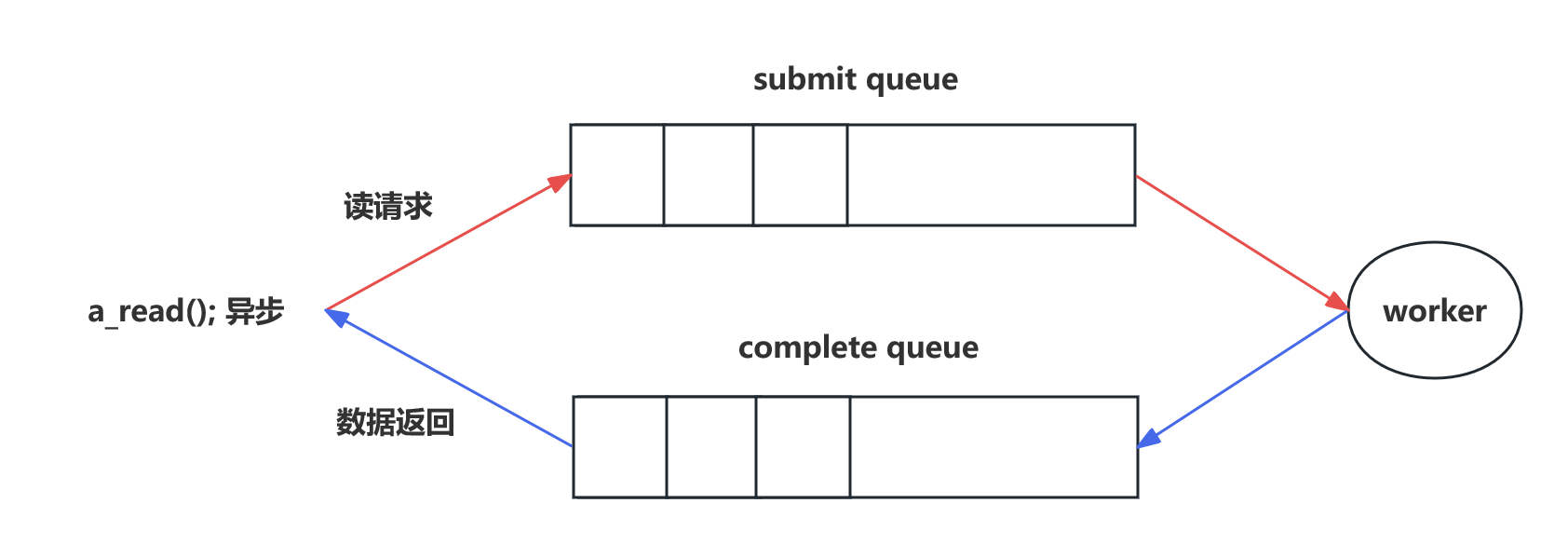

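I. How io_uring works

- How does it avoid frequent copying? By mapping memory with mmap.

- The submission queue (SQ) and the completion queue (CQ) live in memory that is mapped with mmap and shared between user space and the kernel, so entries do not have to be copied from one side to the other.

- How is it made thread-safe? With lock-free ring queues.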
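To make the "lock-free ring queue" idea concrete, here is a minimal single-producer, single-consumer ring in C11 atomics. It is only a sketch of the general technique: the names `spsc_ring`, `ring_push`, and `ring_pop` are made up for this example, and the layout is not the kernel's actual SQ/CQ layout.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stdint.h>

#define RING_CAP 8u /* capacity; a power of two lets us mask instead of using modulo */
static_assert((RING_CAP & (RING_CAP - 1)) == 0, "RING_CAP must be a power of two");

/* Hypothetical ring: one thread only pushes, one thread only pops. */
struct spsc_ring {
    _Atomic uint32_t head; /* next slot to read; written only by the consumer */
    _Atomic uint32_t tail; /* next slot to write; written only by the producer */
    int slots[RING_CAP];
};

static void ring_init(struct spsc_ring *r) {
    atomic_init(&r->head, 0);
    atomic_init(&r->tail, 0);
}

/* Producer side. Returns false when the ring is full. */
static bool ring_push(struct spsc_ring *r, int value) {
    uint32_t tail = atomic_load_explicit(&r->tail, memory_order_relaxed);
    uint32_t head = atomic_load_explicit(&r->head, memory_order_acquire);
    if (tail - head == RING_CAP)
        return false;
    r->slots[tail & (RING_CAP - 1)] = value;
    /* Release: the slot write becomes visible before the new tail does. */
    atomic_store_explicit(&r->tail, tail + 1, memory_order_release);
    return true;
}

/* Consumer side. Returns false when the ring is empty. */
static bool ring_pop(struct spsc_ring *r, int *out) {
    uint32_t head = atomic_load_explicit(&r->head, memory_order_relaxed);
    uint32_t tail = atomic_load_explicit(&r->tail, memory_order_acquire);
    if (head == tail)
        return false;
    *out = r->slots[head & (RING_CAP - 1)];
    /* Release: the slot is handed back to the producer only after it was read. */
    atomic_store_explicit(&r->head, head + 1, memory_order_release);
    return true;
}
```

Because each index has exactly one writer, no lock is needed; the acquire/release pairs are what keep the slot contents and the indices consistent between the two sides.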

II. Using io_uring

- The kernel provides three system calls for io_uring: io_uring_setup, io_uring_enter, and io_uring_register.

- A ready-made library that wraps them: liburing.
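For a feel of what liburing hides, the sketch below calls io_uring_setup directly through `syscall(2)`. It assumes a Linux system whose kernel headers ship `<linux/io_uring.h>` and define `__NR_io_uring_setup`; the helper name `ring_setup_raw` is made up for this example. It only creates the ring and does not mmap the queues or submit anything.

```c
#include <assert.h>
#include <errno.h>
#include <linux/io_uring.h>
#include <string.h>
#include <sys/syscall.h>
#include <unistd.h>

/* Hypothetical helper: create a ring with `entries` slots via the raw syscall.
 * Returns the ring fd (>= 0) on success, or -errno on failure, for example
 * when the kernel is too old or io_uring is disabled in a sandbox. */
static int ring_setup_raw(unsigned entries, struct io_uring_params *p) {
    memset(p, 0, sizeof(*p)); /* no flags: default ring configuration */
    long fd = syscall(__NR_io_uring_setup, entries, p);
    return fd < 0 ? -errno : (int)fd;
}
```

On success the kernel fills in `p->sq_entries` and `p->cq_entries` together with the offsets a program would need to mmap the rings by hand, which is the part liburing's queue-init functions take care of.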

III. Installing liburing

    git clone https://github.com/axboe/liburing.git
    cd liburing
    ./configure
    make
    sudo make install

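IV. Implementing a TCP server with io_uring

- With io_uring, an EVENT_READ completion means the data has already been read into the buffer.

- With epoll, EPOLLIN only means the data is ready to be read.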
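The epoll half of that comparison can be sketched with a pipe. The helper name `epoll_then_read` is made up for this example. After `epoll_wait` reports EPOLLIN the buffer is still empty, and the program has to call `read` itself; with io_uring the completion arrives after the kernel has already filled the buffer.

```c
#include <assert.h>
#include <string.h>
#include <sys/epoll.h>
#include <unistd.h>

/* Hypothetical demo: write "ping" into a pipe, wait for EPOLLIN, then read.
 * Returns the number of bytes read, or -1 on any error. */
static int epoll_then_read(char *out, size_t cap) {
    int fds[2];
    if (pipe(fds) < 0)
        return -1;

    int result = -1;
    int epfd = epoll_create1(0);
    if (epfd >= 0) {
        struct epoll_event ev = { .events = EPOLLIN, .data.fd = fds[0] };
        struct epoll_event ready;
        if (epoll_ctl(epfd, EPOLL_CTL_ADD, fds[0], &ev) == 0 &&
            write(fds[1], "ping", 4) == 4 &&
            epoll_wait(epfd, &ready, 1, 1000) == 1 &&
            (ready.events & EPOLLIN)) {
            /* Readiness only: `out` has not been touched yet. Reading is our job. */
            result = (int)read(fds[0], out, cap);
        }
        close(epfd);
    }
    close(fds[0]);
    close(fds[1]);
    return result;
}
```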

The complete server source:

    /* Build (the file name is an assumption): gcc server.c -o server -luring */
    #include <liburing.h>
    #include <netinet/in.h>
    #include <stdio.h>
    #include <string.h>
    #include <unistd.h>

    #define EVENT_ACCEPT 0
    #define EVENT_READ   1
    #define EVENT_WRITE  2

    /* Per-request context. It is 8 bytes, so it fits in the 64-bit user_data field. */
    struct conn_info {
        int fd;
        int event;
    };

    /* Create a TCP socket listening on `port`. Returns the fd, or -1 on error. */
    int init_server(unsigned short port) {
        int sockfd = socket(AF_INET, SOCK_STREAM, 0);
        if (sockfd < 0) {
            perror("socket");
            return -1;
        }

        struct sockaddr_in serveraddr;
        memset(&serveraddr, 0, sizeof(serveraddr));
        serveraddr.sin_family = AF_INET;
        serveraddr.sin_addr.s_addr = htonl(INADDR_ANY);
        serveraddr.sin_port = htons(port);

        if (-1 == bind(sockfd, (struct sockaddr*)&serveraddr, sizeof(serveraddr))) {
            perror("bind");
            close(sockfd);
            return -1;
        }
        if (-1 == listen(sockfd, 10)) {
            perror("listen");
            close(sockfd);
            return -1;
        }
        return sockfd;
    }

    #define ENTRIES_LENGTH 1024
    #define BUFFER_LENGTH  1024

    /* Queue a recv request; its completion is tagged EVENT_READ. */
    int set_event_recv(struct io_uring *ring, int connfd, void *buf, size_t len, int flags) {
        struct io_uring_sqe *sqe = io_uring_get_sqe(ring);
        if (sqe == NULL) return -1; /* submission queue is full */

        struct conn_info info = { .fd = connfd, .event = EVENT_READ };
        io_uring_prep_recv(sqe, connfd, buf, len, flags);
        memcpy(&sqe->user_data, &info, sizeof(info));
        return 0;
    }

    /* Queue a send request; its completion is tagged EVENT_WRITE. */
    int set_event_send(struct io_uring *ring, int connfd, void *buf, size_t len, int flags) {
        struct io_uring_sqe *sqe = io_uring_get_sqe(ring);
        if (sqe == NULL) return -1;

        struct conn_info info = { .fd = connfd, .event = EVENT_WRITE };
        io_uring_prep_send(sqe, connfd, buf, len, flags);
        memcpy(&sqe->user_data, &info, sizeof(info));
        return 0;
    }

    /* Queue an accept request; its completion is tagged EVENT_ACCEPT. */
    int set_event_accept(struct io_uring *ring, int sockfd, struct sockaddr *addr, socklen_t *addrlen, int flags) {
        struct io_uring_sqe *sqe = io_uring_get_sqe(ring);
        if (sqe == NULL) return -1;

        struct conn_info info = { .fd = sockfd, .event = EVENT_ACCEPT };
        io_uring_prep_accept(sqe, sockfd, addr, addrlen, flags);
        memcpy(&sqe->user_data, &info, sizeof(info));
        return 0;
    }

    int main(int argc, char *argv[]) {
        unsigned short port = 9999;
        int sockfd = init_server(port);
        if (sockfd < 0) return 1;

        struct io_uring_params params;
        memset(&params, 0, sizeof(params));

        struct io_uring ring;
        if (io_uring_queue_init_params(ENTRIES_LENGTH, &ring, &params) < 0) {
            fprintf(stderr, "io_uring_queue_init_params failed\n");
            return 1;
        }

        struct sockaddr_in clientaddr;
        socklen_t len = sizeof(clientaddr);
        set_event_accept(&ring, sockfd, (struct sockaddr*)&clientaddr, &len, 0);

        /* One buffer shared by all connections: fine for a demo, not for concurrent clients. */
        char buffer[BUFFER_LENGTH] = {0};

        while (1) {
            io_uring_submit(&ring);

            /* Block until at least one completion is available, then drain a batch. */
            struct io_uring_cqe *cqe;
            io_uring_wait_cqe(&ring, &cqe);

            struct io_uring_cqe *cqes[128];
            int nready = io_uring_peek_batch_cqe(&ring, cqes, 128);

            for (int i = 0; i < nready; i++) {
                struct io_uring_cqe *entries = cqes[i];
                struct conn_info result;
                memcpy(&result, &entries->user_data, sizeof(result));

                if (result.event == EVENT_ACCEPT) {
                    /* Re-arm accept, then start reading from the new connection. */
                    len = sizeof(clientaddr);
                    set_event_accept(&ring, sockfd, (struct sockaddr*)&clientaddr, &len, 0);
                    printf("set_event_accept\n");

                    int connfd = entries->res; /* new fd, or -errno on failure */
                    if (connfd >= 0) {
                        set_event_recv(&ring, connfd, buffer, BUFFER_LENGTH, 0);
                    }
                } else if (result.event == EVENT_READ) {
                    int ret = entries->res; /* bytes already placed in buffer */
                    printf("set_event_recv ret: %d, %.*s\n", ret, ret > 0 ? ret : 0, buffer);

                    if (ret <= 0) {
                        close(result.fd); /* peer closed the connection, or recv failed */
                    } else {
                        set_event_send(&ring, result.fd, buffer, ret, 0); /* echo back */
                    }
                } else if (result.event == EVENT_WRITE) {
                    int ret = entries->res;
                    printf("set_event_send ret: %d, %.*s\n", ret, ret > 0 ? ret : 0, buffer);

                    set_event_recv(&ring, result.fd, buffer, BUFFER_LENGTH, 0);
                }
            }

            /* Mark the whole batch as consumed so the kernel can reuse the CQ slots. */
            io_uring_cq_advance(&ring, nready);
        }
    }
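The server relies on one trick worth isolating: `struct conn_info` is copied byte for byte into the 64-bit `user_data` field of the request and copied back out of the completion. A minimal sketch of that round trip follows, using a plain `uint64_t` as a stand-in for `sqe->user_data` and `cqe->user_data`. The helper names are made up for this example, and the size check assumes `int` is 4 bytes, as on common Linux targets.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

struct conn_info {
    int fd;
    int event;
};

/* The trick only works while the struct fits in the 64-bit field. */
static_assert(sizeof(struct conn_info) <= sizeof(uint64_t),
              "conn_info must fit in user_data");

/* Hypothetical helper: what the server does when it prepares a request. */
static uint64_t pack_conn_info(struct conn_info info) {
    uint64_t user_data = 0;
    memcpy(&user_data, &info, sizeof(info));
    return user_data;
}

/* Hypothetical helper: what the server does when it handles a completion. */
static struct conn_info unpack_conn_info(uint64_t user_data) {
    struct conn_info info;
    memcpy(&info, &user_data, sizeof(info));
    return info;
}
```

If the per-connection state ever grows past 8 bytes (a private buffer per client, for instance), a common alternative is to heap-allocate the state and store the pointer in `user_data` instead.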


V. QPS test

    /* Build (the file name is an assumption): gcc qps_client.c -o qps_client -lpthread */
    #include <arpa/inet.h>
    #include <pthread.h>
    #include <stdio.h>
    #include <stdlib.h>
    #include <string.h>
    #include <sys/socket.h>
    #include <sys/time.h>
    #include <unistd.h>

    typedef struct test_context_s {
        char serverip[16];
        int port;
        int threadnum;
        int connection;
        int requestion;
        int failed;
    } test_context_t;

    /* Connect to ip:port. Returns the connected fd, or -1 on error. */
    int connect_tcpserver(const char *ip, unsigned short port) {
        int connfd = socket(AF_INET, SOCK_STREAM, 0);
        if (connfd < 0) {
            perror("socket");
            return -1;
        }

        struct sockaddr_in tcpserver_addr;
        memset(&tcpserver_addr, 0, sizeof(struct sockaddr_in));
        tcpserver_addr.sin_family = AF_INET;
        tcpserver_addr.sin_addr.s_addr = inet_addr(ip);
        tcpserver_addr.sin_port = htons(port);

        int ret = connect(connfd, (struct sockaddr*)&tcpserver_addr, sizeof(struct sockaddr_in));
        if (ret) {
            perror("connect");
            close(connfd);
            return -1;
        }
        return connfd;
    }

    #define TIME_SUB_MS(tv1, tv2) ((tv1.tv_sec - tv2.tv_sec) * 1000 + (tv1.tv_usec - tv2.tv_usec) / 1000)
    #define TEST_MESSAGE "ABCDEFGHIJKLMNOPQRSTUVWXYZ1234567890abcdefghijklmnopqrstuvwxyz\r\n"
    #define RBUFFER_LENGTH 2048
    #define WBUFFER_LENGTH 2048

    /* Send four copies of TEST_MESSAGE and check that the server echoes them back.
     * Assumes the echo arrives in a single recv, which is good enough for a local test. */
    int send_recv_tcppkt(int fd) {
        char wbuffer[WBUFFER_LENGTH] = {0};
        for (int i = 0; i < 4; i++) {
            strcpy(wbuffer + i * strlen(TEST_MESSAGE), TEST_MESSAGE);
        }

        int res = send(fd, wbuffer, strlen(wbuffer), 0);
        if (res < 0) {
            exit(1);
        }

        char rbuffer[RBUFFER_LENGTH] = {0};
        res = recv(fd, rbuffer, RBUFFER_LENGTH - 1, 0); /* keep the final byte as NUL */
        if (res <= 0) {
            exit(1);
        }

        if (strcmp(rbuffer, wbuffer) != 0) {
            printf("failed: '%s' != '%s'\n", rbuffer, wbuffer);
            return -1;
        }
        return 0;
    }

    /* Thread entry: one connection per thread, each sending requestion / threadnum requests. */
    static void *test_qps_entry(void *arg) {
        test_context_t *pctx = (test_context_t*)arg;

        int connfd = connect_tcpserver(pctx->serverip, pctx->port);
        if (connfd < 0) {
            printf("connect_tcp_server failed\n");
            return NULL;
        }

        int count = pctx->requestion / pctx->threadnum;
        int i = 0;
        int res;

        while (i++ < count) {
            res = send_recv_tcppkt(connfd);
            if (res != 0) {
                printf("send_recv_tcppkt failed\n");
                /* The context is shared by all threads, so count atomically (GCC/Clang builtin). */
                __sync_fetch_and_add(&pctx->failed, 1);
                continue;
            }
        }

        close(connfd);
        return NULL;
    }

    int main(int argc, char *argv[]) {
        int ret = 0;
        test_context_t ctx = {0};

        int opt;
        while ((opt = getopt(argc, argv, "s:p:t:c:n:?")) != -1) {
            switch (opt) {
                case 's':
                    printf("-s: %s\n", optarg);
                    /* Bounded copy: serverip holds at most 15 characters plus NUL. */
                    snprintf(ctx.serverip, sizeof(ctx.serverip), "%s", optarg);
                    break;
                case 'p':
                    printf("-p: %s\n", optarg);
                    ctx.port = atoi(optarg);
                    break;
                case 't':
                    printf("-t: %s\n", optarg);
                    ctx.threadnum = atoi(optarg);
                    break;
                case 'c':
                    printf("-c: %s\n", optarg);
                    ctx.connection = atoi(optarg);
                    break;
                case 'n':
                    printf("-n: %s\n", optarg);
                    ctx.requestion = atoi(optarg);
                    break;
                default:
                    return -1;
            }
        }

        if (ctx.threadnum <= 0) {
            fprintf(stderr, "-t must be a positive thread count\n");
            return -1;
        }

        pthread_t *ptid = malloc(ctx.threadnum * sizeof(pthread_t));
        if (ptid == NULL) {
            perror("malloc");
            return -1;
        }

        struct timeval tv_begin;
        gettimeofday(&tv_begin, NULL);

        for (int i = 0; i < ctx.threadnum; i++) {
            pthread_create(&ptid[i], NULL, test_qps_entry, &ctx);
        }
        for (int i = 0; i < ctx.threadnum; i++) {
            pthread_join(ptid[i], NULL);
        }

        struct timeval tv_end;
        gettimeofday(&tv_end, NULL);

        int time_used = TIME_SUB_MS(tv_end, tv_begin);
        if (time_used <= 0) time_used = 1; /* avoid dividing by zero on very short runs */

        /* Widen before multiplying so a large -n does not overflow int. */
        printf("success: %d, failed: %d, time_used: %d, qps: %lld\n",
               ctx.requestion - ctx.failed, ctx.failed, time_used,
               (long long)ctx.requestion * 1000 / time_used);

        free(ptid);
        return ret;
    }
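The timing arithmetic at the end of the client can be pulled out into two small helpers, sketched below. The names `elapsed_ms` and `compute_qps` are made up for this example. They do the same calculation as TIME_SUB_MS and the final `printf`, but in 64-bit arithmetic and with a guard for a zero-length run.

```c
#include <assert.h>
#include <sys/time.h>

/* Milliseconds between two gettimeofday() samples. The microsecond term may be
 * negative when `end` is earlier within its second; the sum is still correct. */
static long long elapsed_ms(struct timeval begin, struct timeval end) {
    return (end.tv_sec - begin.tv_sec) * 1000LL +
           (end.tv_usec - begin.tv_usec) / 1000;
}

/* Requests per second. Returns 0 when no measurable time has passed. */
static long long compute_qps(long long requests, long long ms) {
    if (ms <= 0)
        return 0;
    return requests * 1000 / ms;
}
```

For example, one million requests completed in 2500 ms give `compute_qps(1000000, 2500) == 400000`.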
