Implementing Multi-Class Image Classification with a Convolutional Neural Network (CNN)

I. Preliminaries

1. Set up the GPU (skip this step if you are running on a CPU)

My environment:

- Language: Python 3.6.5

- Editor: Jupyter Notebook

- Deep learning framework: TensorFlow 2.4.1

    import tensorflow as tf

    gpus = tf.config.list_physical_devices("GPU")

    if gpus:
        gpu0 = gpus[0]  # If several GPUs are present, use only GPU 0
        tf.config.experimental.set_memory_growth(gpu0, True)  # Allocate GPU memory on demand
        tf.config.set_visible_devices([gpu0], "GPU")

2. Load the data

    import tensorflow as tf
    from tensorflow.keras import datasets, layers, models
    import matplotlib.pyplot as plt

    (train_images, train_labels), (test_images, test_labels) = datasets.cifar10.load_data()

3. Normalization

    # Scale pixel values to the range [0, 1].
    train_images, test_images = train_images / 255.0, test_images / 255.0

    train_images.shape, test_images.shape, train_labels.shape, test_labels.shape

4. Visualization

    class_names = ['airplane', 'automobile', 'bird', 'cat', 'deer',
                   'dog', 'frog', 'horse', 'ship', 'truck']

    plt.figure(figsize=(20, 10))
    for i in range(20):
        plt.subplot(5, 10, i + 1)
        plt.xticks([])
        plt.yticks([])
        plt.grid(False)
        plt.imshow(train_images[i], cmap=plt.cm.binary)
        plt.xlabel(class_names[train_labels[i][0]])
    plt.show()

II. Building the CNN Model

    model = models.Sequential([
        layers.Conv2D(32, (3, 3), activation='relu', input_shape=(32, 32, 3)),  # Conv layer 1, 3x3 kernel
        layers.MaxPooling2D((2, 2)),                   # Pooling layer 1, 2x2 downsampling
        layers.Conv2D(64, (3, 3), activation='relu'),  # Conv layer 2, 3x3 kernel
        layers.MaxPooling2D((2, 2)),                   # Pooling layer 2, 2x2 downsampling
        layers.Conv2D(64, (3, 3), activation='relu'),  # Conv layer 3, 3x3 kernel

        layers.Flatten(),                              # Flatten layer, bridges the conv and dense layers
        layers.Dense(64, activation='relu'),           # Fully connected layer, further feature extraction
        layers.Dense(10)                               # Output layer, one raw score (logit) per class
    ])

    model.summary()  # Print the network structure

    Model: "sequential"
    _________________________________________________________________
    Layer (type)                 Output Shape              Param #
    =================================================================
    conv2d (Conv2D)              (None, 30, 30, 32)        896
    _________________________________________________________________
    max_pooling2d (MaxPooling2D) (None, 15, 15, 32)        0
    _________________________________________________________________
    conv2d_1 (Conv2D)            (None, 13, 13, 64)        18496
    _________________________________________________________________
    max_pooling2d_1 (MaxPooling2 (None, 6, 6, 64)          0
    _________________________________________________________________
    conv2d_2 (Conv2D)            (None, 4, 4, 64)          36928
    _________________________________________________________________
    flatten (Flatten)            (None, 1024)              0
    _________________________________________________________________
    dense (Dense)                (None, 64)                65600
    _________________________________________________________________
    dense_1 (Dense)              (None, 10)                650
    =================================================================
    Total params: 122,570
    Trainable params: 122,570
    Non-trainable params: 0
    _________________________________________________________________
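The output shapes and parameter counts in the summary can be checked by hand. Here is a minimal sketch in plain Python, assuming stride-1 convolutions without padding (the Keras defaults for the `Conv2D` calls above) and non-overlapping 2x2 pooling. The helper names `conv2d_valid`, `max_pool`, `dense` and `summarize` are made up for this illustration and are not part of Keras.

```python
def conv2d_valid(h, w, c_in, filters, k=3):
    """Shape and parameter count of a stride-1, unpadded k x k convolution."""
    params = (k * k * c_in + 1) * filters  # k*k*c_in weights plus 1 bias per filter
    return (h - k + 1, w - k + 1, filters), params


def max_pool(h, w, c, p=2):
    """Shape after p x p max pooling; pooling has no trainable parameters."""
    return (h // p, w // p, c), 0


def dense(n_in, units):
    """Shape and parameter count of a fully connected layer."""
    return (units,), (n_in + 1) * units  # n_in weights plus 1 bias per unit


def summarize(input_shape=(32, 32, 3)):
    """Walk the same layer stack as the model above and collect (name, shape, params)."""
    rows = []
    shape, p = conv2d_valid(*input_shape, filters=32)
    rows.append(("conv2d", shape, p))
    shape, p = max_pool(*shape)
    rows.append(("max_pooling2d", shape, p))
    shape, p = conv2d_valid(*shape, filters=64)
    rows.append(("conv2d_1", shape, p))
    shape, p = max_pool(*shape)
    rows.append(("max_pooling2d_1", shape, p))
    shape, p = conv2d_valid(*shape, filters=64)
    rows.append(("conv2d_2", shape, p))
    flat = shape[0] * shape[1] * shape[2]
    rows.append(("flatten", (flat,), 0))
    shape, p = dense(flat, 64)
    rows.append(("dense", shape, p))
    shape, p = dense(64, 10)
    rows.append(("dense_1", shape, p))
    return rows


rows = summarize()
for name, shape, params in rows:
    print(f"{name:<16} {str(shape):<14} {params}")
total_params = sum(params for _, _, params in rows)
print("Total params:", total_params)
```

Most of the parameters sit in the first dense layer (1024 x 64 weights plus 64 biases), not in the convolutions.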

III. Compiling the Model

    model.compile(optimizer='adam',
                  loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
                  metrics=['accuracy'])
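The last layer of the model is `Dense(10)` with no activation, so it emits raw scores (logits). That is why the loss is created with `from_logits=True`: the softmax is applied inside the loss. The sketch below shows the per-sample math in plain Python. It illustrates the formula only and is not how Keras implements it; the function name is hypothetical.

```python
import math


def sparse_ce_from_logits(logits, label):
    """Cross-entropy for one sample: -log(softmax(logits)[label]).

    `label` is an integer class index, which is what "sparse" means here.
    """
    m = max(logits)  # subtract the max before exponentiating for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


# With identical scores for all 10 classes the model is guessing uniformly,
# so the loss equals ln(10).
uniform_loss = sparse_ce_from_logits([0.0] * 10, label=3)
print(uniform_loss)

# A large score on the correct class gives a small loss; on a wrong class, a large one.
confident = [8.0] + [0.0] * 9
print(sparse_ce_from_logits(confident, label=0))
print(sparse_ce_from_logits(confident, label=1))
```

The uniform-guess value ln(10), roughly 2.30, is a useful baseline: a training loss near that level means the network has not learned anything yet.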

IV. Training the Model

    history = model.fit(train_images, train_labels, epochs=10,
                        validation_data=(test_images, test_labels))

    Epoch 1/10
    1563/1563 [==============================] - 9s 4ms/step - loss: 1.7862 - accuracy: 0.3390 - val_loss: 1.2697 - val_accuracy: 0.5406
    Epoch 2/10
    1563/1563 [==============================] - 5s 3ms/step - loss: 1.2270 - accuracy: 0.5595 - val_loss: 1.0731 - val_accuracy: 0.6167
    Epoch 3/10
    1563/1563 [==============================] - 5s 3ms/step - loss: 1.0355 - accuracy: 0.6337 - val_loss: 0.9678 - val_accuracy: 0.6610
    Epoch 4/10
    1563/1563 [==============================] - 5s 3ms/step - loss: 0.9221 - accuracy: 0.6727 - val_loss: 0.9589 - val_accuracy: 0.6648
    Epoch 5/10
    1563/1563 [==============================] - 5s 3ms/step - loss: 0.8474 - accuracy: 0.7022 - val_loss: 0.8962 - val_accuracy: 0.6853
    Epoch 6/10
    1563/1563 [==============================] - 5s 3ms/step - loss: 0.7814 - accuracy: 0.7292 - val_loss: 0.9124 - val_accuracy: 0.6873
    Epoch 7/10
    1563/1563 [==============================] - 5s 3ms/step - loss: 0.7398 - accuracy: 0.7398 - val_loss: 0.8924 - val_accuracy: 0.6929
    Epoch 8/10
    1563/1563 [==============================] - 5s 3ms/step - loss: 0.7008 - accuracy: 0.7542 - val_loss: 0.9809 - val_accuracy: 0.6854
    Epoch 9/10
    1563/1563 [==============================] - 5s 3ms/step - loss: 0.6474 - accuracy: 0.7732 - val_loss: 0.8549 - val_accuracy: 0.7137
    Epoch 10/10
    1563/1563 [==============================] - 5s 3ms/step - loss: 0.6041 - accuracy: 0.7889 - val_loss: 0.8909 - val_accuracy: 0.7046
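The `1563/1563` in the progress bar counts batches, not images. The call to `model.fit` does not pass `batch_size`, so Keras uses its default of 32, and the CIFAR-10 training set has 50,000 images. A quick check of the arithmetic (the helper name is made up for this sketch):

```python
import math


def steps_per_epoch(num_samples, batch_size=32):
    """Number of batches needed to see every sample once; the last batch may be smaller."""
    return math.ceil(num_samples / batch_size)


train_steps = steps_per_epoch(50_000)  # CIFAR-10 training set
test_steps = steps_per_epoch(10_000)   # CIFAR-10 test set
print(train_steps, test_steps)  # 1563 313
```

The second number matches the `313/313` that `model.predict` reports on the 10,000 test images in the prediction step.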

V. Prediction

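The model outputs one score per class for each image. Because the output layer has no softmax, these scores are logits, not normalized probabilities, but the ordering is the same: the larger the score, the more likely the image belongs to that class.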

    plt.imshow(test_images[10])

Print the predicted class for the test image at index 10 (the image shown above):

    import numpy as np

    pre = model.predict(test_images)
    print(class_names[np.argmax(pre[10])])

    313/313 [==============================] - 1s 3ms/step
    airplane

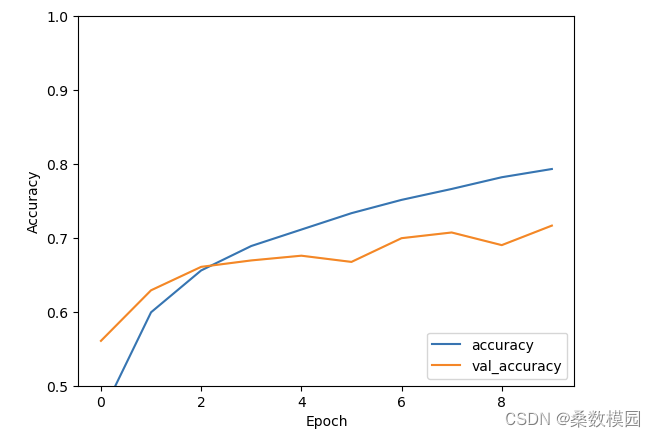
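Since the output layer is `Dense(10)` without an activation, each row returned by `model.predict` holds raw scores. To read them as probabilities they have to pass through a softmax first. Here is a minimal plain-Python sketch; the `example_logits` values are invented for illustration and are not output from the trained model.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that are positive and sum to 1."""
    m = max(logits)  # shift by the max so exp() cannot overflow
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class_names = ['airplane', 'automobile', 'bird', 'cat', 'deer',
               'dog', 'frog', 'horse', 'ship', 'truck']

# Hypothetical scores for one image (made up, not real model output).
example_logits = [4.1, -1.2, 0.3, -0.5, 0.0, -1.8, -2.0, -0.7, 2.2, -0.9]

probs = softmax(example_logits)
best = max(range(len(probs)), key=probs.__getitem__)  # same index as argmax of the logits
print(class_names[best], round(probs[best], 3))
```

Softmax preserves the ordering of the scores, so `np.argmax` on the raw output picks the same class. In TensorFlow the same conversion is available as `tf.nn.softmax`.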

VI. Model Evaluation

    import matplotlib.pyplot as plt

    plt.plot(history.history['accuracy'], label='accuracy')
    plt.plot(history.history['val_accuracy'], label='val_accuracy')
    plt.xlabel('Epoch')
    plt.ylabel('Accuracy')
    plt.ylim([0.5, 1])
    plt.legend(loc='lower right')
    plt.show()

    test_loss, test_acc = model.evaluate(test_images, test_labels, verbose=2)

    print(test_acc)

    0.7166000008583069
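Besides plotting, the per-epoch numbers can be inspected directly. The sketch below copies the values from the training log in section IV into plain lists; in a live session they would come from `history.history` instead. It finds the epoch with the lowest validation loss and the gap between training and validation accuracy at the end of training.

```python
# Values copied from the training log shown earlier (epochs 1 to 10).
val_loss = [1.2697, 1.0731, 0.9678, 0.9589, 0.8962,
            0.9124, 0.8924, 0.9809, 0.8549, 0.8909]
val_acc = [0.5406, 0.6167, 0.6610, 0.6648, 0.6853,
           0.6873, 0.6929, 0.6854, 0.7137, 0.7046]
train_acc = [0.3390, 0.5595, 0.6337, 0.6727, 0.7022,
             0.7292, 0.7398, 0.7542, 0.7732, 0.7889]

# Epoch numbers are 1-based in the log, list indices are 0-based.
best_epoch = min(range(len(val_loss)), key=val_loss.__getitem__) + 1

# Difference between training and validation accuracy after the last epoch.
final_gap = train_acc[-1] - val_acc[-1]

print(best_epoch, round(final_gap, 4))  # 9 0.0843
```

Training accuracy keeps rising while validation accuracy levels off around 0.70, which suggests the model is starting to overfit by the last few epochs.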


- Original post: https://blog.csdn.net/weixin_45822638/article/details/134452430