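Exploring Cloud-Native Technology, Container Orchestration Engines: Setting Up Common Visual Management Tools for Kubernetes

Setting up common visual management tools for Kubernetes

- Note: this guide installs the tools on Kubernetes 1.21.10. A much newer or much older cluster version may cause errors.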

Option 1: Install kubernetes-dashboard (v2.4.0) on Kubernetes (v1.21.10)

- Version compatibility of kubernetes-dashboard v2.4.0:

- Note: kubernetes-dashboard v2.4.0 targets Kubernetes 1.20 and 1.21. Other versions may not have been adapted.
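The compatibility note above can be turned into a quick pre-flight check. The following is only a minimal sketch: the function name `dashboard_v240_supported` is hypothetical, and it encodes nothing beyond the 1.20/1.21 range stated in this article.

```shell
# Hypothetical helper: succeeds (exit status 0) when the given Kubernetes
# version is 1.20.x or 1.21.x, the range this article names for Dashboard v2.4.0.
dashboard_v240_supported() {
  version=${1#v}          # strip an optional leading "v"
  major=${version%%.*}    # text before the first dot
  rest=${version#*.}      # text after the first dot
  minor=${rest%%.*}       # text between the first and second dot
  [ "$major" = "1" ] && { [ "$minor" = "20" ] || [ "$minor" = "21" ]; }
}

# Example use, with the version string taken from your own cluster:
#   dashboard_v240_supported v1.21.10 && echo "version is in the stated range"
```

Passing the version as an argument keeps the check independent of how you obtain it, for example from the VERSION column of `kubectl get nodes`.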

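Configure the YAML file

- 1: Create the configuration file. (You can also start from the manifest on GitHub, but it must be modified before use.)

      vi recommended.yaml

- Its contents are as follows. (This manifest has been adjusted for this guide; it is not the unmodified GitHub version.)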

      # Copyright 2017 The Kubernetes Authors.
      #
      # Licensed under the Apache License, Version 2.0 (the "License");
      # you may not use this file except in compliance with the License.
      # You may obtain a copy of the License at
      #
      #     http://www.apache.org/licenses/LICENSE-2.0
      #
      # Unless required by applicable law or agreed to in writing, software
      # distributed under the License is distributed on an "AS IS" BASIS,
      # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      # See the License for the specific language governing permissions and
      # limitations under the License.

      apiVersion: v1
      kind: Namespace
      metadata:
        name: kubernetes-dashboard
      ---
      apiVersion: v1
      kind: ServiceAccount
      metadata:
        labels:
          k8s-app: kubernetes-dashboard
        name: kubernetes-dashboard
        namespace: kubernetes-dashboard
      ---
      kind: Service
      apiVersion: v1
      metadata:
        labels:
          k8s-app: kubernetes-dashboard
        name: kubernetes-dashboard
        namespace: kubernetes-dashboard
      spec:
        type: NodePort  # added
        ports:
          - port: 443
            targetPort: 8443
            nodePort: 30010  # added
        selector:
          k8s-app: kubernetes-dashboard
      ---
      apiVersion: v1
      kind: Secret
      metadata:
        labels:
          k8s-app: kubernetes-dashboard
        name: kubernetes-dashboard-certs
        namespace: kubernetes-dashboard
      type: Opaque
      ---
      apiVersion: v1
      kind: Secret
      metadata:
        labels:
          k8s-app: kubernetes-dashboard
        name: kubernetes-dashboard-csrf
        namespace: kubernetes-dashboard
      type: Opaque
      data:
        csrf: ""
      ---
      apiVersion: v1
      kind: Secret
      metadata:
        labels:
          k8s-app: kubernetes-dashboard
        name: kubernetes-dashboard-key-holder
        namespace: kubernetes-dashboard
      type: Opaque
      ---
      kind: ConfigMap
      apiVersion: v1
      metadata:
        labels:
          k8s-app: kubernetes-dashboard
        name: kubernetes-dashboard-settings
        namespace: kubernetes-dashboard
      ---
      kind: Role
      apiVersion: rbac.authorization.k8s.io/v1
      metadata:
        labels:
          k8s-app: kubernetes-dashboard
        name: kubernetes-dashboard
        namespace: kubernetes-dashboard
      rules:
        # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
        - apiGroups: [""]
          resources: ["secrets"]
          resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
          verbs: ["get", "update", "delete"]
          # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
        - apiGroups: [""]
          resources: ["configmaps"]
          resourceNames: ["kubernetes-dashboard-settings"]
          verbs: ["get", "update"]
          # Allow Dashboard to get metrics.
        - apiGroups: [""]
          resources: ["services"]
          resourceNames: ["heapster", "dashboard-metrics-scraper"]
          verbs: ["proxy"]
        - apiGroups: [""]
          resources: ["services/proxy"]
          resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
          verbs: ["get"]
      ---
      kind: ClusterRole
      apiVersion: rbac.authorization.k8s.io/v1
      metadata:
        labels:
          k8s-app: kubernetes-dashboard
        name: kubernetes-dashboard
      rules:
        # Allow Metrics Scraper to get metrics from the Metrics server
        - apiGroups: ["metrics.k8s.io"]
          resources: ["pods", "nodes"]
          verbs: ["get", "list", "watch"]
      ---
      apiVersion: rbac.authorization.k8s.io/v1
      kind: RoleBinding
      metadata:
        labels:
          k8s-app: kubernetes-dashboard
        name: kubernetes-dashboard
        namespace: kubernetes-dashboard
      roleRef:
        apiGroup: rbac.authorization.k8s.io
        kind: Role
        name: kubernetes-dashboard
      subjects:
        - kind: ServiceAccount
          name: kubernetes-dashboard
          namespace: kubernetes-dashboard
      ---
      apiVersion: rbac.authorization.k8s.io/v1
      kind: ClusterRoleBinding
      metadata:
        name: kubernetes-dashboard
      roleRef:
        apiGroup: rbac.authorization.k8s.io
        kind: ClusterRole
        name: kubernetes-dashboard
      subjects:
        - kind: ServiceAccount
          name: kubernetes-dashboard
          namespace: kubernetes-dashboard
      ---
      kind: Deployment
      apiVersion: apps/v1
      metadata:
        labels:
          k8s-app: kubernetes-dashboard
        name: kubernetes-dashboard
        namespace: kubernetes-dashboard
      spec:
        replicas: 1
        revisionHistoryLimit: 10
        selector:
          matchLabels:
            k8s-app: kubernetes-dashboard
        template:
          metadata:
            labels:
              k8s-app: kubernetes-dashboard
          spec:
            containers:
              - name: kubernetes-dashboard
                image: kubernetesui/dashboard:v2.4.0
                imagePullPolicy: Always
                ports:
                  - containerPort: 8443
                    protocol: TCP
                args:
                  - --auto-generate-certificates
                  - --namespace=kubernetes-dashboard
                  # Uncomment the following line to manually specify Kubernetes API server Host
                  # If not specified, Dashboard will attempt to auto discover the API server and connect
                  # to it. Uncomment only if the default does not work.
                  # - --apiserver-host=http://my-address:port
                volumeMounts:
                  - name: kubernetes-dashboard-certs
                    mountPath: /certs
                    # Create on-disk volume to store exec logs
                  - mountPath: /tmp
                    name: tmp-volume
                livenessProbe:
                  httpGet:
                    scheme: HTTPS
                    path: /
                    port: 8443
                  initialDelaySeconds: 30
                  timeoutSeconds: 30
                securityContext:
                  allowPrivilegeEscalation: false
                  readOnlyRootFilesystem: true
                  runAsUser: 1001
                  runAsGroup: 2001
            volumes:
              - name: kubernetes-dashboard-certs
                secret:
                  secretName: kubernetes-dashboard-certs
              - name: tmp-volume
                emptyDir: {}
            serviceAccountName: kubernetes-dashboard
            nodeSelector:
              "kubernetes.io/os": linux
            # Comment the following tolerations if Dashboard must not be deployed on master
            tolerations:
              - key: node-role.kubernetes.io/master
                effect: NoSchedule
      ---
      kind: Service
      apiVersion: v1
      metadata:
        labels:
          k8s-app: dashboard-metrics-scraper
        name: dashboard-metrics-scraper
        namespace: kubernetes-dashboard
      spec:
        ports:
          - port: 8000
            targetPort: 8000
        selector:
          k8s-app: dashboard-metrics-scraper
      ---
      kind: Deployment
      apiVersion: apps/v1
      metadata:
        labels:
          k8s-app: dashboard-metrics-scraper
        name: dashboard-metrics-scraper
        namespace: kubernetes-dashboard
      spec:
        replicas: 1
        revisionHistoryLimit: 10
        selector:
          matchLabels:
            k8s-app: dashboard-metrics-scraper
        template:
          metadata:
            labels:
              k8s-app: dashboard-metrics-scraper
          spec:
            securityContext:
              seccompProfile:
                type: RuntimeDefault
            containers:
              - name: dashboard-metrics-scraper
                image: kubernetesui/metrics-scraper:v1.0.7
                ports:
                  - containerPort: 8000
                    protocol: TCP
                livenessProbe:
                  httpGet:
                    scheme: HTTP
                    path: /
                    port: 8000
                  initialDelaySeconds: 30
                  timeoutSeconds: 30
                volumeMounts:
                  - mountPath: /tmp
                    name: tmp-volume
                securityContext:
                  allowPrivilegeEscalation: false
                  readOnlyRootFilesystem: true
                  runAsUser: 1001
                  runAsGroup: 2001
            serviceAccountName: kubernetes-dashboard
            nodeSelector:
              "kubernetes.io/os": linux
            # Comment the following tolerations if Dashboard must not be deployed on master
            tolerations:
              - key: node-role.kubernetes.io/master
                effect: NoSchedule
            volumes:
              - name: tmp-volume
                emptyDir: {}


- 2: Apply the configuration file:

      [root@k8s-master ~]# kubectl apply -f recommended.yaml
      namespace/kubernetes-dashboard created
      serviceaccount/kubernetes-dashboard created
      service/kubernetes-dashboard created
      secret/kubernetes-dashboard-certs created
      secret/kubernetes-dashboard-csrf created
      secret/kubernetes-dashboard-key-holder created
      configmap/kubernetes-dashboard-settings created
      role.rbac.authorization.k8s.io/kubernetes-dashboard created
      clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created
      rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created
      clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created
      deployment.apps/kubernetes-dashboard created
      service/dashboard-metrics-scraper created
      deployment.apps/dashboard-metrics-scraper created

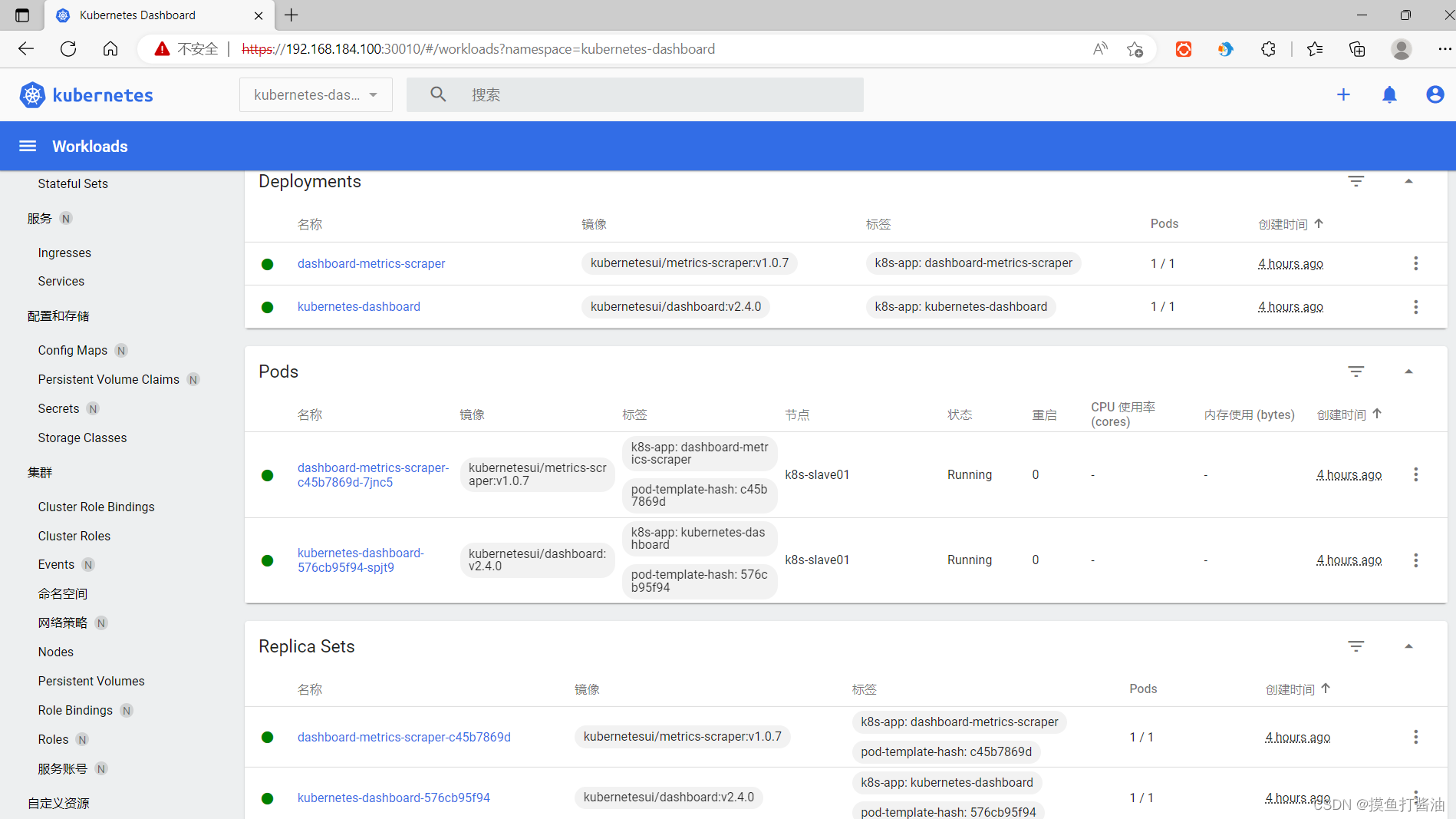

- 3: List the pods, services, and deployments in the kubernetes-dashboard namespace. (Wait until every dashboard pod is in the Running state.)

      [root@k8s-master ~]# kubectl get pod,service,deployment -n kubernetes-dashboard
      NAME                                            READY   STATUS    RESTARTS   AGE
      pod/dashboard-metrics-scraper-c45b7869d-7jnc5   1/1     Running   0          2m14s
      pod/kubernetes-dashboard-576cb95f94-spjt9       1/1     Running   0          2m14s

      NAME                                TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)         AGE
      service/dashboard-metrics-scraper   ClusterIP   10.96.116.190   <none>        8000/TCP        2m14s
      service/kubernetes-dashboard        NodePort    10.96.39.222    <none>        443:30010/TCP   2m14s

      NAME                                        READY   UP-TO-DATE   AVAILABLE   AGE
      deployment.apps/dashboard-metrics-scraper   1/1     1            1           2m14s
      deployment.apps/kubernetes-dashboard        1/1     1            1           2m14s
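Instead of checking the STATUS column by eye, the "all pods Running" condition can be tested in a script. This is a minimal sketch, assuming the standard `kubectl get pods --no-headers` column order (NAME, READY, STATUS, RESTARTS, AGE); the function name `all_pods_running` is hypothetical.

```shell
# Hypothetical helper: reads pod listing lines on stdin and succeeds only if
# at least one pod is listed and every pod's STATUS (third column) is "Running".
all_pods_running() {
  awk '
    NF >= 3 { total++; if ($3 == "Running") ok++ }
    END     { exit !(total > 0 && ok == total) }
  '
}

# Example use against a live cluster:
#   kubectl get pods -n kubernetes-dashboard --no-headers | all_pods_running \
#     && echo "dashboard pods are ready"
```

The same function works for any namespace, since it only inspects the text it is given.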

Create an account

- 1: Create the service account:

      [root@k8s-master ~]# kubectl create serviceaccount dashboard-admin -n kubernetes-dashboard
      serviceaccount/dashboard-admin created

- 2: Grant it permissions by binding it to the cluster-admin ClusterRole:

      [root@k8s-master ~]# kubectl create clusterrolebinding dashboard-admin-rb --clusterrole=cluster-admin --serviceaccount=kubernetes-dashboard:dashboard-admin
      clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin-rb created

Get the token

- 1: Find the secret that belongs to the dashboard-admin account:

      [root@k8s-master ~]# kubectl get secrets -n kubernetes-dashboard | grep dashboard-admin
      dashboard-admin-token-nfdvh        kubernetes.io/service-account-token   3      55s

- 2: Run describe on that secret to read its token. (Save the token; in a production environment it must be kept secret.)

      [root@k8s-master ~]# kubectl describe secrets dashboard-admin-token-nfdvh -n kubernetes-dashboard
      Name:         dashboard-admin-token-nfdvh
      Namespace:    kubernetes-dashboard
      Labels:       <none>
      Annotations:  kubernetes.io/service-account.name: dashboard-admin
                    kubernetes.io/service-account.uid: 8db8eb4a-72d3-4b86-b5df-6eb4d54d8473

      Type:  kubernetes.io/service-account-token

      Data
      ====
      ca.crt:     1066 bytes
      namespace:  20 bytes
      token:      eyJhbGciOiJSUzI1NiIsImtpZCI6InA1TWNFMVAtTHlaeVBZcUlubEhvQ1JHcEctaElMRlJFRGZOTS1uTFo1V3cifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tbmZkdmgiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiOGRiOGViNGEtNzJkMy00Yjg2LWI1ZGYtNmViNGQ1NGQ4NDczIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmVybmV0ZXMtZGFzaGJvYXJkOmRhc2hib2FyZC1hZG1pbiJ9.XkUtUxWnqsqrHT8Nqdb26lY8awcW5bwbjdHmLo3CFSVmdXZgIzgLvHFNVU3WL8hAiNgl-csYDqvQ6cs7BiwgaOZgg691ho-ip1TcJi-uQwEcz-I6P6QFjVc527Rj3TUeVb5QREGQ2CYOf0YAsVZf9l-44ddvIXjlAFeuyIFHicMg2tOsceXbiu6Ha8dNeHWVkABKqCOf3PlbyZNshsWgEpvUAKoiO4bcSSt8twGmYdvE7nGDLYkSYpThFFhFbL3xG5DDINOsksQzsviDjgoIvC2JvECvFVgrDIN1jFkfuPxbePDP6XWnKTXS7fmcLHxmrAF5iBnJPZ0fhnRtGMgNPQ
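Copying a long token out of the describe output by hand is error-prone, so the token line can be picked out with a small filter. This is only a sketch: the function name `extract_token` is hypothetical, and it assumes the `token:` field appears on a single line, as in the output above.

```shell
# Hypothetical helper: reads `kubectl describe secrets ...` output on stdin
# and prints only the value of the "token:" field.
extract_token() {
  awk '$1 == "token:" { print $2; exit }'
}

# Example use (the secret name suffix differs on every cluster):
#   kubectl describe secrets dashboard-admin-token-nfdvh -n kubernetes-dashboard | extract_token
```

Treat the printed value with the same care as a password, for example by avoiding shared terminals and logs.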

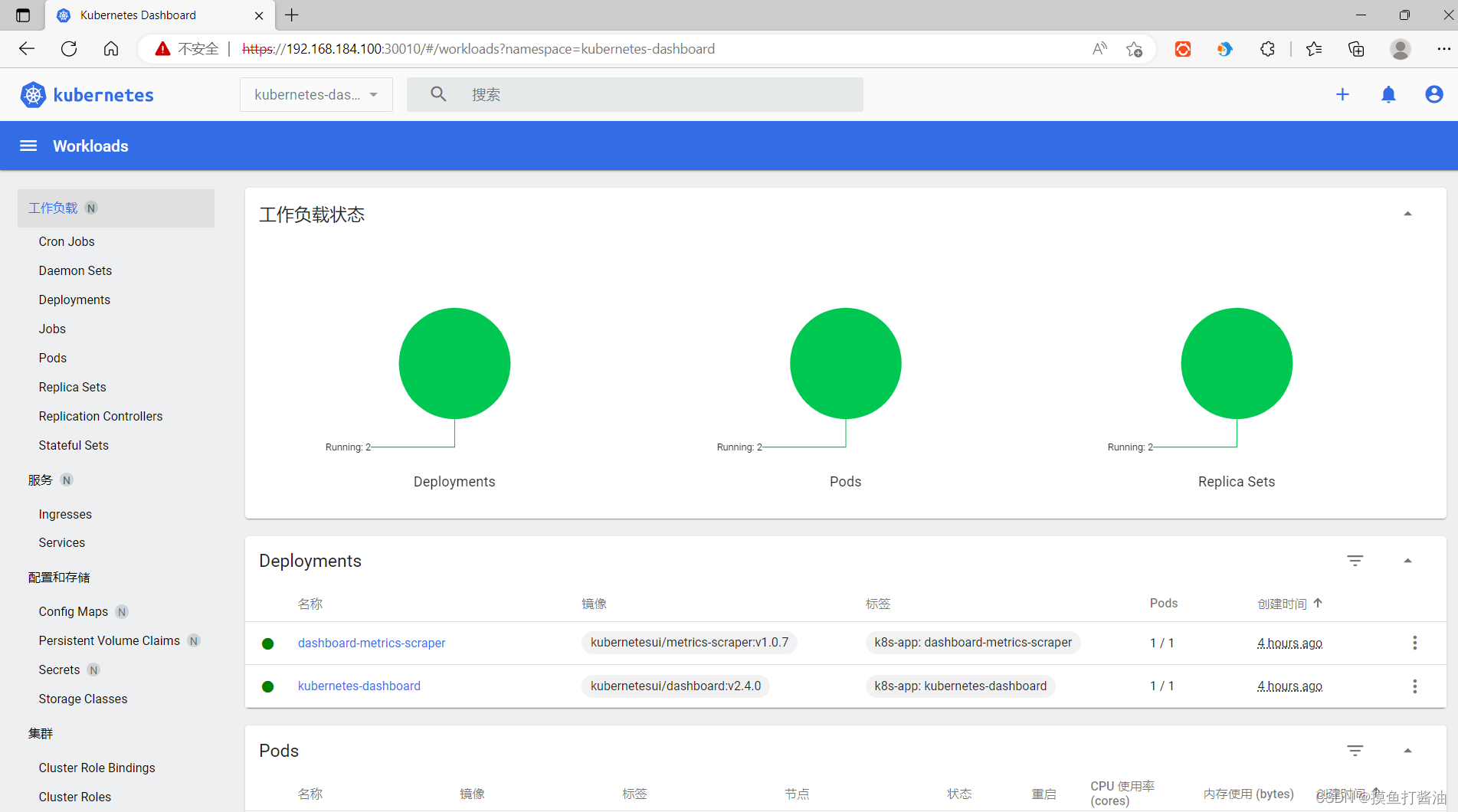

Open the kubernetes-dashboard web UI

- 1: Look up the port that the dashboard Service exposes externally. (We set nodePort to 30010 in the manifest, so the port stays the same even if the Service is recreated.)

      [root@k8s-master ~]# kubectl get service -n kubernetes-dashboard
      NAME                        TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)         AGE
      dashboard-metrics-scraper   ClusterIP   10.96.116.190   <none>        8000/TCP        3h39m
      kubernetes-dashboard        NodePort    10.96.39.222    <none>        443:30010/TCP   3h39m

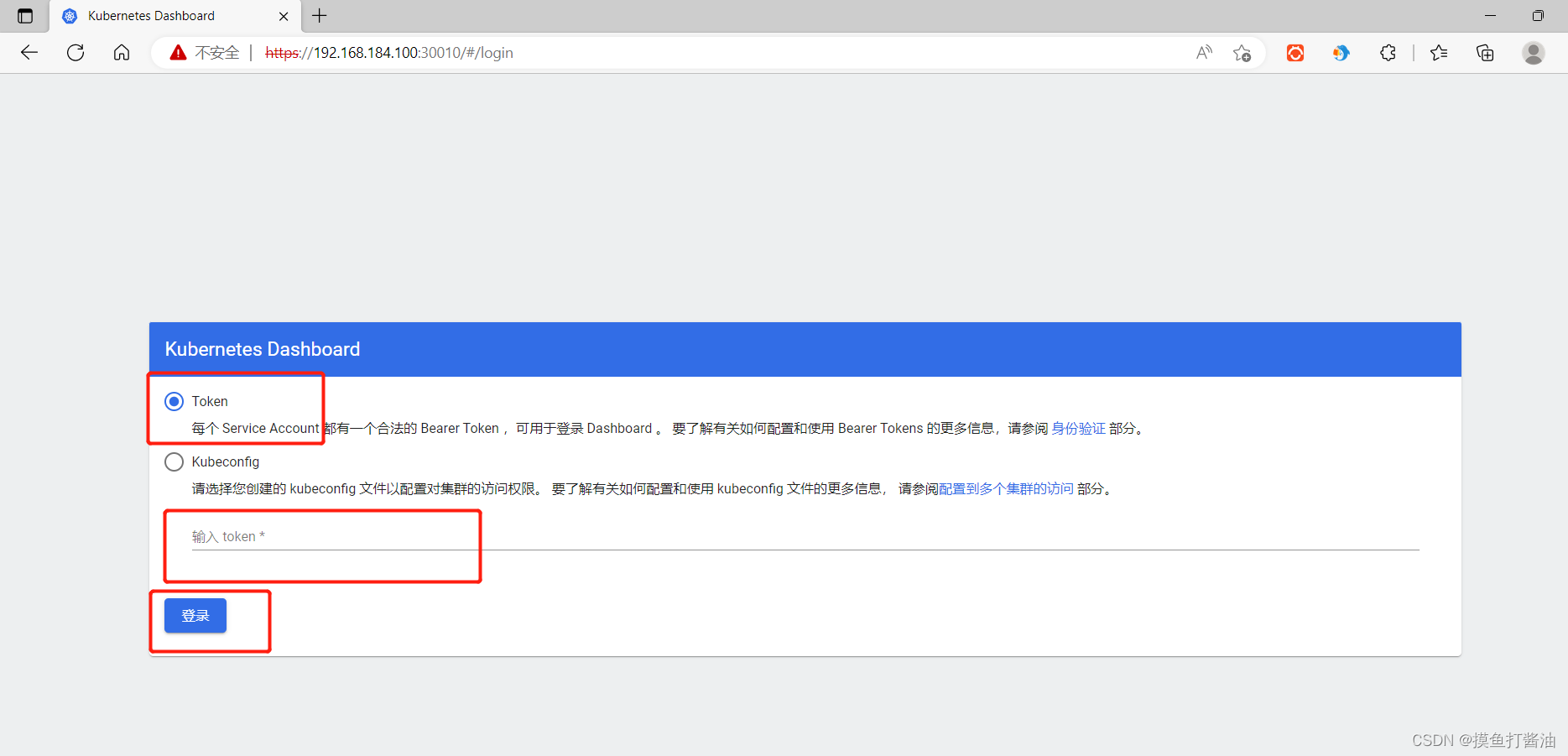

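- 2: Open port 30010 in a browser:

  - The URL format is https://<IP address of a cluster node>:30010/#/login (in this guide, https://192.168.184.100:30010/#/login).

- 3: Choose the Token login mode, paste the token obtained earlier into the input box, and click Sign in.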
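The login URL can also be assembled from the Service listing rather than typed by hand. The sketch below is an illustration only; `nodeport_from_ports` and `dashboard_login_url` are hypothetical helper names, and the PORT(S) format `443:30010/TCP` is taken from the output shown earlier.

```shell
# Hypothetical helper: extracts the NodePort from a PORT(S) value such as
# "443:30010/TCP" (service port, then node port, then protocol).
nodeport_from_ports() {
  mapping=${1#*:}                   # "443:30010/TCP" -> "30010/TCP"
  printf '%s\n' "${mapping%%/*}"    # "30010/TCP"     -> "30010"
}

# Hypothetical helper: builds the Dashboard login URL from a node IP and port.
dashboard_login_url() {
  printf 'https://%s:%s/#/login\n' "$1" "$2"
}

# Example use with the values from this guide:
#   dashboard_login_url 192.168.184.100 "$(nodeport_from_ports '443:30010/TCP')"
```

Note the scheme is https, because the Service forwards to the dashboard's TLS port 8443.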

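Option 2: Install Kuboard v3 (hostPath mode) on Kubernetes (v1.21.10) ⭐

Install the Kubernetes monitoring tool metrics-server

Version compatibility between Kubernetes and metrics-server

- The Kubernetes and metrics-server versions must follow the compatibility table below. (As of 2022/7/4, the latest metrics-server release series is 0.6.x.)

| Metrics-Server version | Supported k8s version |
|---|---|
| 0.6.x | 1.19+ |
| 0.5.x | *1.8+ |
| 0.4.x | *1.8+ |
| 0.3.x | 1.8-1.21 |

Install metrics-server (v0.6.1) on the master node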

- 1: Create the configuration file:

      vi k8s-metrics.yaml

- Its contents are as follows:

      apiVersion: v1
      kind: ServiceAccount
      metadata:
        labels:
          k8s-app: metrics-server
        name: metrics-server
        namespace: kube-system
      ---
      apiVersion: rbac.authorization.k8s.io/v1
      kind: ClusterRole
      metadata:
        labels:
          k8s-app: metrics-server
          rbac.authorization.k8s.io/aggregate-to-admin: "true"
          rbac.authorization.k8s.io/aggregate-to-edit: "true"
          rbac.authorization.k8s.io/aggregate-to-view: "true"
        name: system:aggregated-metrics-reader
      rules:
      - apiGroups:
        - metrics.k8s.io
        resources:
        - pods
        - nodes
        verbs:
        - get
        - list
        - watch
      ---
      apiVersion: rbac.authorization.k8s.io/v1
      kind: ClusterRole
      metadata:
        labels:
          k8s-app: metrics-server
        name: system:metrics-server
      rules:
      - apiGroups:
        - ""
        resources:
        - nodes/metrics
        verbs:
        - get
      - apiGroups:
        - ""
        resources:
        - pods
        - nodes
        verbs:
        - get
        - list
        - watch
      ---
      apiVersion: rbac.authorization.k8s.io/v1
      kind: RoleBinding
      metadata:
        labels:
          k8s-app: metrics-server
        name: metrics-server-auth-reader
        namespace: kube-system
      roleRef:
        apiGroup: rbac.authorization.k8s.io
        kind: Role
        name: extension-apiserver-authentication-reader
      subjects:
      - kind: ServiceAccount
        name: metrics-server
        namespace: kube-system
      ---
      apiVersion: rbac.authorization.k8s.io/v1
      kind: ClusterRoleBinding
      metadata:
        labels:
          k8s-app: metrics-server
        name: metrics-server:system:auth-delegator
      roleRef:
        apiGroup: rbac.authorization.k8s.io
        kind: ClusterRole
        name: system:auth-delegator
      subjects:
      - kind: ServiceAccount
        name: metrics-server
        namespace: kube-system
      ---
      apiVersion: rbac.authorization.k8s.io/v1
      kind: ClusterRoleBinding
      metadata:
        labels:
          k8s-app: metrics-server
        name: system:metrics-server
      roleRef:
        apiGroup: rbac.authorization.k8s.io
        kind: ClusterRole
        name: system:metrics-server
      subjects:
      - kind: ServiceAccount
        name: metrics-server
        namespace: kube-system
      ---
      apiVersion: v1
      kind: Service
      metadata:
        labels:
          k8s-app: metrics-server
        name: metrics-server
        namespace: kube-system
      spec:
        ports:
        - name: https
          port: 443
          protocol: TCP
          targetPort: https
        selector:
          k8s-app: metrics-server
      ---
      apiVersion: apps/v1
      kind: Deployment
      metadata:
        labels:
          k8s-app: metrics-server
        name: metrics-server
        namespace: kube-system
      spec:
        selector:
          matchLabels:
            k8s-app: metrics-server
        strategy:
          rollingUpdate:
            maxUnavailable: 0
        template:
          metadata:
            labels:
              k8s-app: metrics-server
          spec:
            containers:
            - args:
              - --cert-dir=/tmp
              - --secure-port=4443
              - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
              - --kubelet-use-node-status-port
              - --metric-resolution=15s
              - --kubelet-insecure-tls  # skip kubelet TLS certificate verification (insecure)
              image: bitnami/metrics-server:0.6.1  # upstream image: k8s.gcr.io/metrics-server/metrics-server:v0.6.1
              imagePullPolicy: IfNotPresent
              livenessProbe:
                failureThreshold: 3
                httpGet:
                  path: /livez
                  port: https
                  scheme: HTTPS
                periodSeconds: 10
              name: metrics-server
              ports:
              - containerPort: 4443
                name: https
                protocol: TCP
              readinessProbe:
                failureThreshold: 3
                httpGet:
                  path: /readyz
                  port: https
                  scheme: HTTPS
                initialDelaySeconds: 20
                periodSeconds: 10
              resources:
                requests:
                  cpu: 100m
                  memory: 200Mi
              securityContext:
                allowPrivilegeEscalation: false
                readOnlyRootFilesystem: true
                runAsNonRoot: true
                runAsUser: 1000
              volumeMounts:
              - mountPath: /tmp
                name: tmp-dir
            nodeSelector:
              kubernetes.io/os: linux
            priorityClassName: system-cluster-critical
            serviceAccountName: metrics-server
            volumes:
            - emptyDir: {}
              name: tmp-dir
      ---
      apiVersion: apiregistration.k8s.io/v1
      kind: APIService
      metadata:
        labels:
          k8s-app: metrics-server
        name: v1beta1.metrics.k8s.io
      spec:
        group: metrics.k8s.io
        groupPriorityMinimum: 100
        insecureSkipTLSVerify: true
        service:
          name: metrics-server
          namespace: kube-system
        version: v1beta1
        versionPriority: 100


- 2: Apply the configuration file:

      [root@k8s-master ~]# kubectl apply -f k8s-metrics.yaml
      serviceaccount/metrics-server created
      clusterrole.rbac.authorization.k8s.io/system:aggregated-metrics-reader created
      clusterrole.rbac.authorization.k8s.io/system:metrics-server created
      rolebinding.rbac.authorization.k8s.io/metrics-server-auth-reader created
      clusterrolebinding.rbac.authorization.k8s.io/metrics-server:system:auth-delegator created
      clusterrolebinding.rbac.authorization.k8s.io/system:metrics-server created
      service/metrics-server created
      deployment.apps/metrics-server created
      apiservice.apiregistration.k8s.io/v1beta1.metrics.k8s.io created

- 3: Wait a moment, then check whether the installation succeeded. (The output below shows a successful installation.)

      [root@k8s-master ~]# kubectl top nodes --use-protocol-buffers
      NAME          CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
      k8s-master    242m         12%    1130Mi          61%
      k8s-slave01   140m         7%     896Mi           48%
      k8s-slave02   126m         6%     787Mi           42%
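Once `kubectl top nodes` returns data, its output is easy to post-process. As a rough sketch, the hypothetical function `nodes_over_memory` below lists the nodes whose MEMORY% exceeds a threshold, assuming the five-column layout shown above.

```shell
# Hypothetical helper: reads `kubectl top nodes` output (with header) on stdin
# and prints the names of nodes whose MEMORY% is above the given threshold.
nodes_over_memory() {
  awk -v limit="$1" '
    NR > 1 {
      pct = $5
      sub(/%$/, "", pct)                        # "61%" -> "61"
      if (pct + 0 > limit + 0) print $1
    }
  '
}

# Example use against a live cluster:
#   kubectl top nodes --use-protocol-buffers | nodes_over_memory 50
```

With the sample output from this guide and a threshold of 50, only k8s-master (61%) is reported.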

Use hostPath mode for persistence ⭐

- 1: Create a working directory:

      mkdir kuboard && cd kuboard

- 2: Download the manifest:

      wget https://addons.kuboard.cn/kuboard/kuboard-v3.yaml

- 3: Apply the manifest:

      [root@k8s-master kuboard]# kubectl apply -f kuboard-v3.yaml
      namespace/kuboard created
      configmap/kuboard-v3-config created
      serviceaccount/kuboard-boostrap created
      clusterrolebinding.rbac.authorization.k8s.io/kuboard-boostrap-crb created
      daemonset.apps/kuboard-etcd created
      deployment.apps/kuboard-v3 created
      service/kuboard-v3 created

- 4: Wait for Kuboard v3 to finish installing. The installation has succeeded when your pods match the list below and all of them are in the Running state:

      [root@k8s-master kuboard]# kubectl get pods -n kuboard
      NAME                               READY   STATUS    RESTARTS   AGE
      kuboard-agent-2-7776757c59-wfmdw   1/1     Running   0          2m2s
      kuboard-agent-649bfbb7f9-kxs64     1/1     Running   0          2m2s
      kuboard-etcd-cwgkl                 1/1     Running   0          4m
      kuboard-questdb-5c94d4698-vgkm9    1/1     Running   0          2m2s
      kuboard-v3-5fc46b5557-n6vr6        1/1     Running   0          4m

Note: if your pods differ from the list above, something went wrong. See the troubleshooting section later in this article.

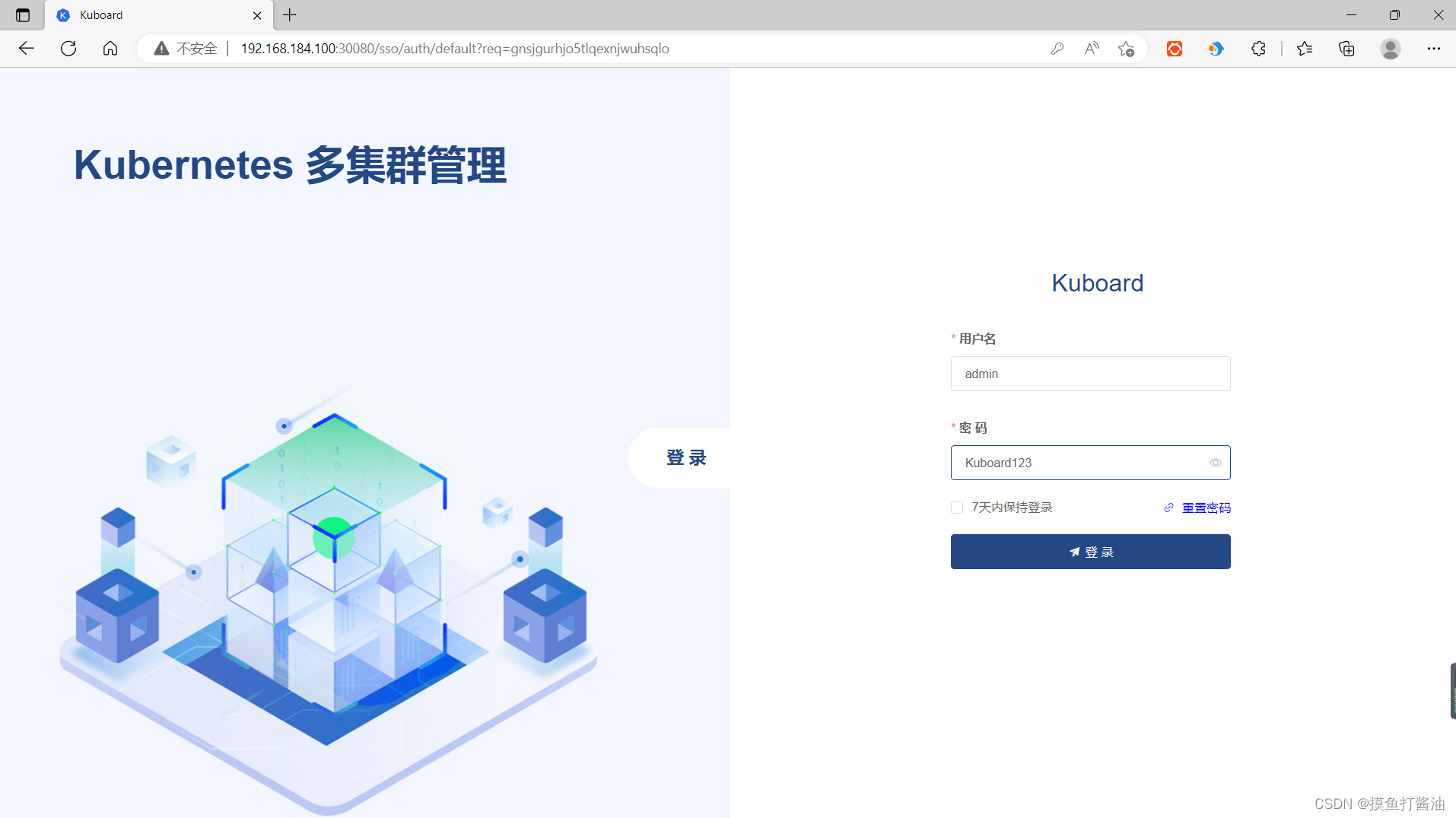

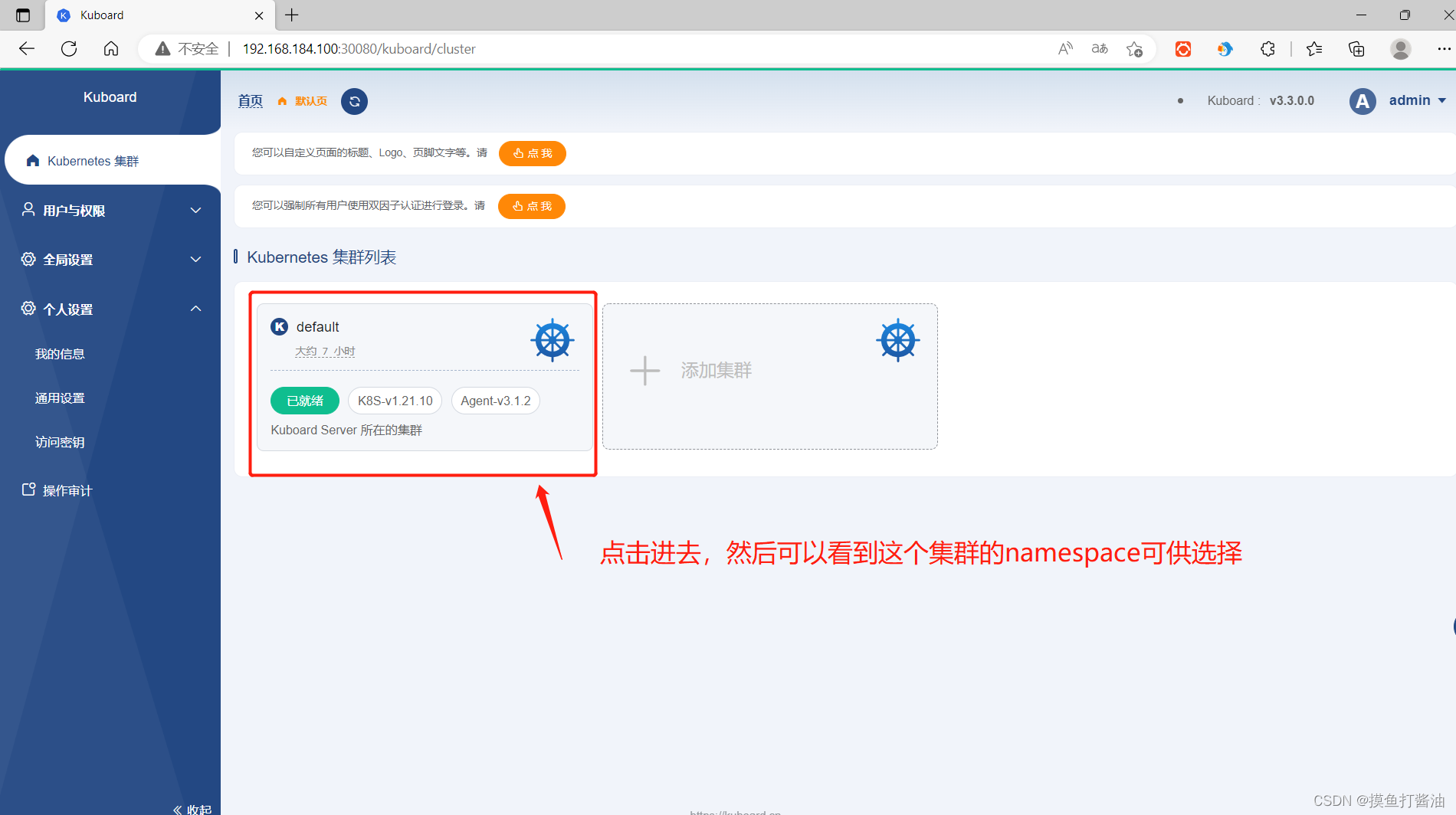

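Access the Kuboard management UI

- Open the following link in a browser: http://<your node IP address>:30080 (in this guide, 192.168.184.100:30080).

- Enter the initial username and password, then log in:

  - Username: admin
  - Password: Kuboard123

Login page and home page: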

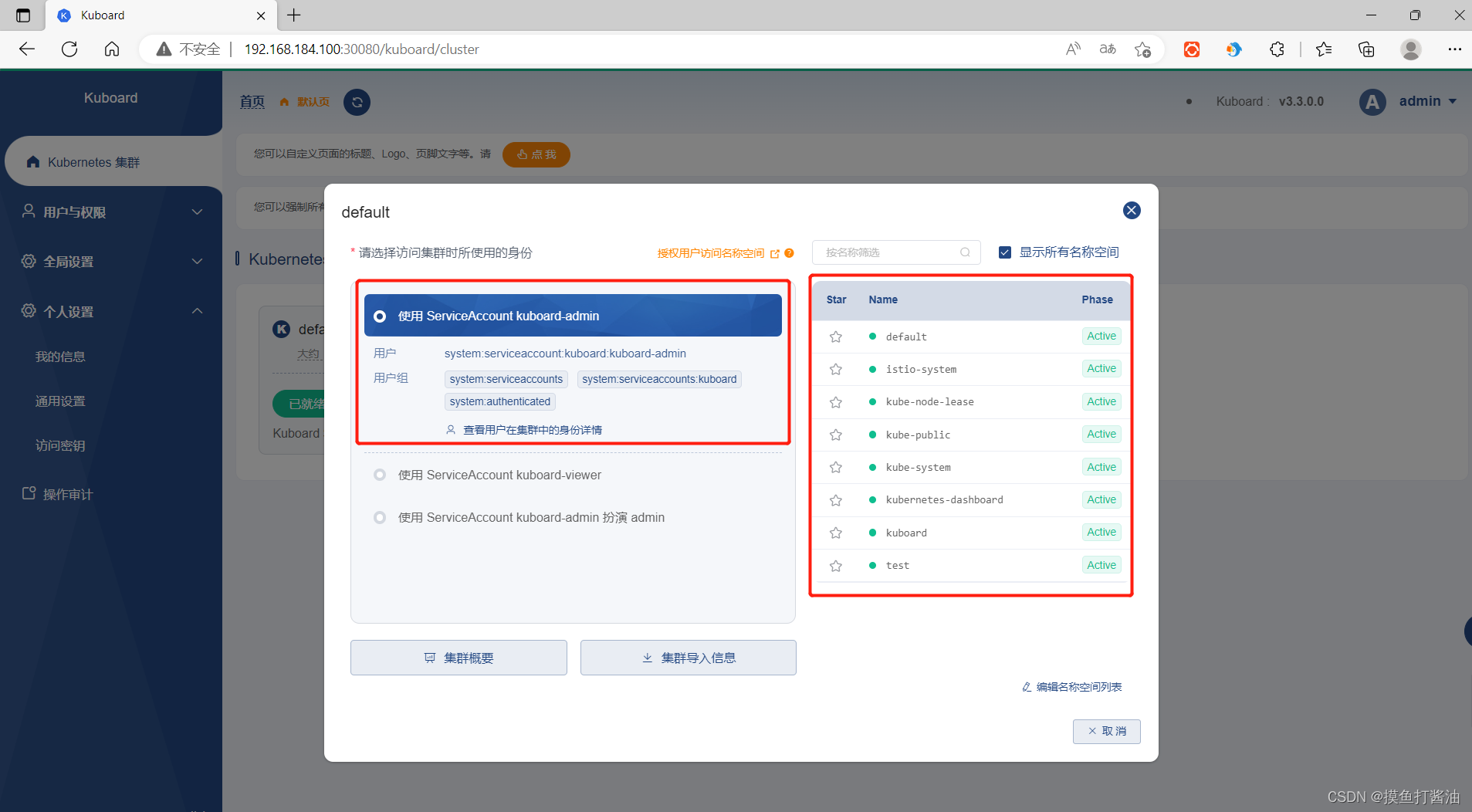

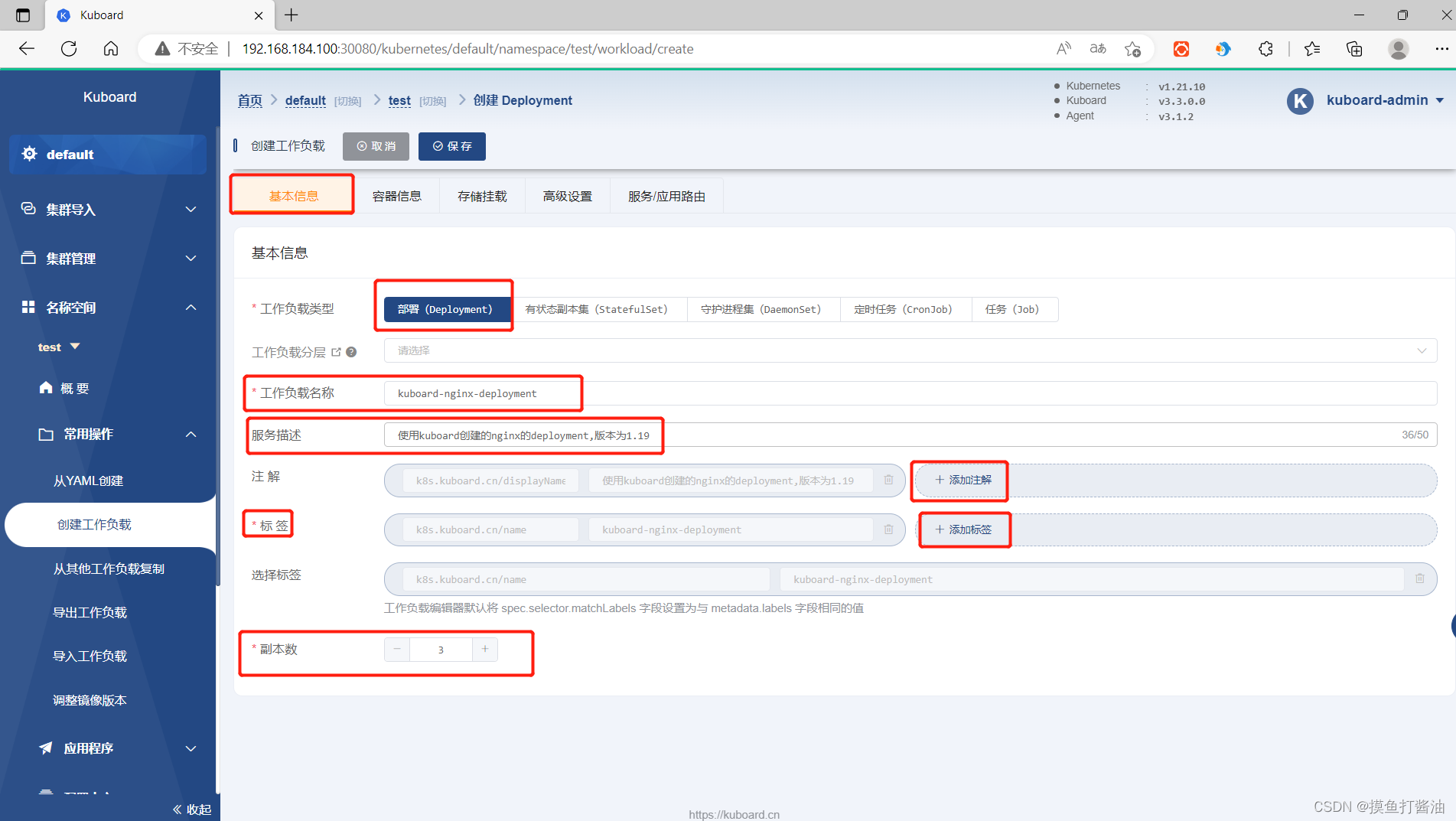

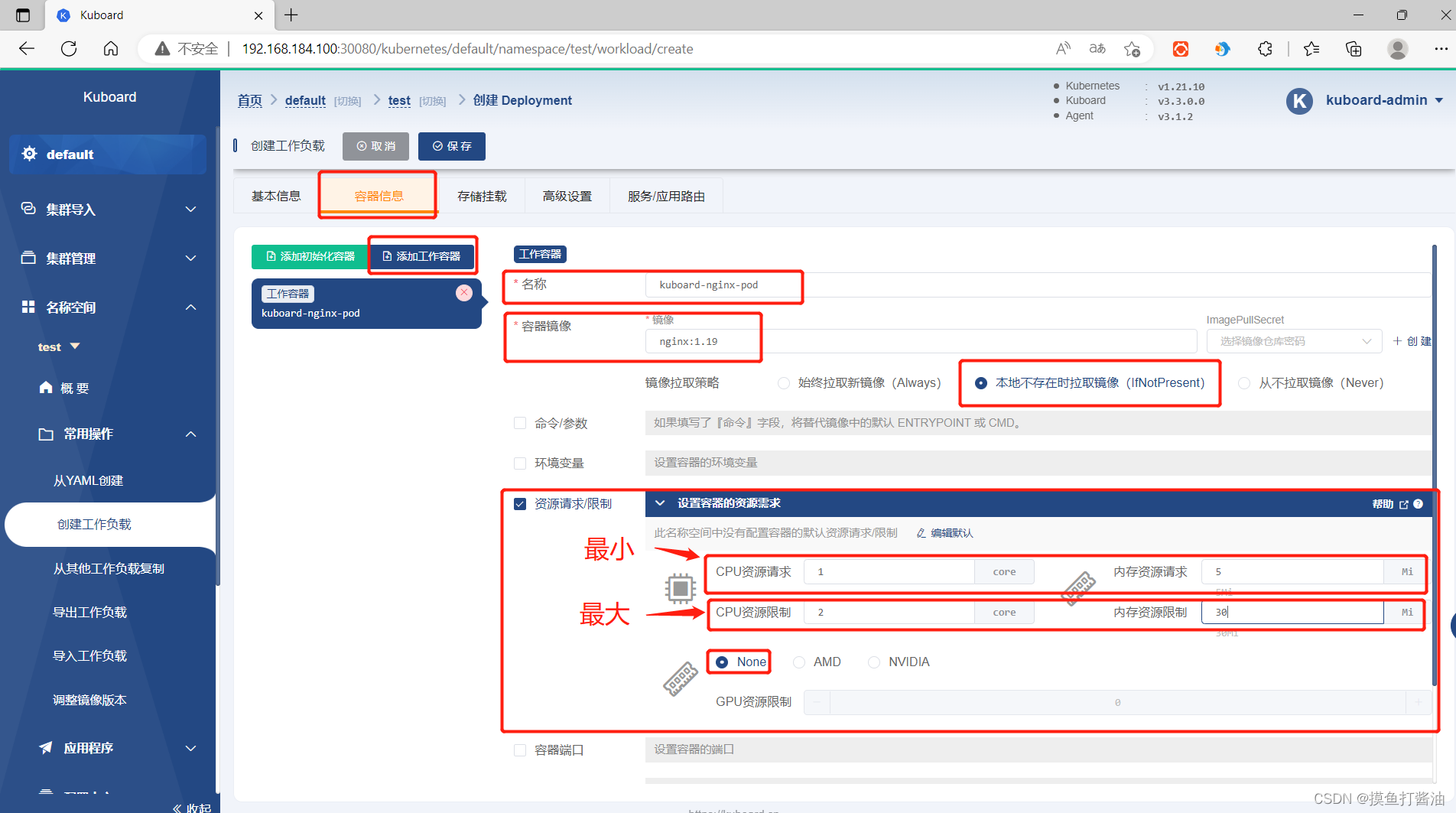

Hands-on walkthrough (illustrated in detail) ⭐

Walkthrough steps:

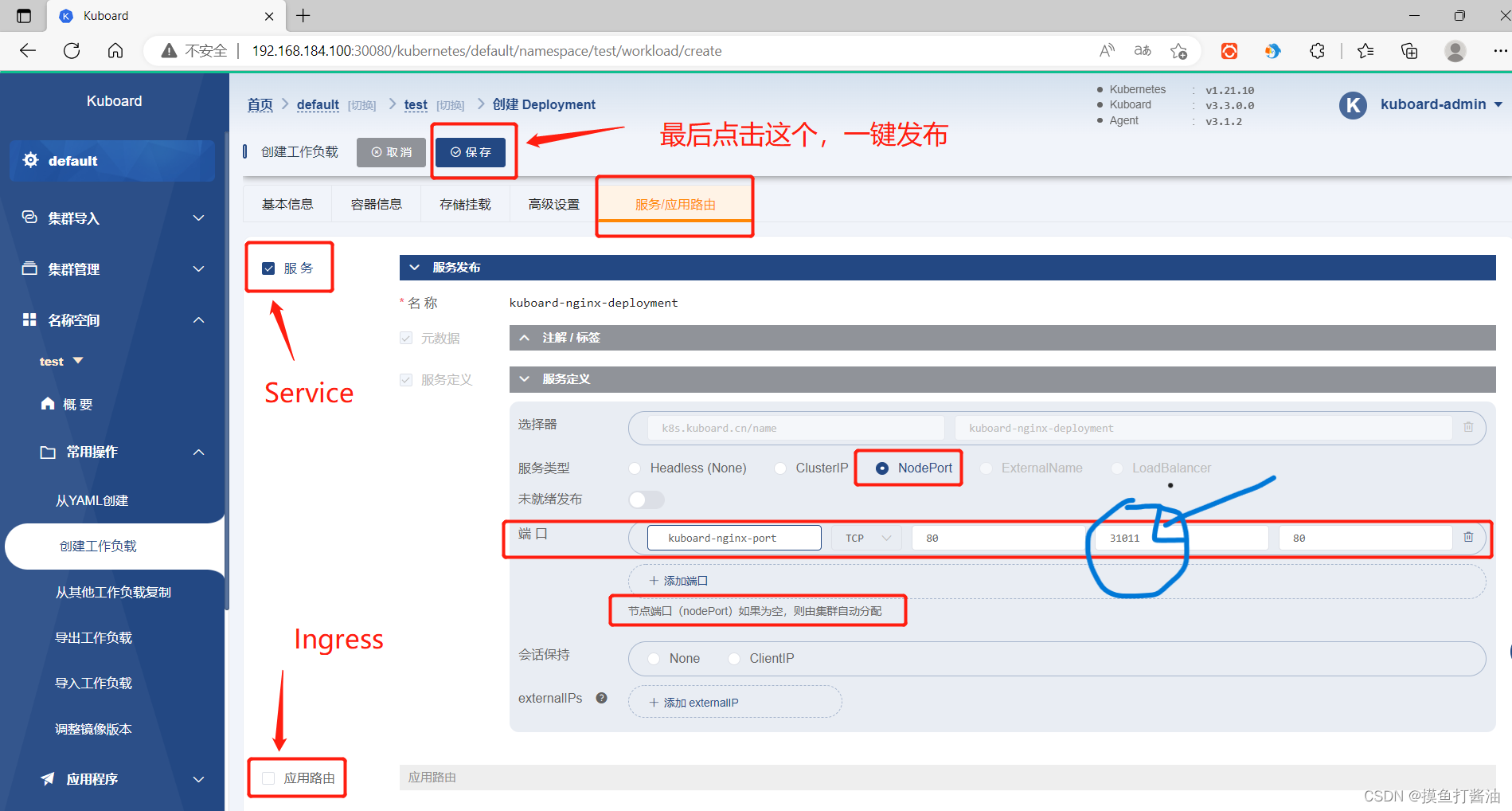

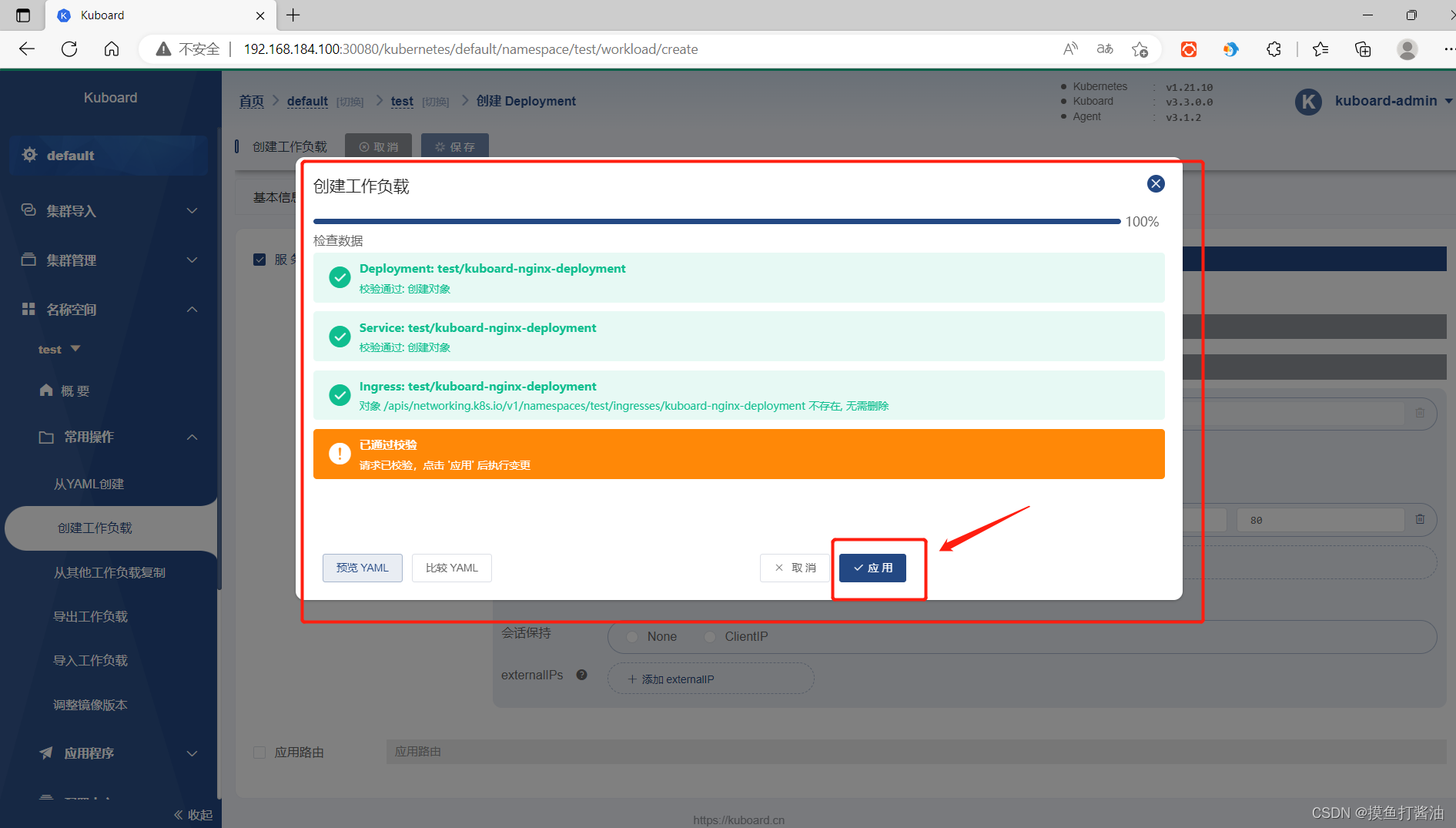

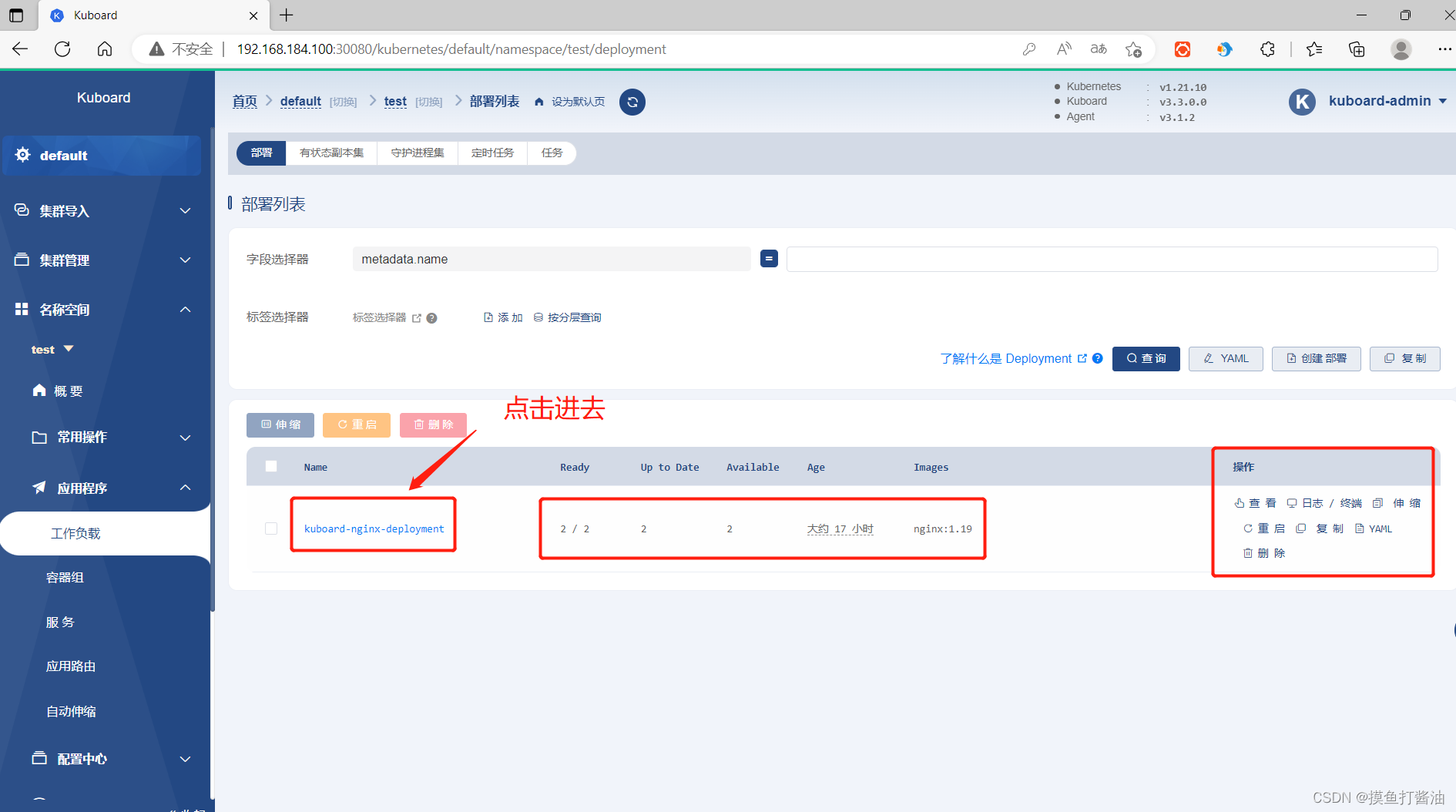

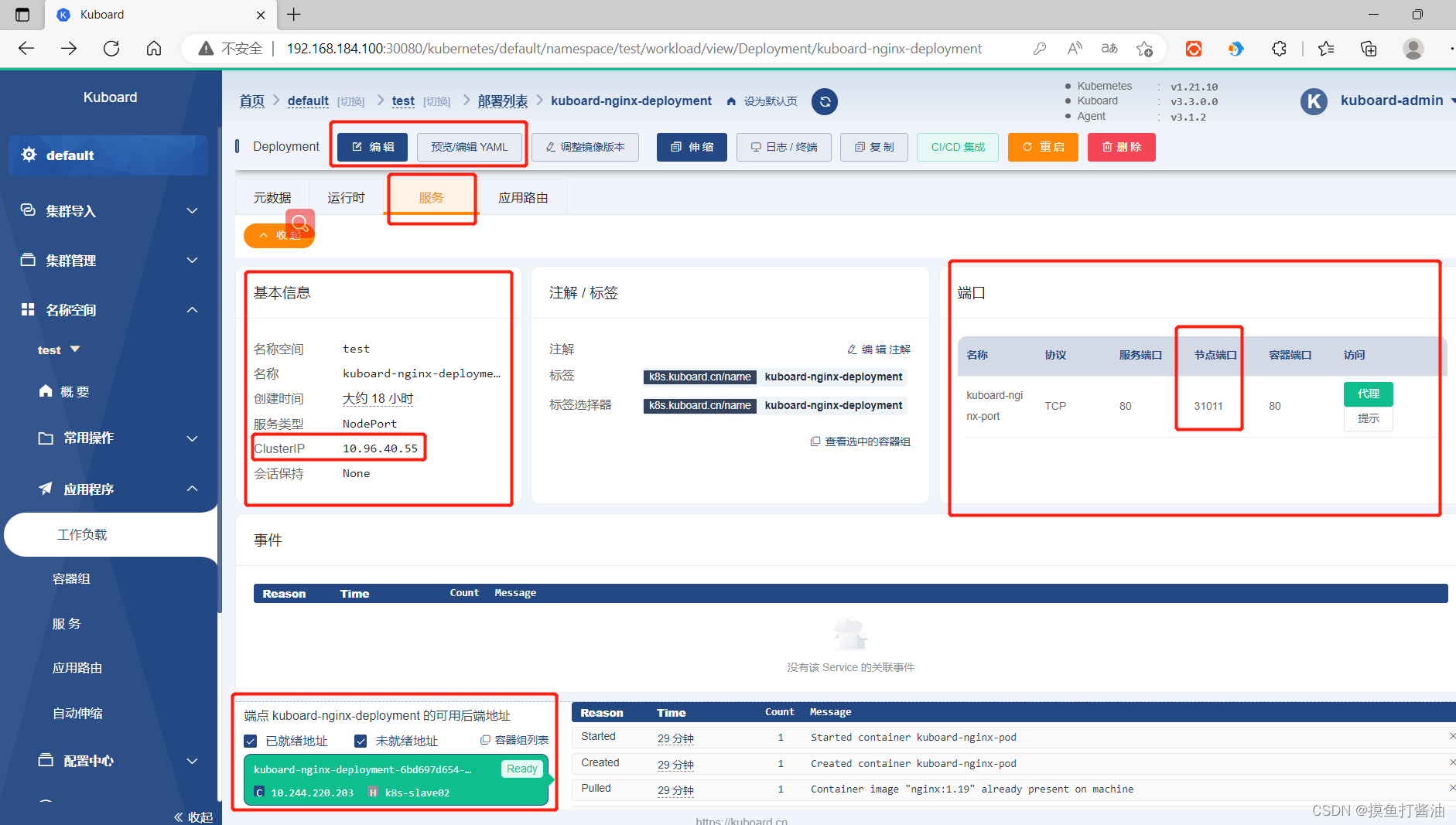

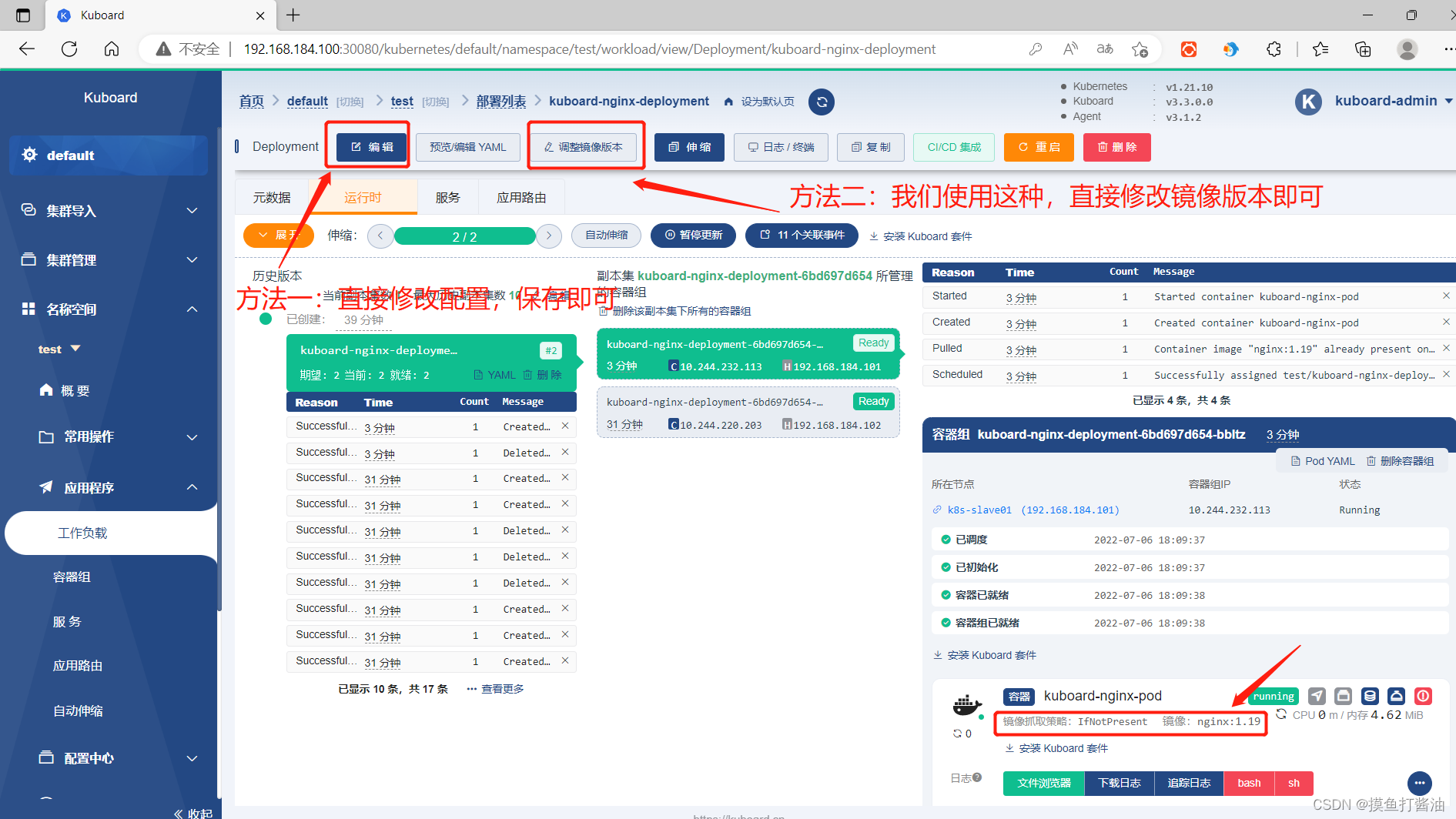

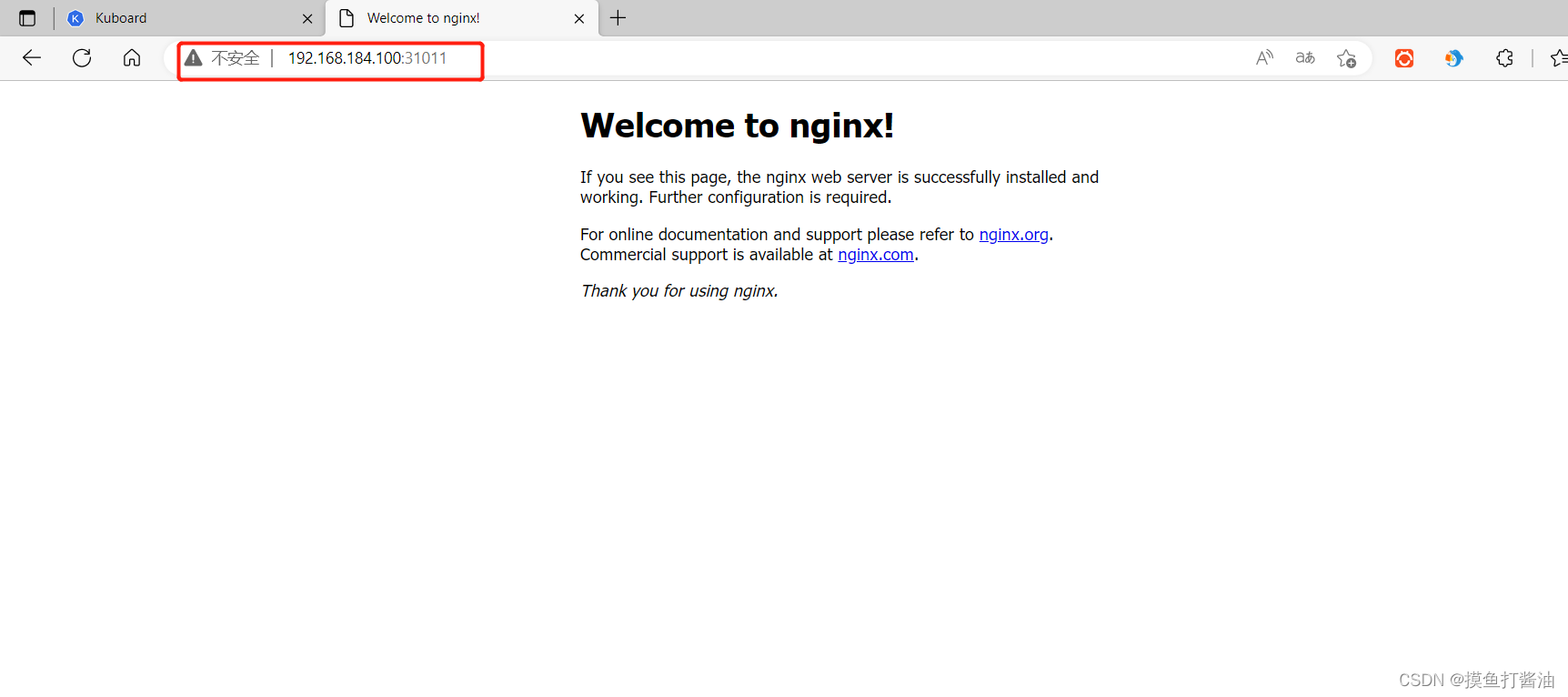

1: Use Kuboard to create a Deployment (nginx:1.19) and a Service that is reachable from outside the cluster on port 31011.

2: Use Kuboard to scale the Deployment up and down, and enable automatic scaling (HPA).

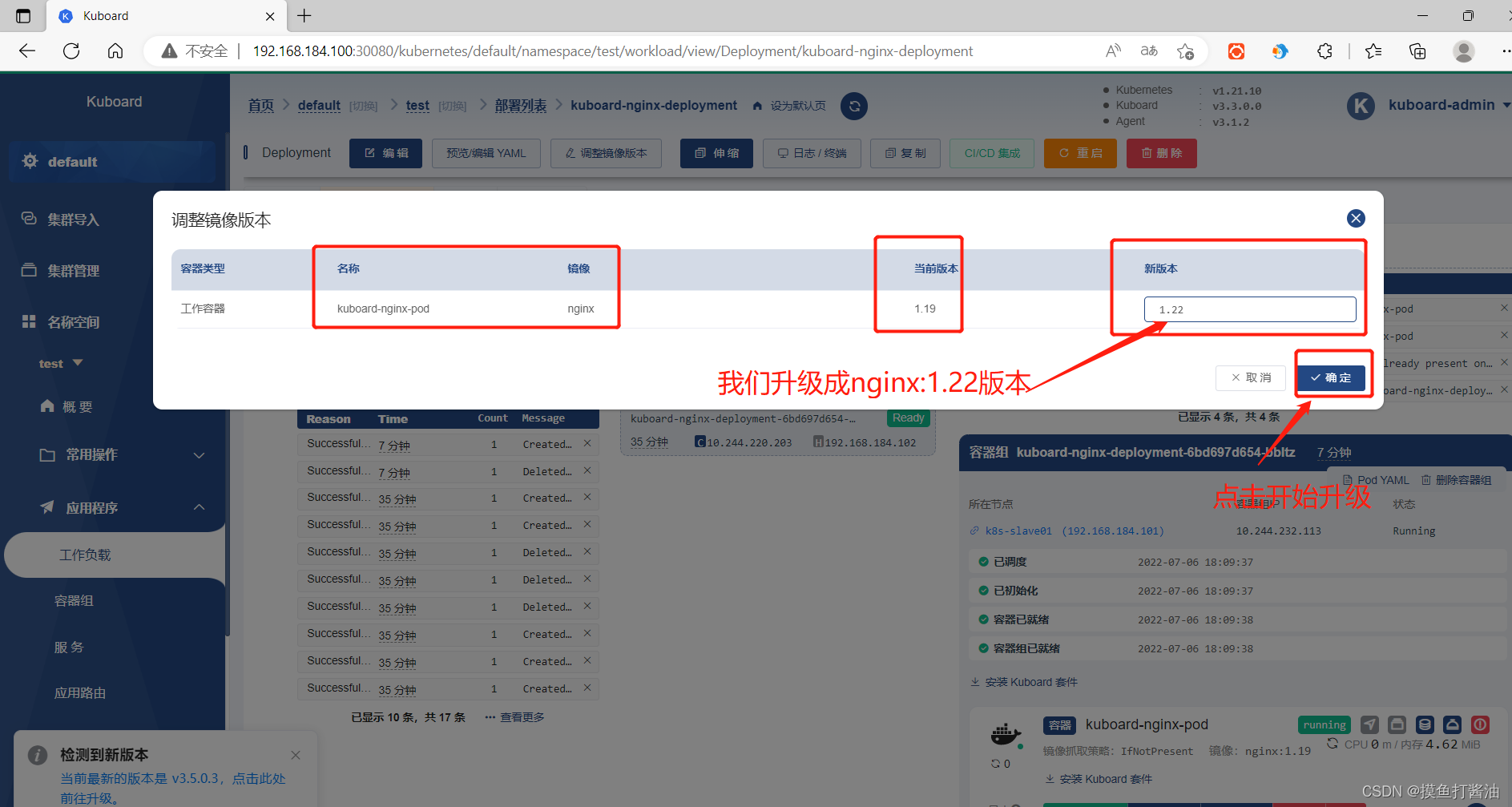

3: Use Kuboard to upgrade the Deployment to nginx:1.22.

4: Open the Service in a browser (node IP plus port 31011).

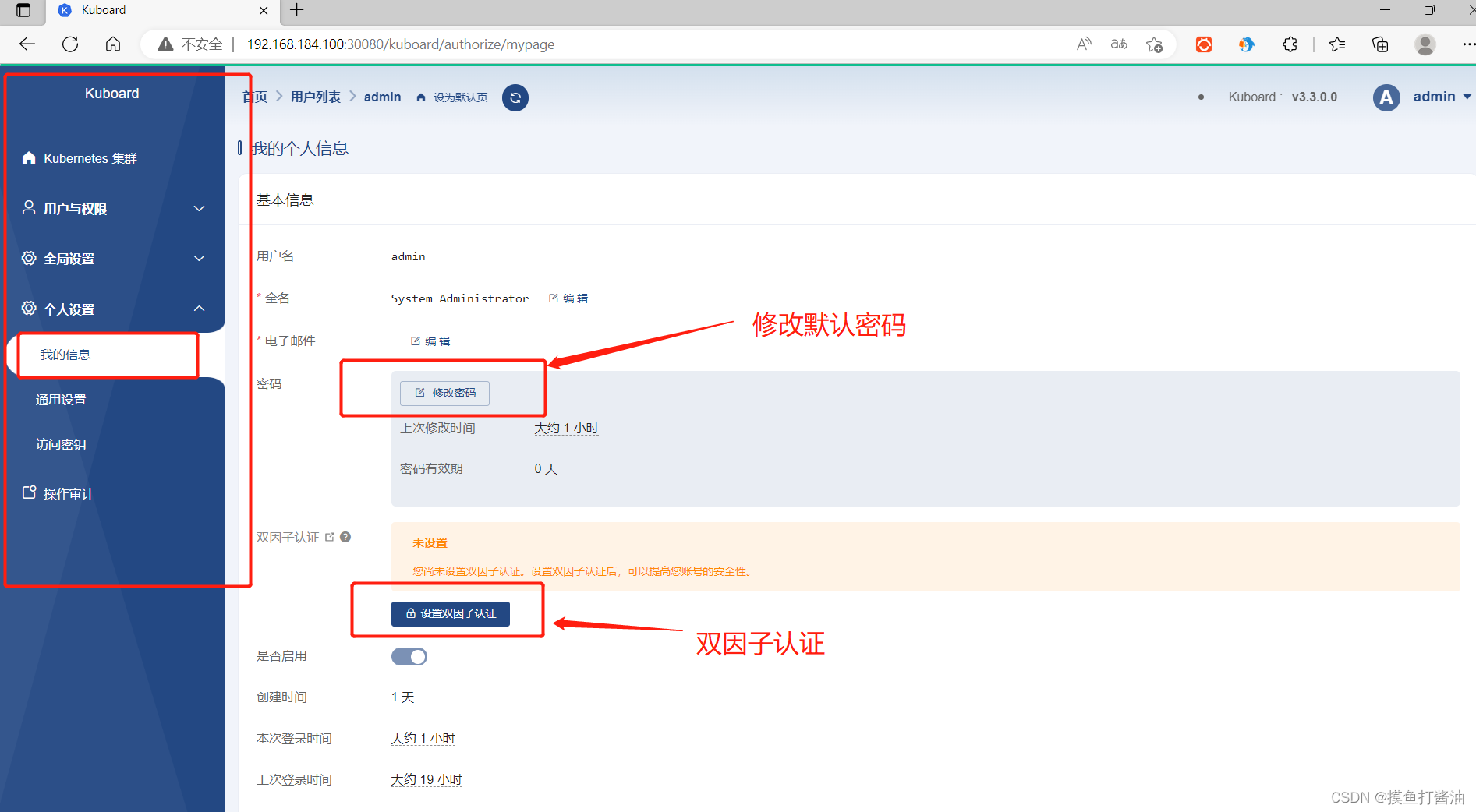

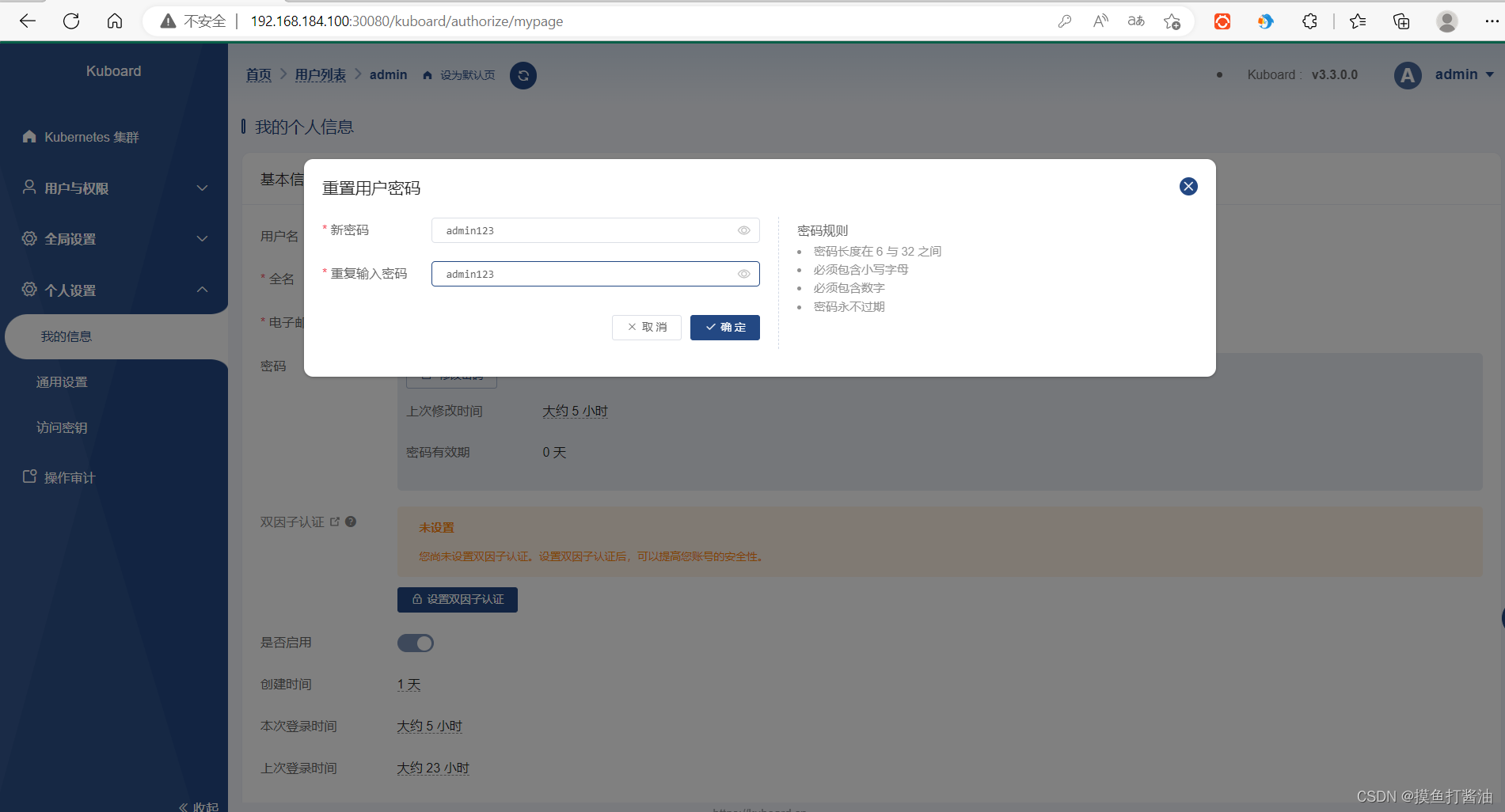

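Change the Kuboard password

Uninstall Kuboard v3 (use with caution)

- 1: Delete the resources created from the manifest:

      kubectl delete -f https://addons.kuboard.cn/kuboard/kuboard-v3.yaml

- 2: Clean up leftover data. On the master node and on every node labeled k8s.kuboard.cn/role=etcd, run:

      rm -rf /usr/share/kuboard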

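Common Kuboard installation errors

CrashLoopBackOff:

- If the kuboard-v3-xxxxx container is in the CrashLoopBackOff state, possible causes are:

  - The kuboard-etcd-xxxx container is missing. See the description of the missing Master Role later in this section.
  - The kuboard-etcd-xxxx container is not ready. Check the logs of the kuboard-etcd-xxxx container and fix whatever prevents it from starting.

- If kuboard-agent-xxxxx is in the CrashLoopBackOff state, possible causes are:

  - The kuboard-v3 it depends on is not ready yet. Wait a little while (about 3-5 minutes, depending on how fast your server pulls images).
  - kuboard-v3 is already READY (1/1), but a misconfigured cluster network plugin or another network issue prevents the kuboard-agent container from reaching the kuboard-v3 container.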

Missing Master Role:

- The Master Role may be missing in the following situations:

  - When you install Kuboard this way on a managed Kubernetes cluster on Alibaba Cloud, Tencent Cloud, or another cloud provider, kubectl get nodes does not show any master node.

  - When the cluster was installed from binaries, it may lack the Master Role. The same happens if you removed the node-role.kubernetes.io/master= label from the master node. In that case kubectl get nodes prints something like this:

        [root@k8s-19-master-01 ~]# kubectl get nodes
        NAME               STATUS   ROLES    AGE   VERSION
        k8s-19-master-01   Ready    <none>   19d   v1.19.11
        k8s-19-node-01     Ready    <none>   19d   v1.19.11
        k8s-19-node-02     Ready    <none>   19d   v1.19.11
        k8s-19-node-03     Ready    <none>   19d   v1.19.11

- When the cluster has no Master Role node, you can add the label k8s.kuboard.cn/role=etcd to one or three worker nodes to increase the number of kuboard-etcd instances.

- Run the following command to add the required label to the node your-node-name:

      kubectl label nodes your-node-name k8s.kuboard.cn/role=etcd
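Whether a cluster is in the "missing Master Role" situation can be read off the ROLES column of `kubectl get nodes`. The function below is a minimal sketch under that assumption; the name `has_master_role` is hypothetical, and it only looks for the role name `master`, which is the label this article mentions.

```shell
# Hypothetical helper: reads `kubectl get nodes` output (with header) on stdin
# and succeeds if any node lists "master" in its ROLES column (third column).
has_master_role() {
  awk '
    NR > 1 && $3 ~ /(^|,)master(,|$)/ { found = 1 }
    END { exit !found }
  '
}

# Example use against a live cluster:
#   kubectl get nodes | has_master_role || echo "no master role: label a node for kuboard-etcd"
```

For the sample output above, where every node shows `<none>`, the check fails, which is the case where the k8s.kuboard.cn/role=etcd label is needed.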

etcd:

- Kuboard v3 relies on etcd for data persistence. With this installation method, the kuboard-etcd volume is mapped to a hostPath on the host node (the /usr/share/kuboard/etcd directory).

- So that etcd can load its previous data after every restart, kuboard-etcd is deployed as a DaemonSet whose pods are always scheduled onto master nodes. You therefore get one kuboard-etcd instance per master node.

- If you have only one or two master nodes but still want kuboard-etcd to be highly available, you can add the label k8s.kuboard.cn/role=etcd to one or two worker nodes to increase the number of kuboard-etcd instances.

  - If Kuboard v3 is already installed, follow these steps when adjusting the etcd count this way; otherwise etcd will not start correctly:

    - Run kubectl delete daemonset kuboard-etcd -n kuboard
    - Add the label to the nodes
    - Run kubectl apply -f https://addons.kuboard.cn/kuboard/kuboard-v3.yaml

- An odd number of etcd instances is recommended.
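The recommendation to run an odd number of etcd instances follows from how etcd reaches agreement: a write needs a majority (quorum) of members. The arithmetic can be sketched as follows; the function names are hypothetical and the code only evaluates the majority formula.

```shell
# Hypothetical helpers: majority size and tolerated member failures
# for an etcd cluster with the given number of members.
etcd_quorum() {
  echo $(( $1 / 2 + 1 ))
}

etcd_fault_tolerance() {
  echo $(( ($1 - 1) / 2 ))
}

# Example use:
#   for n in 1 2 3 4 5; do
#     echo "$n members: quorum $(etcd_quorum "$n"), tolerates $(etcd_fault_tolerance "$n") failure(s)"
#   done
```

Going from 3 to 4 members raises the quorum from 2 to 3 but still tolerates only one failure, so the extra even member adds cost without adding resilience.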


- Original article: https://blog.csdn.net/weixin_50071998/article/details/125591984