
Step 81. Time Series Modeling in Practice: AdaBoost Regression Modeling

Demonstrated on 64-bit Windows 10

I. Preface

This installment introduces AdaBoost regression.

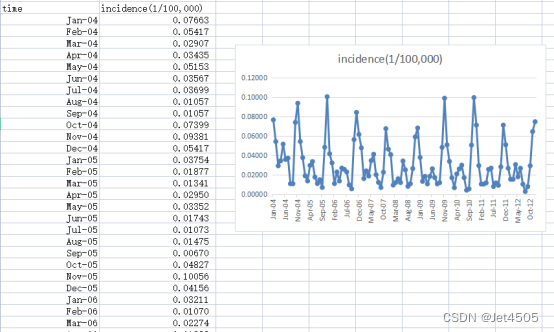

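As before, the demonstration uses the following data:

The public dataset from the 2015 PLoS One article "Comparison of Two Hybrid Models for Forecasting the Incidence of Hemorrhagic Fever with Renal Syndrome in Jiangsu Province, China". It contains the monthly incidence of hemorrhagic fever with renal syndrome (HFRS) in Jiangsu Province from January 2004 to December 2012. Data from January 2004 to December 2011 are used to forecast the incidence for the 12 months of 2012.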

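II. AdaBoost Regression

(1) Code walkthrough

sklearn.ensemble.AdaBoostRegressor(estimator=None, *, n_estimators=50, learning_rate=1.0, loss='linear', random_state=None, base_estimator='deprecated')

At first glance, its parameters look much like those of AdaBoostClassifier (used for classification), so the similarities and differences are listed below for easy comparison: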

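Shared parameters:

estimator (named base_estimator in older releases; the signature above marks base_estimator as deprecated): the base estimator used to train the weak learners. If None, the classifier defaults to a decision tree classifier (max_depth=1) and the regressor to a decision tree regressor (max_depth=3).

n_estimators: the maximum number of weak learners; boosting stops early if a perfect fit is reached.

learning_rate: the weight applied to each weak learner at each boosting iteration; a smaller value shrinks each learner's contribution.

random_state: a seed for the random number generator, or a random number generator instance.

Note that algorithm is not a shared parameter: only AdaBoostClassifier accepts it. AdaBoostRegressor has no algorithm argument; it implements the AdaBoost.R2 algorithm.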

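Differences:

Parameter specific to AdaBoostClassifier:

algorithm: the boosting algorithm to run, either "SAMME" or "SAMME.R". In the scikit-learn version whose signature is shown above, the default is "SAMME.R". "SAMME.R" is the real-valued variant of "SAMME"; it typically converges faster and reaches a lower test error in fewer boosting iterations, because it relies on class probabilities rather than hard class predictions.

Parameter specific to AdaBoostRegressor:

loss: the loss function used to update the sample weights after each boosting iteration. Allowed values are 'linear', 'square', and 'exponential'.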
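To make the three loss options concrete, here is a minimal NumPy sketch of how each one rescales the per-sample absolute errors before the weights are updated, following the AdaBoost.R2 formulation. The function name r2_loss and the sample arrays are illustrative assumptions, not part of scikit-learn's public API.

```python
import numpy as np

def r2_loss(y_true, y_pred, loss="linear"):
    """Per-sample AdaBoost.R2 loss in [0, 1] (hypothetical helper for illustration)."""
    abs_err = np.abs(np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float))
    max_err = abs_err.max()
    if max_err == 0:  # perfect fit: every loss is zero
        return np.zeros_like(abs_err)
    scaled = abs_err / max_err  # normalise by the largest error
    if loss == "linear":
        return scaled
    if loss == "square":
        return scaled ** 2
    if loss == "exponential":
        return 1.0 - np.exp(-scaled)
    raise ValueError(f"unknown loss: {loss!r}")

y_true = np.array([1.0, 2.0, 3.0])
y_pred = np.array([1.0, 3.0, 5.0])  # absolute errors: 0, 1, 2
for name in ("linear", "square", "exponential"):
    print(name, r2_loss(y_true, y_pred, name))
```

In this toy case the middle sample scores 0.5 under 'linear' but only 0.25 under 'square', so the squared loss concentrates the reweighting on the worst-predicted samples.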

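In short, most parameters of the two classes are the same. The main difference is that the classifier exposes an algorithm parameter with two boosting variants ("SAMME" and "SAMME.R"), while the regressor instead has a loss parameter that defines the loss function used when updating the weights.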
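The comparison can be checked quickly in code. The sketch below inspects the regressor's parameters and fits one model per loss option; the synthetic sine data and random_state=0 are assumptions chosen only to make the example self-contained and repeatable.

```python
import numpy as np
from sklearn.ensemble import AdaBoostRegressor

# Synthetic data, used here only for illustration.
rng = np.random.RandomState(0)
X = rng.uniform(0, 6, size=(80, 1))
y = np.sin(X[:, 0]) + rng.normal(scale=0.1, size=80)

params = AdaBoostRegressor().get_params()
print("has loss:", "loss" in params)            # regressor-specific parameter
print("has algorithm:", "algorithm" in params)  # not accepted by the regressor

# Fit one model per loss option and report the training R^2.
scores = {}
for loss in ("linear", "square", "exponential"):
    model = AdaBoostRegressor(n_estimators=50, learning_rate=1.0,
                              loss=loss, random_state=0)
    model.fit(X, y)
    scores[loss] = model.score(X, y)
    print(loss, round(scores[loss], 3))
```

Training scores alone do not say which loss generalises best; on real data the choice is better left to a validation procedure such as a grid search.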

(2) Single-step rolling forecast
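The two building blocks of this approach can be sketched on a toy series first: turning a series into lagged feature columns, and rolling the feature window forward by dropping the oldest value and appending the newest prediction. The toy series, the prev_k column names and the stand-in predict_next function (a plain mean of the window) are assumptions for illustration, not the AdaBoost model itself.

```python
import pandas as pd

# Toy series standing in for the monthly incidence column.
toy = pd.DataFrame({"incidence": [10.0, 20.0, 30.0, 40.0, 50.0, 60.0]})

n_lags = 3
# Columns are created oldest -> newest: prev_3, prev_2, prev_1.
for k in range(n_lags, 0, -1):
    toy[f"prev_{k}"] = toy["incidence"].shift(k)
# The first n_lags rows have incomplete history and are dropped.
toy = toy.dropna().reset_index(drop=True)
print(toy)

def predict_next(window):
    """Stand-in for model.predict: the mean of the window (assumption)."""
    return sum(window) / len(window)

# Recursive forecast: start from the last observed window, then feed
# each prediction back in as the newest lag.
window = [40.0, 50.0, 60.0]
forecasts = []
for _ in range(3):
    pred = predict_next(window)
    forecasts.append(pred)
    window = window[1:] + [pred]  # drop oldest value, append prediction
print(forecasts)
```

Because each forecast is fed back as an input, errors can accumulate over the horizon; that is the trade-off of the recursive strategy.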

    import pandas as pd
    import numpy as np
    from sklearn.metrics import mean_absolute_error, mean_squared_error
    from sklearn.ensemble import AdaBoostRegressor
    from sklearn.model_selection import GridSearchCV

    data = pd.read_csv('data.csv')

    # Convert the time column to datetime
    data['time'] = pd.to_datetime(data['time'], format='%b-%y')

    # Number of lagged values used as input features
    lag_period = 6

    # Create the lag features. Note the naming: lag_6 is the most recent
    # value (shift 1) and lag_1 is the oldest (shift 6).
    for i in range(lag_period, 0, -1):
        data[f'lag_{i}'] = data['incidence'].shift(lag_period - i + 1)

    # Drop rows containing NaN
    data = data.dropna().reset_index(drop=True)

    # Split into training and validation sets
    train_data = data[(data['time'] >= '2004-01-01') & (data['time'] <= '2011-12-31')]
    validation_data = data[(data['time'] >= '2012-01-01') & (data['time'] <= '2012-12-31')]

    # Define the features (ordered oldest -> newest) and the target
    feature_cols = [f'lag_{i}' for i in range(1, lag_period + 1)]
    X_train = train_data[feature_cols]
    y_train = train_data['incidence']
    X_validation = validation_data[feature_cols]
    y_validation = validation_data['incidence']

    # Initialize the AdaBoostRegressor model
    adaboost_model = AdaBoostRegressor()

    # Define the parameter grid
    param_grid = {
        'n_estimators': [50, 100, 150],
        'learning_rate': [0.01, 0.05, 0.1, 0.5, 1],
        'loss': ['linear', 'square', 'exponential']
    }

    # Initialize and run the grid search
    grid_search = GridSearchCV(adaboost_model, param_grid, cv=5, scoring='neg_mean_squared_error')
    grid_search.fit(X_train, y_train)

    # Retrieve the best parameters
    best_params = grid_search.best_params_

    # Re-initialize the model with the best parameters and fit it on the training set
    best_adaboost_model = AdaBoostRegressor(**best_params)
    best_adaboost_model.fit(X_train, y_train)

    # Roll forward through the validation set one month at a time.
    # The first window holds observed values; after each step the oldest
    # value is dropped and the newest prediction is appended, so the
    # window always stays ordered oldest -> newest.
    window = list(X_validation.iloc[0])
    y_validation_pred = []
    for _ in range(len(X_validation)):
        pred = best_adaboost_model.predict(pd.DataFrame([window], columns=feature_cols))
        y_validation_pred.append(pred[0])
        window = window[1:] + [pred[0]]
    y_validation_pred = np.array(y_validation_pred)

    # Compute MAE, MAPE (as a fraction), MSE and RMSE on the validation set
    mae_validation = mean_absolute_error(y_validation, y_validation_pred)
    mape_validation = np.mean(np.abs((y_validation - y_validation_pred) / y_validation))
    mse_validation = mean_squared_error(y_validation, y_validation_pred)
    rmse_validation = np.sqrt(mse_validation)

    # Compute MAE, MAPE (as a fraction), MSE and RMSE on the training set
    y_train_pred = best_adaboost_model.predict(X_train)
    mae_train = mean_absolute_error(y_train, y_train_pred)
    mape_train = np.mean(np.abs((y_train - y_train_pred) / y_train))
    mse_train = mean_squared_error(y_train, y_train_pred)
    rmse_train = np.sqrt(mse_train)

    print("Train Metrics:", mae_train, mape_train, mse_train, rmse_train)
    print("Validation Metrics:", mae_validation, mape_validation, mse_validation, rmse_validation)

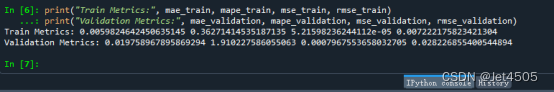

看结果:
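A note on the cross-validation used in the grid search: when cv is an integer, GridSearchCV applies unshuffled K-fold splits to a regressor, so some folds validate on months that precede part of their training data. A possible alternative, sketched below on synthetic data, is to pass a TimeSeriesSplit so that every validation fold lies after its training fold. The synthetic series, the reduced parameter grid and random_state=0 are assumptions made to keep the sketch fast and repeatable; they are not taken from the original post.

```python
import numpy as np
from sklearn.ensemble import AdaBoostRegressor
from sklearn.model_selection import GridSearchCV, TimeSeriesSplit

# Synthetic seasonal series (illustration only).
rng = np.random.RandomState(0)
t = np.arange(96)
series = 1.0 + 0.5 * np.sin(2 * np.pi * t / 12) + rng.normal(scale=0.05, size=96)

# Six lagged values per row, ordered oldest -> newest.
n_lags = 6
X = np.column_stack([series[k:len(series) - n_lags + k] for k in range(n_lags)])
y = series[n_lags:]

tscv = TimeSeriesSplit(n_splits=5)
# Every validation index comes after every training index in each fold.
ordered = all(train.max() < test.min() for train, test in tscv.split(X))
print("folds respect time order:", ordered)

param_grid = {"n_estimators": [50, 100], "loss": ["linear", "square"]}
search = GridSearchCV(AdaBoostRegressor(random_state=0), param_grid,
                      cv=tscv, scoring="neg_mean_squared_error")
search.fit(X, y)
print(search.best_params_)
```

The trade-off is that early folds train on very little data, which matters for a series of only 96 monthly points.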

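(3) Multi-step rolling forecast, vol. 1

AdaBoostRegressor expects the target y to be a one-dimensional array, so this approach, which needs a multi-column target, cannot be applied directly.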
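The restriction can be seen on synthetic data: fitting AdaBoostRegressor on a target with several columns raises an error. One possible workaround, which is not used in the original post, is scikit-learn's MultiOutputRegressor wrapper, which fits one independent AdaBoostRegressor per target column. The array shapes, n_estimators=20 and random_state=0 below are illustrative assumptions.

```python
import numpy as np
from sklearn.ensemble import AdaBoostRegressor
from sklearn.multioutput import MultiOutputRegressor

rng = np.random.RandomState(0)
X = rng.normal(size=(60, 6))  # 6 lag features (synthetic)
Y = rng.normal(size=(60, 3))  # 3 future steps as target columns

# A 2-D target with several columns is rejected by AdaBoostRegressor.
try:
    AdaBoostRegressor(random_state=0).fit(X, Y)
    rejected = False
except ValueError as exc:
    rejected = True
    print("ValueError:", exc)

# Workaround sketch: one AdaBoostRegressor per target column.
wrapped = MultiOutputRegressor(AdaBoostRegressor(n_estimators=20, random_state=0))
wrapped.fit(X, Y)
pred = wrapped.predict(X[:5])
print(pred.shape)
```

Each wrapped model is trained independently, so this amounts to a direct multi-step strategy rather than a single joint model.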

(4) Multi-step rolling forecast, vol. 2

The same limitation applies.

(5) Multi-step rolling forecast, vol. 3

    import pandas as pd
    import numpy as np
    from sklearn.ensemble import AdaBoostRegressor
    from sklearn.model_selection import GridSearchCV
    from sklearn.metrics import mean_absolute_error, mean_squared_error

    # Read and preprocess the data
    data = pd.read_csv('data.csv')
    data_y = pd.read_csv('data.csv')
    data['time'] = pd.to_datetime(data['time'], format='%b-%y')
    data_y['time'] = pd.to_datetime(data_y['time'], format='%b-%y')

    # n lag features; lag_n is the most recent value, lag_1 the oldest
    n = 6
    for i in range(n, 0, -1):
        data[f'lag_{i}'] = data['incidence'].shift(n - i + 1)
    data = data.dropna().reset_index(drop=True)

    train_data = data[(data['time'] >= '2004-01-01') & (data['time'] <= '2011-12-31')]
    X_train = train_data[[f'lag_{i}' for i in range(1, n+1)]]

    # m models, one per forecast horizon: for the same lag window,
    # model i is trained on the target shifted i months ahead
    m = 3
    X_train_list = []
    y_train_list = []
    for i in range(m):
        X_temp = X_train
        y_temp = data_y['incidence'].iloc[n + i:len(data_y) - m + 1 + i]
        X_train_list.append(X_temp)
        y_train_list.append(y_temp)

    # Trim the last m-1 rows so every horizon's target stays inside the training period
    for i in range(m):
        X_train_list[i] = X_train_list[i].iloc[:-(m-1)]
        y_train_list[i] = y_train_list[i].iloc[:len(X_train_list[i])]

    # Model training: one grid search and one final model per horizon
    param_grid = {
        'n_estimators': [50, 100, 150],
        'learning_rate': [0.01, 0.05, 0.1, 0.5, 1],
        'loss': ['linear', 'square', 'exponential']
    }

    best_ada_models = []
    for i in range(m):
        grid_search = GridSearchCV(AdaBoostRegressor(), param_grid, cv=5, scoring='neg_mean_squared_error')
        grid_search.fit(X_train_list[i], y_train_list[i])
        best_ada_model = AdaBoostRegressor(**grid_search.best_params_)
        best_ada_model.fit(X_train_list[i], y_train_list[i])
        best_ada_models.append(best_ada_model)

    # The validation set starts one month after the last training month
    validation_start_time = train_data['time'].iloc[-1] + pd.DateOffset(months=1)
    validation_data = data[data['time'] >= validation_start_time]
    X_validation = validation_data[[f'lag_{i}' for i in range(1, n+1)]]

    y_validation_pred_list = [model.predict(X_validation) for model in best_ada_models]
    y_train_pred_list = [model.predict(X_train_list[i]) for i, model in enumerate(best_ada_models)]

    def concatenate_predictions(pred_list):
        # Row j of model i forecasts month j + i, so a new block of m
        # forecasts starts every m rows; stepping j by 1 would misalign them.
        concatenated = []
        for j in range(0, len(pred_list[0]), m):
            for i in range(m):
                concatenated.append(pred_list[i][j])
        return concatenated

    y_validation_pred = np.array(concatenate_predictions(y_validation_pred_list))[:len(validation_data['incidence'])]
    y_train_pred = np.array(concatenate_predictions(y_train_pred_list))[:len(train_data['incidence']) - m + 1]

    # Validation metrics: MAE, MAPE (as a fraction), MSE, RMSE
    mae_validation = mean_absolute_error(validation_data['incidence'], y_validation_pred)
    mape_validation = np.mean(np.abs((validation_data['incidence'] - y_validation_pred) / validation_data['incidence']))
    mse_validation = mean_squared_error(validation_data['incidence'], y_validation_pred)
    rmse_validation = np.sqrt(mse_validation)
    print("Validation set:", mae_validation, mape_validation, mse_validation, rmse_validation)

    # Training metrics: MAE, MAPE (as a fraction), MSE, RMSE
    mae_train = mean_absolute_error(train_data['incidence'][:-(m-1)], y_train_pred)
    mape_train = np.mean(np.abs((train_data['incidence'][:-(m-1)] - y_train_pred) / train_data['incidence'][:-(m-1)]))
    mse_train = mean_squared_error(train_data['incidence'][:-(m-1)], y_train_pred)
    rmse_train = np.sqrt(mse_train)
    print("Training set:", mae_train, mape_train, mse_train, rmse_train)

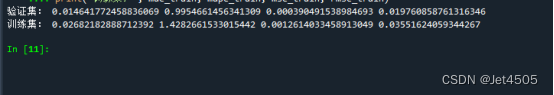

结果:
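The way per-horizon forecasts line up in time is easy to get wrong, so here is a small self-contained sketch. It assumes m direct models where row j of model i forecasts time step j + i, and it fakes the models with perfect predictions so the alignment can be checked by eye. The helper name stitch_direct_forecasts and the toy arrays are hypothetical.

```python
import numpy as np

def stitch_direct_forecasts(pred_list, m):
    """Interleave forecasts from m direct models into one time-ordered array.

    pred_list[i][j] is assumed to be model i's forecast for time step j + i.
    A block of m forecasts is taken from every m-th row.
    """
    stitched = []
    for j in range(0, len(pred_list[0]), m):
        for i in range(m):
            stitched.append(pred_list[i][j])
    return np.array(stitched)

m, n_rows = 3, 6
# Fake "perfect" models: model i at row j returns the time index j + i.
pred_list = [np.arange(n_rows) + i for i in range(m)]

aligned = stitch_direct_forecasts(pred_list, m)
print(aligned)  # time indices covered, in order

# For contrast: taking a block from every row repeats and skips time steps.
naive = np.array([pred_list[i][j] for j in range(n_rows) for i in range(m)])[:n_rows]
print(naive)
```

With the block start stepping by m, each time step is covered exactly once; stepping by 1 pairs forecasts with the wrong months, which distorts every error metric computed afterwards.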

III. Data

Link: https://pan.baidu.com/s/1EFaWfHoG14h15KCEhn1STg?pwd=q41n

Extraction code: q41n

Original article: https://blog.csdn.net/qq_30452897/article/details/133486453