@

集成Spark开发

Spark编程读写示例

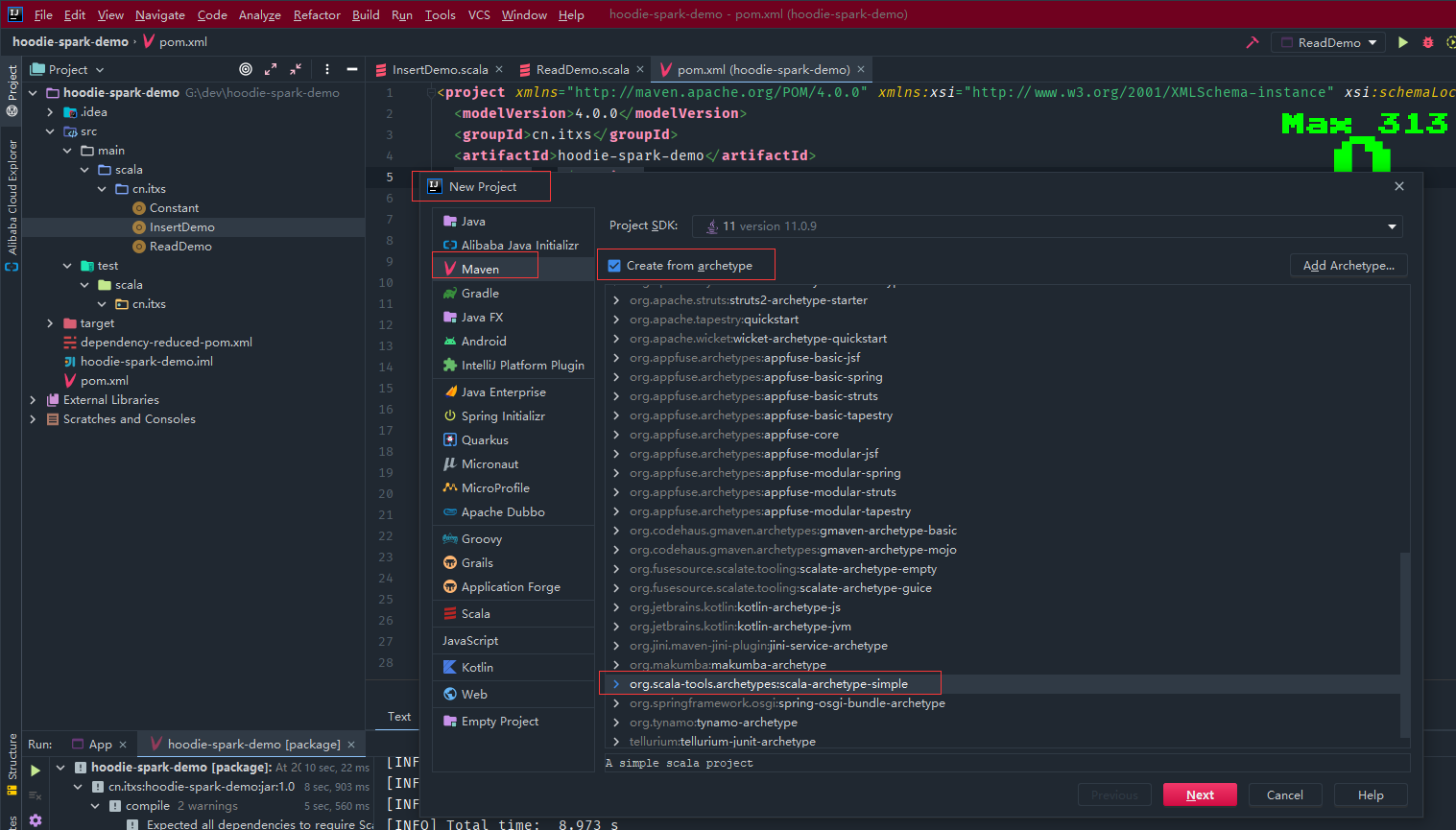

通过IDE如Idea编程实质上和前面的spark-shell和spark-sql相似,其他都是Spark编程的知识,下面以scala语言为示例,idea新建scala的maven项目

pom文件添加如下依赖

4.0.0

cn.itxs

hoodie-spark-demo

1.0

UTF-8

2.12.10

2.12

3.3.0

0.12.1

3.3.4

org.scala-lang

scala-library

${scala.version}

org.apache.spark

spark-core_${scala.binary.version}

${spark.version}

provided

org.apache.spark

spark-sql_${scala.binary.version}

${spark.version}

provided

org.apache.spark

spark-hive_${scala.binary.version}

${spark.version}

provided

org.apache.hadoop

hadoop-client

${hadoop.version}

provided

org.apache.hudi

hudi-spark3.3-bundle_${scala.binary.version}

${hoodie.version}

provided

org.apache.maven.plugins

maven-compiler-plugin

3.10.1

1.8

1.8

${project.build.sourceEncoding}

org.scala-tools

maven-scala-plugin

2.15.2

compile

testCompile

org.apache.maven.plugins

maven-shade-plugin

3.2.4

package

shade

*:*

META-INF/*.SF

META-INF/*.DSA

META-INF/*.RSA

创建常量对象

object Constant {

val HUDI_STORAGE_PATH = "hdfs://192.168.5.53:9000/tmp/"

}

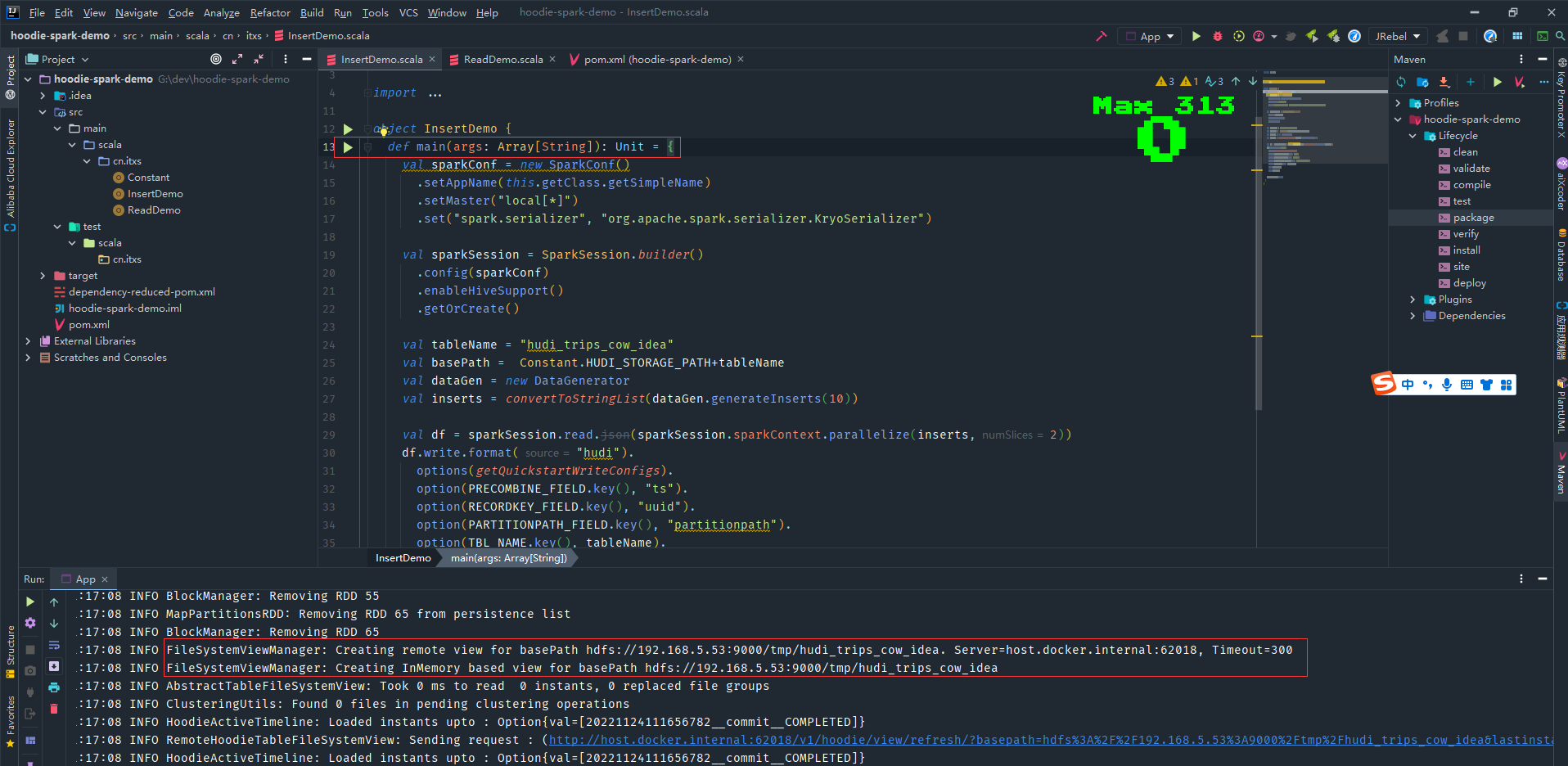

插入hudi数据

package cn.itxs

import org.apache.spark.sql.SparkSession

import org.apache.spark.SparkConf

import org.apache.hudi.QuickstartUtils._

import scala.collection.JavaConversions._

import org.apache.spark.sql.SaveMode._

import org.apache.hudi.DataSourceWriteOptions._

import org.apache.hudi.config.HoodieWriteConfig._

object InsertDemo {

def main(args: Array[String]): Unit = {

val sparkConf = new SparkConf()

.setAppName(this.getClass.getSimpleName)

.setMaster("local[*]")

.set("spark.serializer", "org.apache.spark.serializer.KryoSerializer")

val sparkSession = SparkSession.builder()

.config(sparkConf)

.enableHiveSupport()

.getOrCreate()

val tableName = "hudi_trips_cow_idea"

val basePath = Constant.HUDI_STORAGE_PATH+tableName

val dataGen = new DataGenerator

val inserts = convertToStringList(dataGen.generateInserts(10))

val df = sparkSession.read.json(sparkSession.sparkContext.parallelize(inserts,2))

df.write.format("hudi").

options(getQuickstartWriteConfigs).

option(PRECOMBINE_FIELD.key(), "ts").

option(RECORDKEY_FIELD.key(), "uuid").

option(PARTITIONPATH_FIELD.key(), "partitionpath").

option(TBL_NAME.key(), tableName).

mode(Overwrite).

save(basePath)

sparkSession.close()

}

}

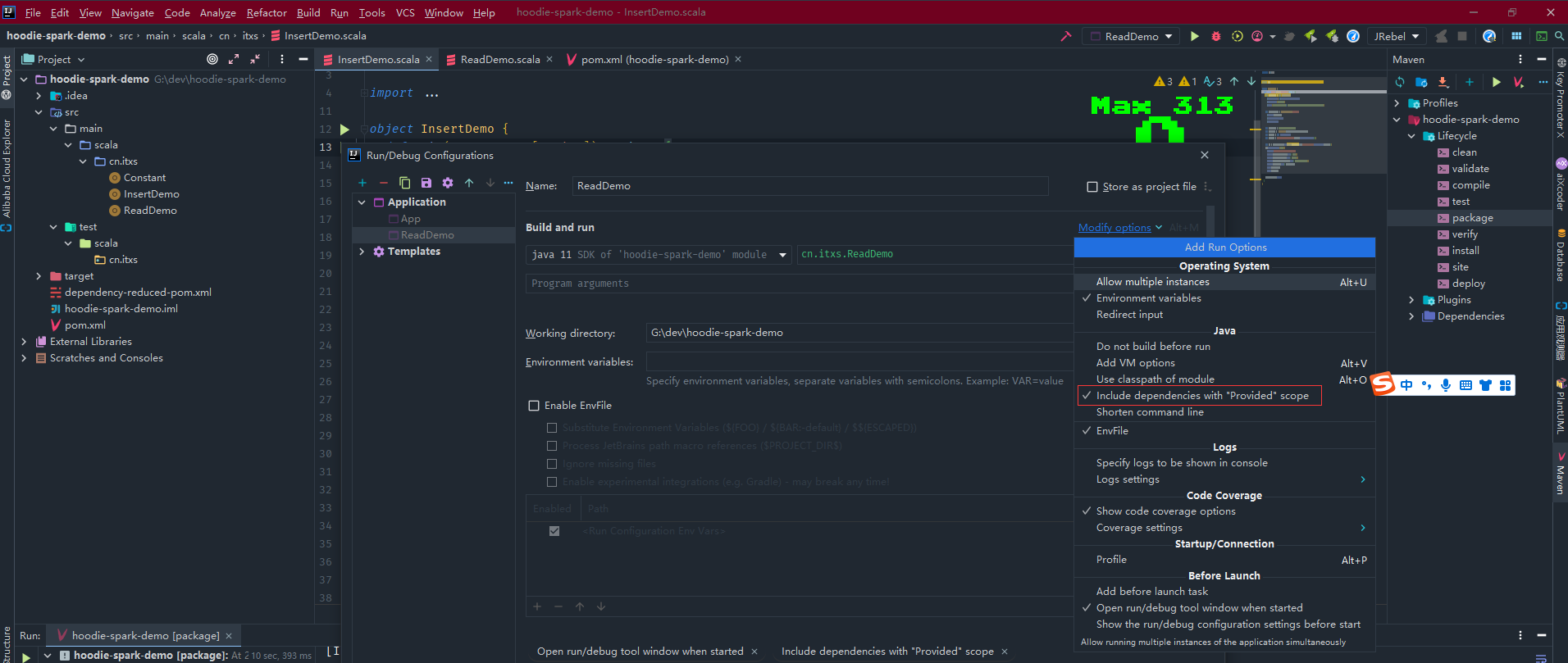

由于依赖中scope是配置为provided,因此运行配置中勾选下面这项

运行InsertDemo程序写入hudi数据

运行ReadDemo程序读取hudi数据

通过mvn clean package打包后上传运行

spark-submit \

--class cn.itxs.ReadDemo \

/home/commons/spark-3.3.0-bin-hadoop3/appjars/hoodie-spark-demo-1.0.jar

DeltaStreamer

HoodieDeltaStreamer实用程序(hudi-utilities-bundle的一部分)提供了从不同源(如DFS或Kafka)中获取的方法,具有以下功能。

- 从Kafka的新事件,从Sqoop的增量导入或输出HiveIncrementalPuller或DFS文件夹下的文件。

- 支持json, avro或自定义记录类型的传入数据。

- 管理检查点、回滚和恢复。

- 利用来自DFS或Confluent模式注册中心的Avro模式。

- 支持插入转换。

# 拷贝hudi-utilities-bundle_2.12-0.12.1.jar到spark的jars目录

cp /home/commons/hudi-release-0.12.1/packaging/hudi-utilities-bundle/target/hudi-utilities-bundle_2.12-0.12.1.jar jars/

# 查看帮助文档,参数非常多,可以在有需要使用的时候查阅

spark-submit --class org.apache.hudi.utilities.deltastreamer.HoodieDeltaStreamer /home/commons/spark-3.3.0-bin-hadoop3/jars/hudi-utilities-bundle_2.12-0.12.1.jar --help

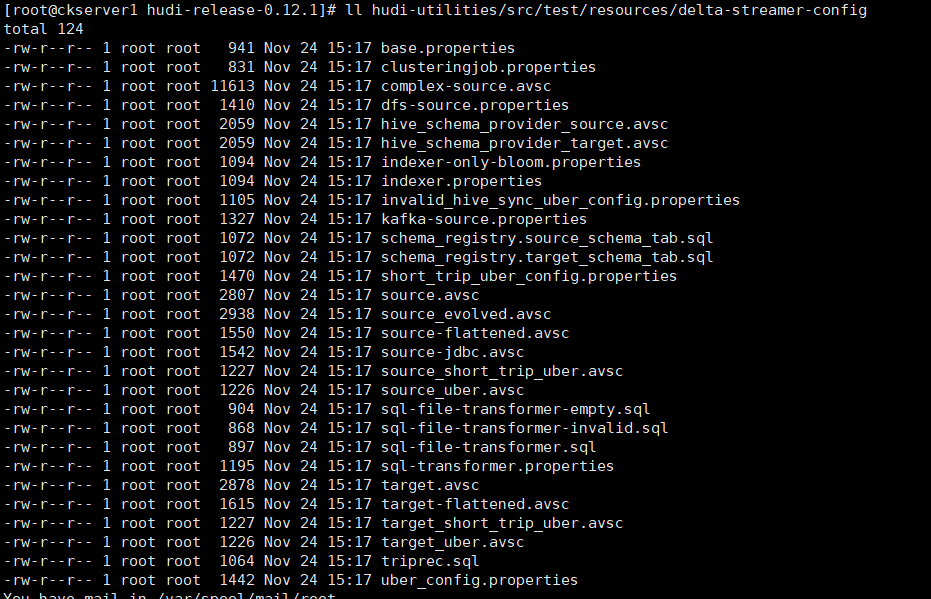

该工具采用层次结构组成的属性文件,并具有提取数据、密钥生成和提供模式的可插入接口。在hudi-下提供了从kafka和dfs中摄取的示例配置

接下里以File Based Schema Provider和JsonKafkaSoiurce为示例演示如何使用

# 创建topic

bin/kafka-topics.sh --zookeeper zk1:2181,zk2:2181,zk3:2181 --create --partitions 1 --replication-factor 1 --topic data_test

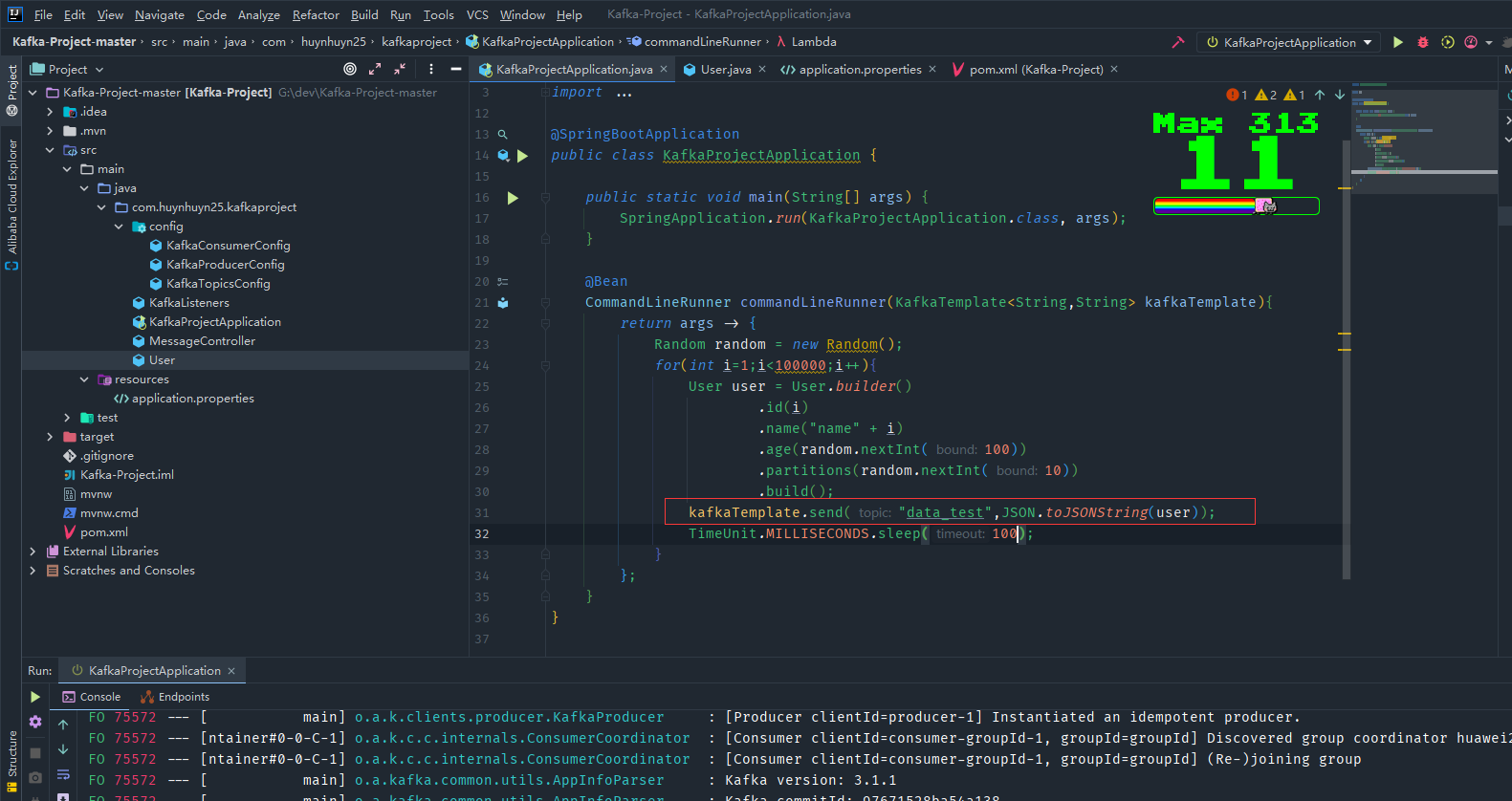

然后编写demo程序持续向这个kafka的topic发送消息

# 创建一个配置文件目录

mkdir /home/commons/hudi-properties

# 拷贝示例配置文件

cp hudi-utilities/src/test/resources/delta-streamer-config/kafka-source.properties /home/commons/hudi-properties/

cp hudi-utilities/src/test/resources/delta-streamer-config/base.properties /home/commons/hudi-properties/

定义avro所需的schema文件包括source和target,创建source文件 vim source-json-schema.avsc

{

"type" : "record",

"name" : "Profiles",

"fields" : [

{

"name" : "id",

"type" : "long"

}, {

"name" : "name",

"type" : "string"

}, {

"name" : "age",

"type" : "int"

}, {

"name" : "partitions",

"type" : "int"

}

]

}

拷贝一份为target文件

cp source-json-schema.avsc target-json-schema.avsc

修改kafka-source.properties的配置如下

include=hdfs://hadoop2:9000/hudi-properties/base.properties

# Key fields, for kafka example

hoodie.datasource.write.recordkey.field=id

hoodie.datasource.write.partitionpath.field=partitions

# schema provider configs

#hoodie.deltastreamer.schemaprovider.registry.url=http://localhost:8081/subjects/impressions-value/versions/latest

hoodie.deltastreamer.schemaprovider.source.schema.file=hdfs://hadoop2:9000/hudi-properties/source-json-schema.avsc

hoodie.deltastreamer.schemaprovider.target.schema.file=hdfs://hadoop2:9000/hudi-properties/target-json-schema.avsc

# Kafka Source

#hoodie.deltastreamer.source.kafka.topic=uber_trips

hoodie.deltastreamer.source.kafka.topic=data_test

#Kafka props

bootstrap.servers=kafka1:9092,kafka2:9092,kafka3:9092

auto.offset.reset=earliest

#schema.registry.url=http://localhost:8081

group.id=mygroup

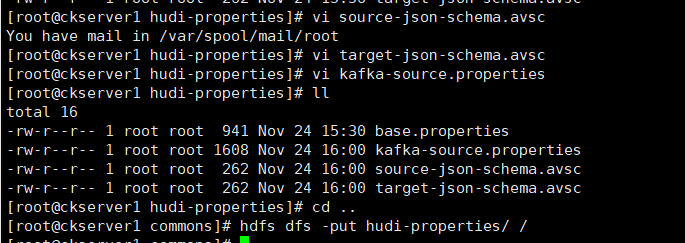

将本地hudi-properties文件夹上传到HDFS

cd ..

hdfs dfs -put hudi-properties/ /

# 运行导入命令

spark-submit \

--class org.apache.hudi.utilities.deltastreamer.HoodieDeltaStreamer \

/home/commons/spark-3.3.0-bin-hadoop3/jars/hudi-utilities-bundle_2.12-0.12.1.jar \

--props hdfs://hadoop2:9000/hudi-properties/kafka-source.properties \

--schemaprovider-class org.apache.hudi.utilities.schema.FilebasedSchemaProvider \

--source-class org.apache.hudi.utilities.sources.JsonKafkaSource \

--source-ordering-field id \

--target-base-path hdfs://hadoop2:9000/tmp/hudi/user_test \

--target-table user_test \

--op BULK_INSERT \

--table-type MERGE_ON_READ

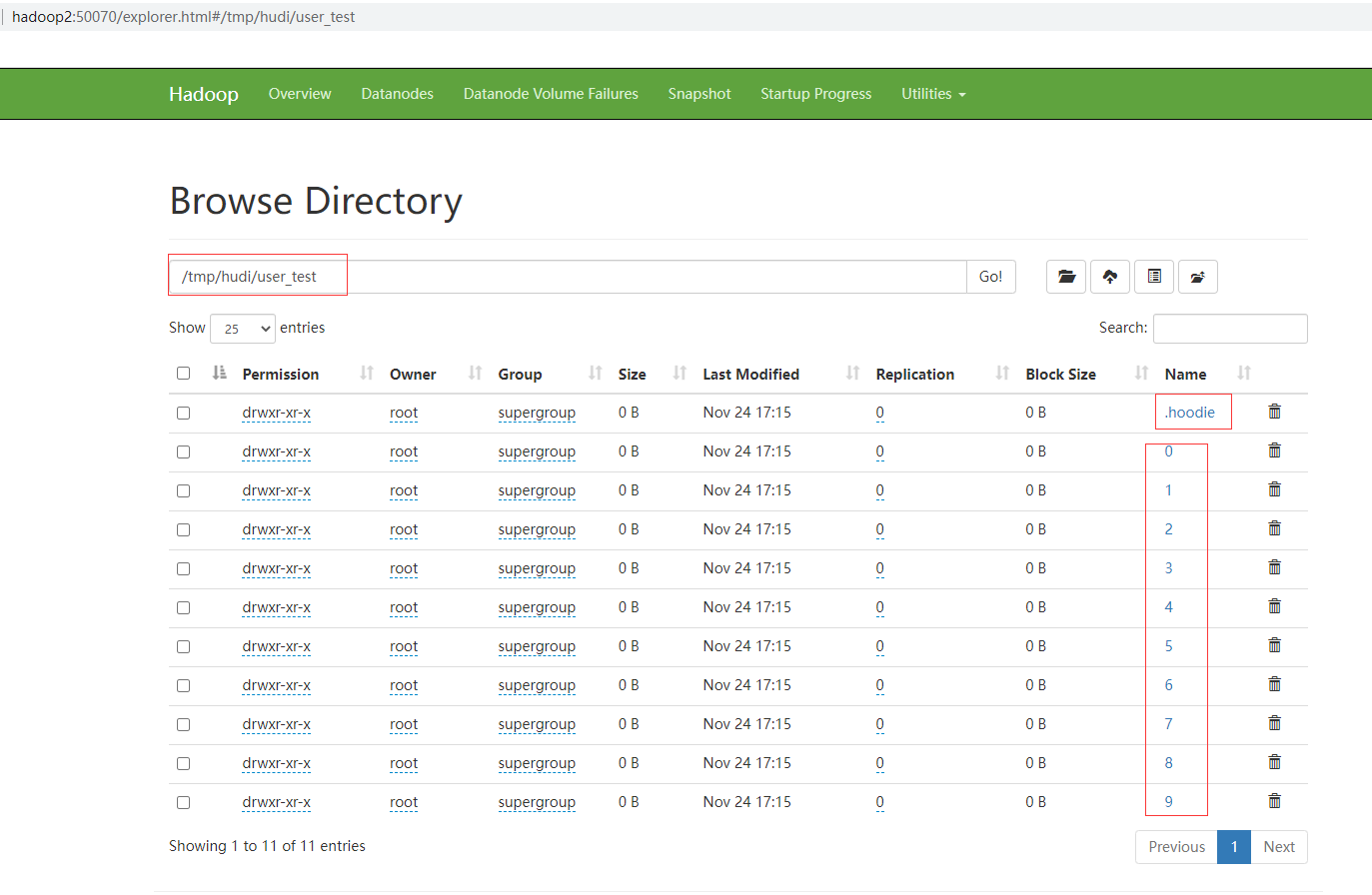

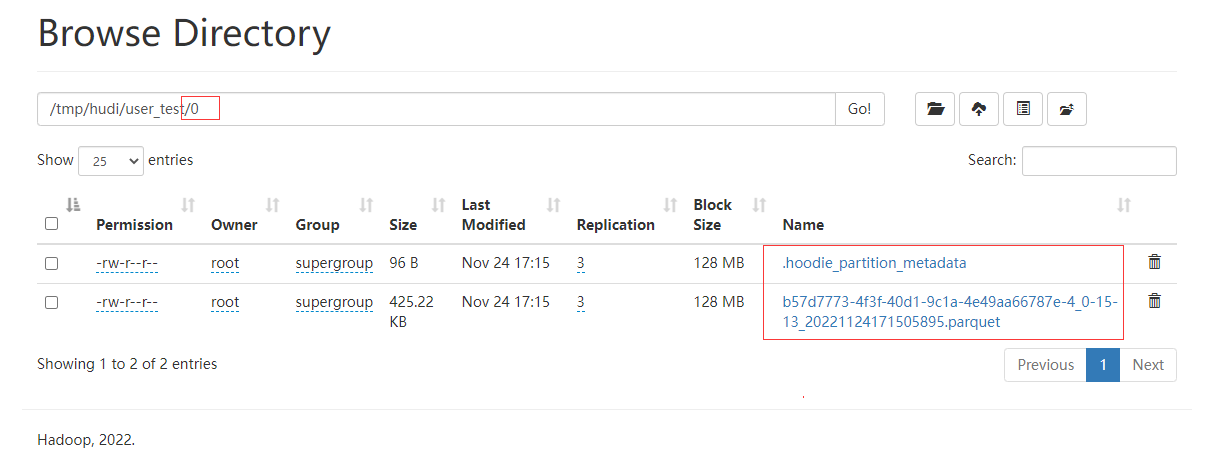

查看hdfs目录已经有表目录和分区目录

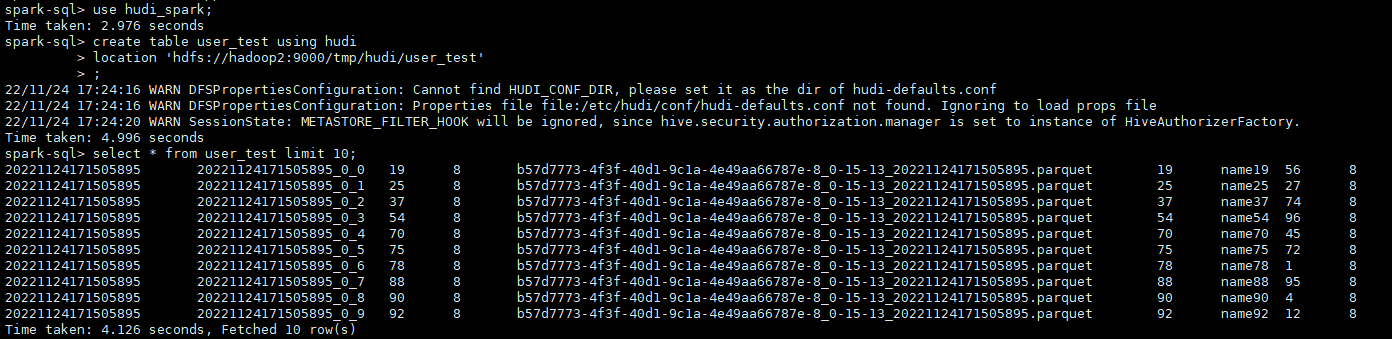

通过spark-sql查询从kafka摄取的数据

use hudi_spark;

create table user_test using hudi

location 'hdfs://hadoop2:9000/tmp/hudi/user_test';

select * from user_test limit 10;

集成Flink

环境准备

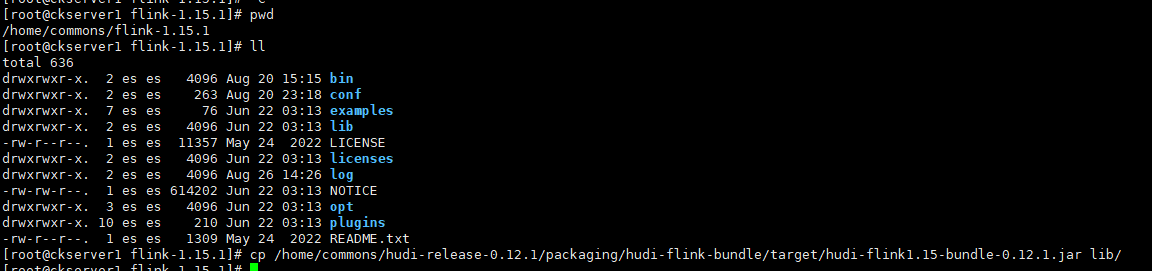

# 解压进入flink目录,这里我就用之前flink的环境,详细可以查看之前关于flink的文章

cd /home/commons/flink-1.15.1

# 拷贝编译好的jar到flink的lib目录

cp /home/commons/hudi-release-0.12.1/packaging/hudi-flink-bundle/target/hudi-flink1.15-bundle-0.12.1.jar lib/

# 拷贝guava包,解决依赖冲突

cp /home/commons/hadoop/share/hadoop/common/lib/guava-27.0-jre.jar lib/

# 配置hadoop环境变量和启动hadoop

export HADOOP_CLASSPATH=`$HADOOP_HOME/bin/hadoop classpath`

sql-clent使用

启动

修改配置文件 vi conf/flink-conf.yaml

classloader.check-leaked-classloader: false

taskmanager.numberOfTaskSlots: 4

state.backend: rocksdb

state.checkpoints.dir: hdfs://hadoop2:9000/checkpoints/flink

state.backend.incremental: true

execution.checkpointing.interval: 5min

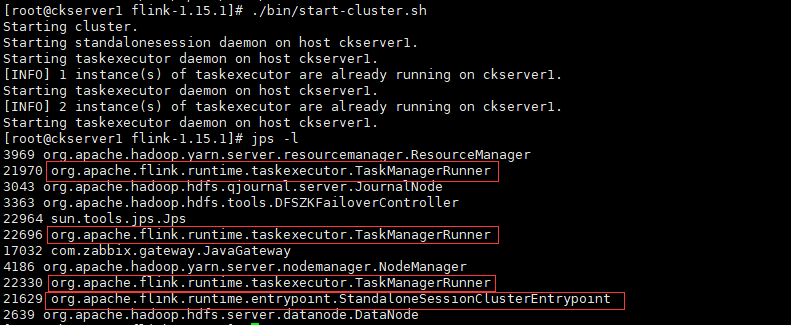

- local 模式

修改workers文件,也可以多配制几个(伪分布式或完全分布式),官方提供示例是4个

localhost

localhost

localhost

# 在本机上启动三个TaskManagerRunner和一个Standalone伪分布式集群

./bin/start-cluster.sh

# 查看进程确认

jps -l

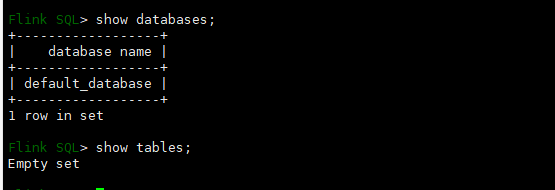

# 启动内嵌的flink sql客户端

./bin/sql-client.sh embedded

show databases;

show tables;

-

yarn-session 模式

- 解决依赖冲突问题

# 拷贝jar到flink的lib目录 cp /home/commons/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-client-core-3.3.4.jar lib/- 启动yarn-session

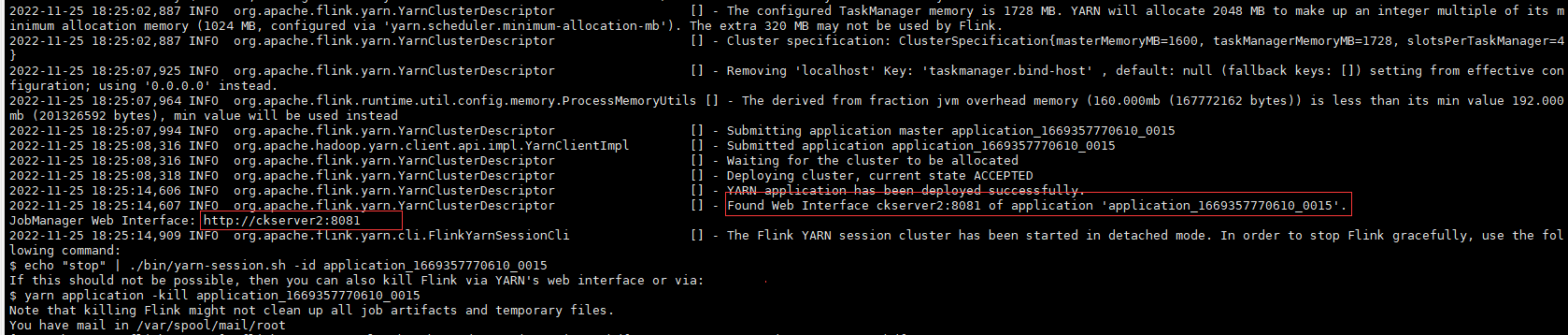

# 先停止上面启动Standalone伪分布式集群 ./bin/stop-cluster.sh # 启动yarn-session分布式集群 ./bin/yarn-session.sh --detached

查看yarn上已经有一个Flink session集群job, ID为application_1669357770610_0015

查看Flink的Web UI可用TaskSlots为0,可确认已切换为yarn管理资源非分配

- 启动sql-client

# 由于使用内嵌模式管理元数据,元数据是保存在内存中,关闭sql-client后则元数据也会消失,生产环境建议使用如Hive元数据管理方式,后面再做配置 ./bin/sql-client.sh embedded -s yarn-session show databases; show tables;

插入数据

CREATE TABLE t1(

uuid VARCHAR(20),

name VARCHAR(10),

age INT,

ts TIMESTAMP(3),

`partition` VARCHAR(20),

PRIMARY KEY(uuid) NOT ENFORCED

)

PARTITIONED BY (`partition`)

WITH (

'connector' = 'hudi',

'path' = 'hdfs://hadoop1:9000/tmp/hudi_flink/t1',

'table.type' = 'MERGE_ON_READ' -- 创建一个MERGE_ON_READ表,默认情况下是COPY_ON_WRITE表

);

-- 插入数据

INSERT INTO t1 VALUES

('id1','Danny',23,TIMESTAMP '2022-11-25 00:00:01','par1'),

('id2','Stephen',33,TIMESTAMP '2022-11-25 00:00:02','par1'),

('id3','Julian',53,TIMESTAMP '2022-11-25 00:00:03','par2'),

('id4','Fabian',31,TIMESTAMP '2022-11-25 00:00:04','par2'),

('id5','Sophia',18,TIMESTAMP '2022-11-25 00:00:05','par3'),

('id6','Emma',20,TIMESTAMP '2022-11-25 00:00:06','par3'),

('id7','Bob',44,TIMESTAMP '2022-11-25 00:00:07','par4'),

('id8','Han',56,TIMESTAMP '2022-11-25 00:00:08','par4');

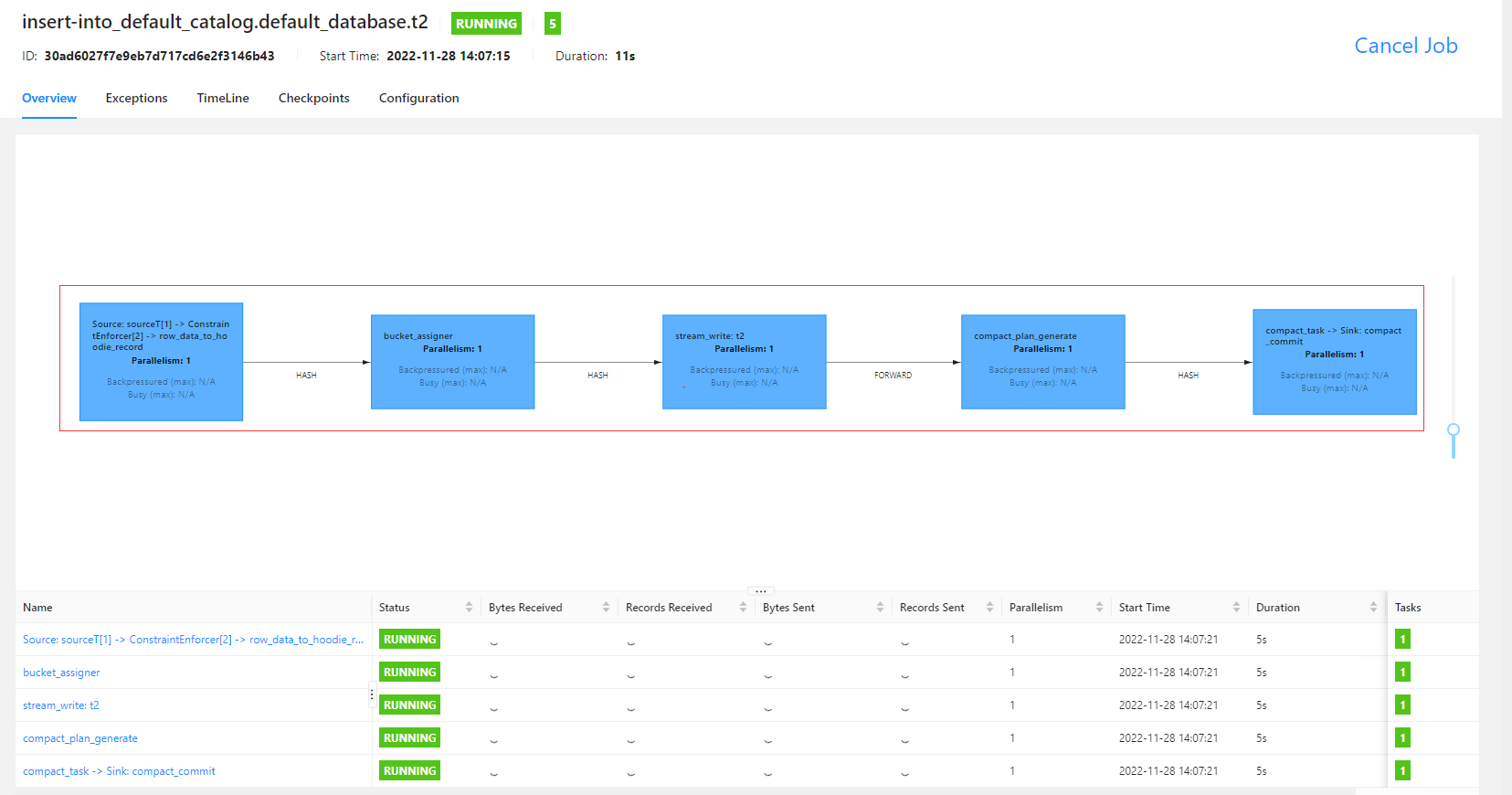

查看Flink Web UI Job的信息

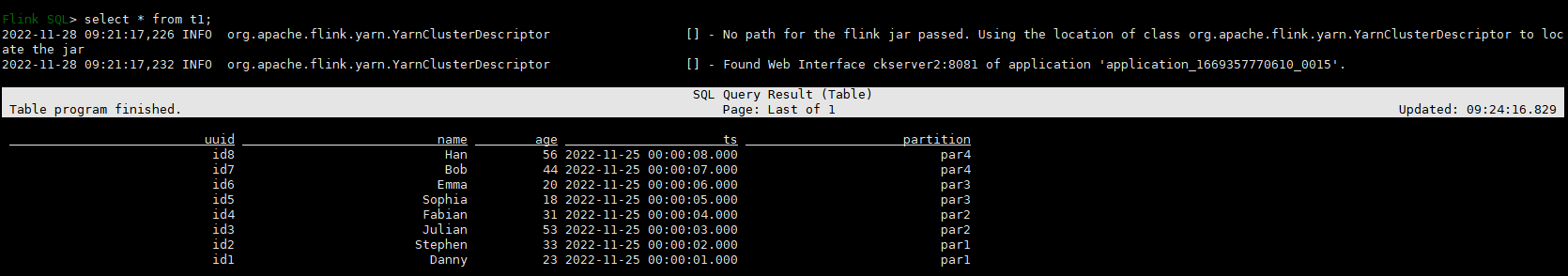

# 查询数据

select * from t1;

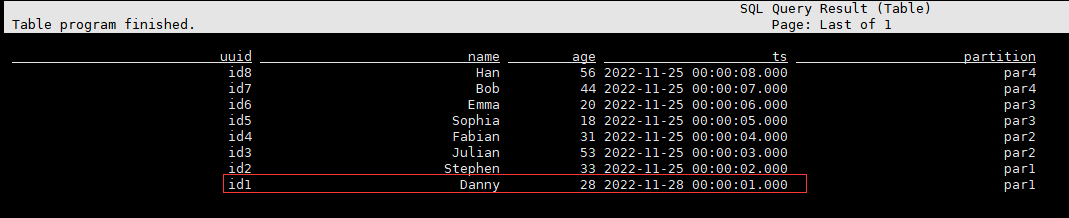

# 更新数据

INSERT INTO t1 VALUES

('id1','Danny',28,TIMESTAMP '2022-11-25 00:00:01','par1');

# 查询数据

select * from t1;

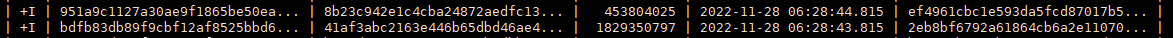

流式读取

-- 设置结果模式为tableau,在CLI中直接显示结果;另外还有table和changelog;changelog模式可以获取+I,-U之类动作数据;

set 'sql-client.execution.result-mode' = 'tableau';

CREATE TABLE sourceT (

uuid varchar(20),

name varchar(10),

age int,

ts timestamp(3),

`partition` varchar(20),

PRIMARY KEY(uuid) NOT ENFORCED

) WITH (

'connector' = 'datagen',

'rows-per-second' = '1'

);

CREATE TABLE t2 (

uuid varchar(20),

name varchar(10),

age int,

ts timestamp(3),

`partition` varchar(20),

PRIMARY KEY(uuid) NOT ENFORCED

)

WITH (

'connector' = 'hudi',

'path' = 'hdfs://hadoop1:9000/tmp/hudi_flink/t2',

'table.type' = 'MERGE_ON_READ',

'read.streaming.enabled' = 'true',

'read.streaming.check-interval' = '4'

);

insert into t2 select * from sourceT;

select * from t2;

Bucket索引

在0.11.0增加了一种高效、轻量级的索引类型bucket index,其为字节贡献回馈给hudi社区。

- Bucket Index是一种Hash分配方式,根据指定的索引字段,计算hash值,然后结合Bucket个数,均匀分配到具体的文件中。Bucket Index支持大数据量场景下的更新,Bucket Index也可以对数据进行分桶存储,但是对于桶数的计算是需要根据当前数据量的大小进行评估的,如果后续需要re-hash的话成本也会比较高。在这里我们预计通过建立Extensible Hash Index来提高哈希索引的可扩展能力。

- 要使用此索引,请将索引类型设置为BUCKET并设置hoodie.storage.layout.partitioner.class为

org.apache.hudi.table.action.commit.SparkBucketIndexPartitioner。对于 Flink,设置index.type=BUCKET. - 该方式相比于BloomIndex在元素定位性能高很多,缺点是Bucket个数无法动态扩展。另外Bucket不适合于COW表,否则会导致写放大更严重。

- 实时入湖写入的性能要求高的场景建议采用Bucket索引。

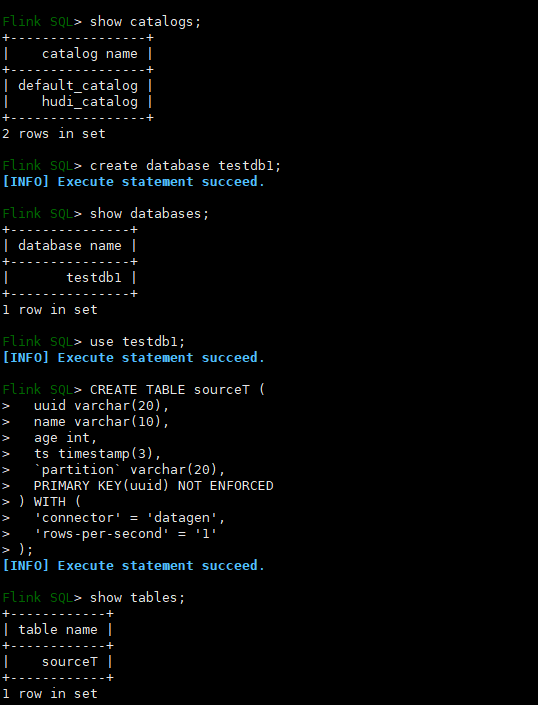

Hudi Catalog

前面基于内容管理hudi元数据的方式每次重启sql客户端就丢掉了,Hudi Catalog则是可以持久化元数据;Hudi Catalog支持多种模式,包括dfs和hms,hudi还可以直接集群hive使用,后续再一步步演示,现在先简单看下dfs模式的Hudi Catalog,先添加启动sql文件,vim conf/sql-client-init.sql

create catalog hudi_catalog

with(

'type' = 'hudi',

'mode' = 'dfs',

'catalog.path'='/tmp/hudi_catalog'

);

use catalog hudi_catalog;

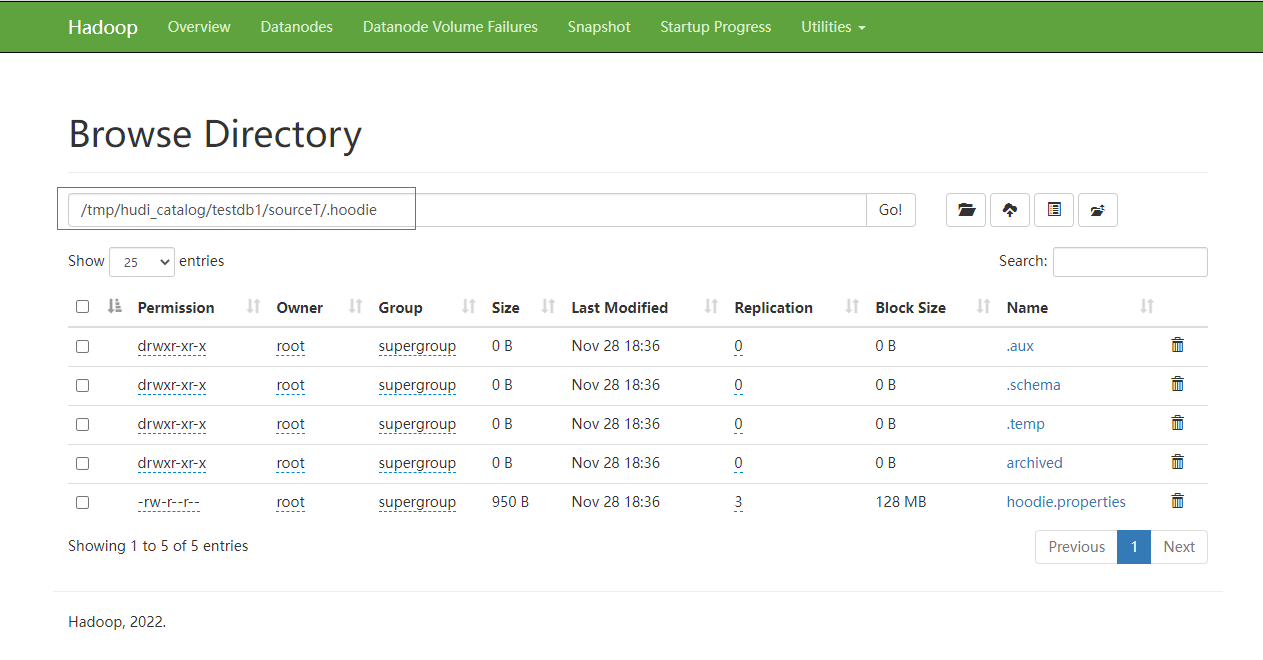

创建目录并启动,建表测试

hdfs dfs -mkdir /tmp/hudi_catalog

./bin/sql-client.sh embedded -i conf/sql-client-init.sql -s yarn-session

查看hdfs的数据如下,退出客户端后重新登录客户端还可以查到上面的hudi_catalog及其库和表的数据。

本人博客网站IT小神 www.itxiaoshen.com