-

hadoop2.8.2分布式集群实战

环境

CentOS6.5+jdk1.8+Hadoop2.8.2;

概述

本文档搭建三台hadoop的集群,其中一台为Master,两台为Slaves。

Master上的进程:NameNode,SecondaryNameNode,ResourceManager。

Slaves上的进程:DataNode,NodeManager。准备环境

设置hostname

我们定义三台服务器的host那么为hadoop1,hadoop2,hadoop3。这样在下面的配置中我们就使用hostname来代替ip,更加一目了然。

服务器1命令如下:[root@chu home]# sudo hostname hadoop1 [root@hadoop1 home]#- 1

- 2

然后分别在另外两台服务器上,执行hostname hadoop2和hostname hadoop3。

这只是临时的修改hostname,重启之后就失效了,如果你想永久的修改hostname,请继续修改/etc/sysconfig/network文件中的HOSTNAME属性的值。修改hosts文件

打开/etc/hosts文件,在末尾添加下面内容:

192.168.1.235 hadoop1 192.168.1.237 hadoop2 192.168.1.239 hadoop3- 1

- 2

- 3

192.168.1.235,192.168.1.237,192.168.1.239是三台服务器的ip,请正确填写你的服务器ip。

设置服务器之间无密码通信

分别在三台服务器上执行下面的命令:

ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa- 1

如下所示:

[root@hadoop2 hadoop]# ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa Generating public/private rsa key pair. /root/.ssh/id_rsa already exists. Overwrite (y/n)? y Your identification has been saved in /root/.ssh/id_rsa. Your public key has been saved in /root/.ssh/id_rsa.pub. The key fingerprint is: 7e:52:41:43:9d:07:a8:b2:15:07:86:1c:0d:f5:8f:59 root@hadoop2 The key's randomart image is: +--[ RSA 2048]----+ | .o*+o+o.o | | o.ooo.o . | | +o E. | | . o * | | +S + . | | .. . | | o . | | o | | | +-----------------+- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

此命令会在/root/.ssh目录下生成私钥和公钥文件,如下所示:

[root@hadoop2 home]# ll /root/.ssh total 16 -rw-------. 1 root root 1182 Nov 2 02:17 authorized_keys -rw-------. 1 root root 1675 Nov 2 01:47 id_rsa -rw-r--r--. 1 root root 394 Nov 2 01:47 id_rsa.pub -rw-r--r--. 1 root root 1197 Nov 1 01:57 known_hosts [root@hadoop2 home]#- 1

- 2

- 3

- 4

- 5

- 6

- 7

首先把hadoop2和hadoop3服务器上的公钥拷贝到hadoop1的/root/.ssh目录下,重命名为hadoop1.pub和hadoop2.pub。这里使用scp命令,过程之中需要你输入目标服务器密码。当然你可以其他方法拷贝,只要符合要求就行,

进入hadoop2服务器,执行下面命令:[root@hadoop2 home]# scp /root/.ssh/id_rsa.pub hadoop1:/root/.ssh/hadoop2.pub- 1

进入hadoop3服务器,执行下面命令:

[root@hadoop3 home]# scp /root/.ssh/id_rsa.pub hadoop1:/root/.ssh/hadoop3.pub- 1

然后再hadoop1服务器中查看.ssh中的文件如下:

[root@hadoop1 home]# ll /root/.ssh total 24 -rw-------. 1 root root 1182 Nov 1 17:59 authorized_keys -rw-r--r--. 1 root root 394 Nov 1 17:57 hadoop2.pub -rw-r--r--. 1 root root 394 Nov 1 17:57 hadoop3.pub -rw-------. 1 root root 1675 Nov 1 17:46 id_rsa -rw-r--r--. 1 root root 394 Nov 1 17:46 id_rsa.pub -rw-r--r--. 1 root root 2370 Oct 31 18:41 known_hosts- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

在hadoop1服务器上将id_rsa.pub,hadoop2.pub,hadoop3.pub都写入认证文件authorized_keys中。命令如下:

[root@hadoop1 home]# cd /root/.ssh [root@hadoop1 .ssh]# cat *.pub > authorized_keys [root@hadoop1 .ssh]# cat authorized_keys ssh-rsa AAAAB3NzaC1yc2EAAAABIwAAAQEAp/r5sJ5XXrUPncNC9n6lOkcJanNuu9KX9nDMVMw6i0Q0Lq64mib3n8HtSuh6ytc1zaCA3nP3YApofcf7rM406IlxFcrNAA77UfMTw7EjyAhwpaN/045/MRd/yklqEDvtzeQSaWfzns3WFrc3ELF41cWh2k6wR9MCdsJyUfUG7SukCw3BRzHvqFhBV2sMnCzGLUxnAvOklNqmLtQ9LbWKOjv47GQBrMHu16awwru5frSlcnbO0pJa+c/enri2Sm6LfeskqyOlDeTgcdXh/97hqAgAetMh893an0X9hlqoa6zq6ybhOmgCSDYCD7RpzQpoB+o4qWzkGEixopIQ8otjbw== root@hadoop2 ssh-rsa AAAAB3NzaC1yc2EAAAABIwAAAQEA5EbNzzX41YXY0XFU24gqypU8dQqYHfRRJdUBAkf1AGc6S0K+FMaVdlLhWvWDE5+4nVKNQmXe22cRDLel/9PqnNStcRBnHQazKEICNN11FnuixMZKkDcxx5Ikcv01ToGf3KBupFgxnGPvrpVOUyWZ8TH4JVJNKuPA9AbWRIvpdZ7Y04OYLphjduGQq+8zDuwlPn4epEHXtIaLHomdI9Rt4Qhufq8c6ZnwC3DsR8r1XTX0x+nngpgKMyspt3h7tGysJr4nfnG5gt68L3X8H5Yl0hLuxPJqDEORVRTFm3ag/HV1UR+BXpOBeYjDsMKKLYebBVivdAcmWJhsSlhvS5Q2Xw== root@hadoop3 ssh-rsa AAAAB3NzaC1yc2EAAAABIwAAAQEAygyRKgUIxj1wjkvwfYP3QIoZ1gpP5gayx5z4b1nxuu7cD3bu7f2hLAve3cwcbDpkjeLP8Lj2Sz6VdzIvhDvVF+ZN7qwx8bsmPElVoiiZJecxuYt6wizg8IPxLf6NQknfxkKEv0QIeSlN8IQlXVaCz04FiYmFvincPeyvszTXTXcVf6YWXHNbqtm6p6t4kxf4rpm9/lWR8VapzaPM3/669fqrfAkIjkUGEdzD3wUWpHtgGpmNdAW6My3lyWhYTm4INftpDzsL47lXo1UNGwvlhaLneMdGQP/1+t0k3wsNzggzLQSV8GN+jy0jIbSsc6HlIk663OLKz6vY+fccGlE30Q== root@hadoop1- 1

- 2

- 3

- 4

- 5

- 6

看到authorized_keys文件中存在三行认证信息,三行末尾分别是root@hadoop1,root@hadoop2和root@hadoop3,说明写入成功。然后把authorized_keys文件的属性改为600,命令如下:

[root@hadoop1 .ssh]# chmod 600 authorized_keys- 1

将这个认证文件拷贝到hadoop2和hadoop3中,覆盖掉原来的认证文件,过程中需要输出目标服务器密码,命令如下:

[root@hadoop1 .ssh]# scp authorized_keys hadoop2:/root/.ssh [root@hadoop1 .ssh]# scp authorized_keys hadoop3:/root/.ssh- 1

- 2

分别在三台服务器上验证无密码通信,下面展示了再hadoop1上验证,如下所示:

[root@hadoop1 home]# ssh hadoop2 Last login: Thu Nov 2 02:18:57 2017 from hadoop3 [root@hadoop2 ~]# exit logout Connection to hadoop2 closed. [root@hadoop1 home]# ssh hadoop3 Last login: Wed Nov 1 03:18:41 2017 from hadoop2 [root@hadoop3 ~]# exit logout Connection to hadoop3 closed. [root@hadoop1 home]#- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

看到hadoop1不需要输入密码就可以和hadoop2和hadoop3通信。当然在hadoop2和hadoop3也需要验证,这里不再赘述。到这里无密码通信就结束了。

安装JDK

从oracle下载jdk,三台服务器上都要安装好JDK,自定义目录解压就OK了。注意三台服务器的JDK安装目录要相同,否则后面会麻烦。配置环境变量,打开/etc/profile文件,在文件末尾加上如下命令:

export JAVA_HOME=/usr/java/jdk1.8 export CLASSPATH=.:$JAVA_HOME/jre/lib/rt.jar:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar export PATH=$PATH:$JAVA_HOME/bin- 1

- 2

- 3

环境变量生效:

source /etc/profile- 1

然后使用 java -version命令验证环境变量设置是否生效。如下:

[root@hadoop1 home]# java -version java version "1.8.0_91" Java(TM) SE Runtime Environment (build 1.8.0_91-b14) Java HotSpot(TM) 64-Bit Server VM (build 25.91-b14, mixed mode)- 1

- 2

- 3

- 4

安装Hadoop

从Apache下载hadoop2.8.2版本,解压为hadoop,我们现在hadoop1服务器上安装并配置hadoop,配置完成之后,复制到另外两台服务器上就可以了。

配置JAVA_HOME和HADOOP_PREFIX,打开hadoop目录下etc/hadoop/hadoop-env.sh文件,修改参数为正确的值,如下:[root@hadoop1 hadoop]# more hadoop-env.sh # The only required environment variable is JAVA_HOME. All others are # optional. When running a distributed configuration it is best to # set JAVA_HOME in this file, so that it is correctly defined on # remote nodes. # The java implementation to use. export JAVA_HOME=/home/software/jdk1.8 #请修改为你的目录 export HADOOP_PREFIX=/home/software/hadoop #请修改为你的目录- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

修改配置

主要是修改slaves、core-site.xml,hdfs-site.xml,yarn-site.xml,maped-site.xml。文件的内容如下:

slaves文件

hadoop2 hadoop3- 1

- 2

core-site.xml文件

fs.defaultFS hdfs://hadoop1:9000 io.file.buffer.size 131072 hadoop.tmp.dir /home/software/hadoop/tmp - 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

hdfs-site.xml

dfs.namenode.secondary.http-address hadoop1:50090 dfs.replication 2 dfs.namenode.name.dir file:/home/software/hadoop/hdfs/name dfs.datanode.data.dir file:/home/software/hadoop/hdfs/data - 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

yarn-site.xml文件

yarn.nodemanager.aux-services mapreduce_shuffle yarn.resourcemanager.address hadoop1:8032 yarn.resourcemanager.scheduler.address hadoop1:8030 yarn.resourcemanager.resource-tracker.address hadoop1:8031 yarn.resourcemanager.admin.address hadoop1:8033 yarn.resourcemanager.webapp.address hadoop1:8088 - 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

maped-site.xml文件

mapreduce.framework.name yarn mapreduce.jobhistory.address hadoop1:10020 mapreduce.jobhistory.address hadoop1:19888 - 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

配置修改完成之后,需要把hadoop的整个目录向hadoop2和hadoop3中都复制一份,复制过程你随意选择,且要注意目录必须和hadoop1服务器的目录相同。

开放端口

上面步骤中用到了一些端口,因为是测试环境为了简单起见,统一开放用到的全部端口。三台服务器都要开放,分别在三台服务器上进行下面的操作。打开/etc/sysconfig/iptables文件,修改内容如下:

# Firewall configuration written by system-config-firewall # Manual customization of this file is not recommended. *filter :INPUT ACCEPT [0:0] :FORWARD ACCEPT [0:0] :OUTPUT ACCEPT [0:0] -A INPUT -m state --state ESTABLISHED,RELATED -j ACCEPT -A INPUT -p icmp -j ACCEPT -A INPUT -i lo -j ACCEPT -A INPUT -m state --state NEW -m tcp -p tcp --dport 22 -j ACCEPT -A INPUT -m state --state NEW -m tcp -p tcp --dport 8088 -j ACCEPT -A INPUT -m state --state NEW -m tcp -p tcp --dport 9000 -j ACCEPT -A INPUT -m state --state NEW -m tcp -p tcp --dport 8030 -j ACCEPT -A INPUT -m state --state NEW -m tcp -p tcp --dport 8031 -j ACCEPT -A INPUT -m state --state NEW -m tcp -p tcp --dport 8032 -j ACCEPT -A INPUT -m state --state NEW -m tcp -p tcp --dport 8033 -j ACCEPT -A INPUT -m state --state NEW -m tcp -p tcp --dport 50010 -j ACCEPT -A INPUT -m state --state NEW -m tcp -p tcp --dport 50070 -j ACCEPT -A INPUT -j REJECT --reject-with icmp-host-prohibited -A FORWARD -j REJECT --reject-with icmp-host-prohibited- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

并执行下面命令生效:

[root@hadoop3 hadoop]# /etc/init.d/iptables restart- 1

再次重申:三台服务器都要开放端口

启动集群

启动集群之前我们需要格式化namenode,命令如下,这条命令只需要在hadoop1上执行。:

[root@hadoop1 hadoop]# bin/hdfs namenode -format- 1

最后使用start-all.sh启动集群,命令如下,这条命令只需要在hadoop1上执行:

[root@hadoop1 hadoop]# sbin/start-all.sh This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh 17/10/31 18:50:22 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable Starting namenodes on [hadoop1] hadoop1: starting namenode, logging to /home/software/hadoop/logs/hadoop-root-namenode-hadoop1.out hadoop2: starting datanode, logging to /home/software/hadoop/logs/hadoop-root-datanode-hadoop2.out hadoop3: starting datanode, logging to /home/software/hadoop/logs/hadoop-root-datanode-hadoop3.out Starting secondary namenodes [hadoop1] hadoop1: starting secondarynamenode, logging to /home/software/hadoop/logs/hadoop-root-secondarynamenode-hadoop1.out 17/10/31 18:50:42 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable starting yarn daemons starting resourcemanager, logging to /home/software/hadoop/logs/yarn-root-resourcemanager-hadoop1.out hadoop2: starting nodemanager, logging to /home/software/hadoop/logs/yarn-root-nodemanager-hadoop2.out hadoop3: starting nodemanager, logging to /home/software/hadoop/logs/yarn-root-nodemanager-hadoop3.out- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

看到输出中包括所有节点的启动以及日志文件的位置。使用jps查看三台服务器上的节点。

hadoop1上执行jps如下:[root@hadoop1 hadoop]# jps 13393 SecondaryNameNode 13547 ResourceManager 14460 Jps 13199 NameNode- 1

- 2

- 3

- 4

- 5

hadoop2上执行jps如下:

[root@hadoop2 home]# jps 4497 NodeManager 4386 DataNode 5187 Jps- 1

- 2

- 3

- 4

hadoop3上执行jps如下:

[root@hadoop3 hadoop]# jps 23474 Jps 4582 NodeManager 4476 DataNode- 1

- 2

- 3

- 4

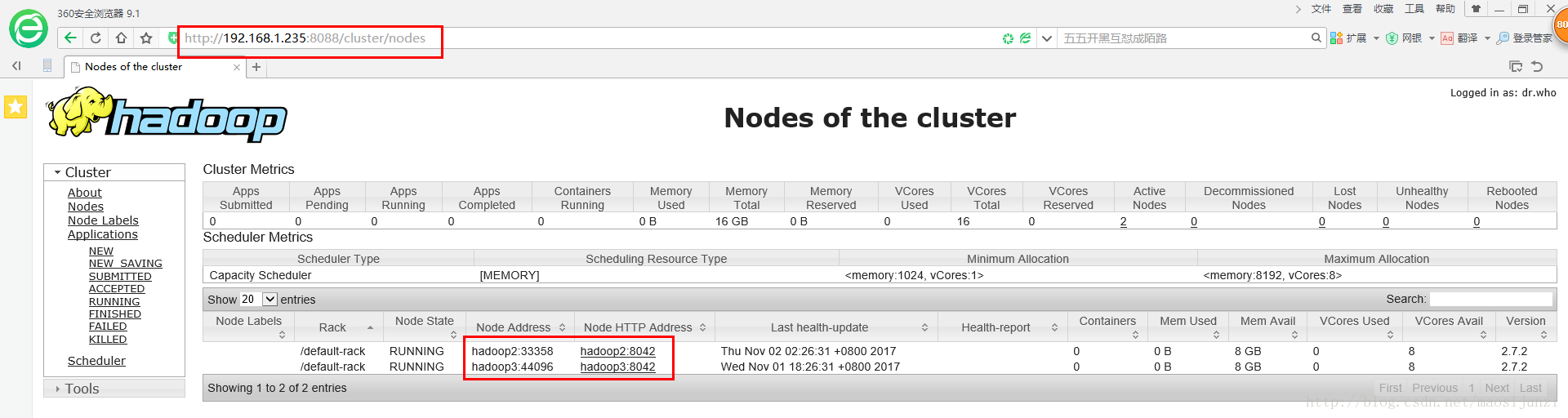

使用浏览器查看集群信息:

搞定

-

相关阅读:

二叉树的深度

GBASE 8A v953报错集锦51--非空列的数据加载

中国剩余定理(crt)和扩展中国剩余定理(excrt)

【云原生| K8S系列】Kubernetes Daemonset,全面指南

LeetCode:二分查找

activiti6 ui搭建

计算机网络—概述

拆解常见的6类爆款标题写作技巧!

源码安装gstreamer

设计LRU/LFU缓存结构

- 原文地址:https://blog.csdn.net/drhrht/article/details/126366681