-

深度学习CNN--眼睛姿态识别联练习

活动地址:CSDN21天学习挑战赛

这里就讲述整个识别流程,提炼出几个和以往发表文章不同的进行表述,相关识别文章参考连接1、混淆矩阵

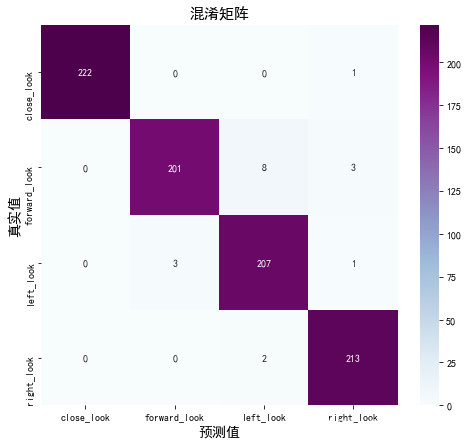

混淆矩阵通常用于评价训练模型的好坏,这里简单的列举一个二分类的例子,有类别A和B,预测结果正确且为A的数量记为TA,预测结果正确且为B的数量记作TB,那预测错误且为A的为FA,预测错误且为B的记为FB,这样就做成了一个混淆矩阵。混淆矩阵展示效果如下可以直观的看出预测结果及数量。

下面是混淆矩阵的代码from sklearn.metrics import confusion_matrix import seaborn as sns import pandas as pd # 定义一个绘制混淆矩阵图的函数 def plot_cm(labels, predictions): # 生成混淆矩阵 conf_numpy = confusion_matrix(labels, predictions) # 将矩阵转化为 DataFrame conf_df = pd.DataFrame(conf_numpy, index=class_names ,columns=class_names) plt.figure(figsize=(8,7)) sns.heatmap(conf_df, annot=True, fmt="d", cmap="BuPu") plt.title('混淆矩阵',fontsize=15) plt.ylabel('真实值',fontsize=14) plt.xlabel('预测值',fontsize=14) val_pre = [] val_label = [] for images, labels in val_ds:#这里可以取部分验证数据(.take(1))生成混淆矩阵 for image, label in zip(images, labels): # 需要给图片增加一个维度 img_array = tf.expand_dims(image, 0) # 使用模型预测图片中的人物 prediction = model.predict(img_array) val_pre.append(class_names[np.argmax(prediction)]) val_label.append(class_names[label]) plot_cm(val_label, val_pre)- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

下面是眼睛识别的混淆矩阵结果

2、常用网络结构调用方法

tensorflow自带许多程度的神经网络,可以通过函数进行调用,下面以VGG16模型调用为例代码如下model = tf.keras.applications.VGG16() # 打印模型信息 model.summary()- 1

- 2

- 3

- 4

下面列举出可以直接调用的网络模型:

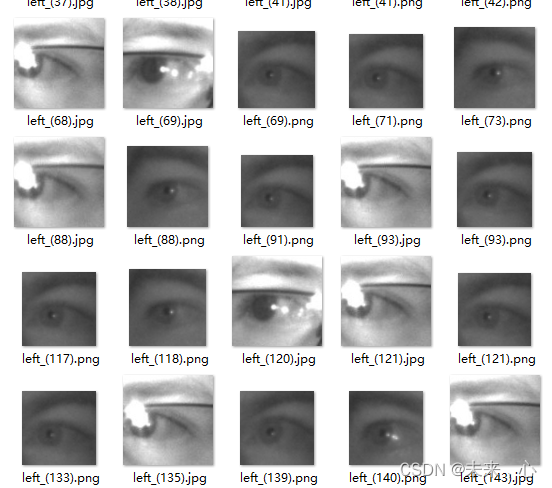

3、眼睛数据集连接如下:

链接:https://pan.baidu.com/s/12Waf0no8vKHhqUK8RzGmUA

提取码:n2v0

里面有四个文件:

4、最后给出完整代码参考import matplotlib.pyplot as plt # 支持中文 plt.rcParams['font.sans-serif'] = ['SimHei'] # 用来正常显示中文标签 plt.rcParams['axes.unicode_minus'] = False # 用来正常显示负号 import os,PIL # 设置随机种子尽可能使结果可以重现 import numpy as np np.random.seed(1) # 设置随机种子尽可能使结果可以重现 import tensorflow as tf tf.random.set_seed(1) import pathlib data_dir = "H:\python_project\python辅助算法\data\\017_Eye_dataset" data_dir = pathlib.Path(data_dir) image_count = len(list(data_dir.glob('*/*'))) print("图片总数为:",image_count) # 预处理数据 batch_size = 64 img_height = 224 img_width = 224 """ 关于image_dataset_from_directory()的详细介绍可以参考文章:https://mtyjkh.blog.csdn.net/article/details/117018789 """ train_ds = tf.keras.preprocessing.image_dataset_from_directory( data_dir, validation_split=0.2, subset="training", seed=12, image_size=(img_height, img_width), batch_size=batch_size) """ 关于image_dataset_from_directory()的详细介绍可以参考文章:https://mtyjkh.blog.csdn.net/article/details/117018789 """ val_ds = tf.keras.preprocessing.image_dataset_from_directory( data_dir, validation_split=0.2, subset="validation", seed=12, image_size=(img_height, img_width), batch_size=batch_size) class_names = train_ds.class_names print(class_names) # 配置数据集 AUTOTUNE = tf.data.AUTOTUNE train_ds = train_ds.cache().shuffle(1000).prefetch(buffer_size=AUTOTUNE) val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE) # 调用模型 model = tf.keras.applications.VGG16() # 打印模型信息 model.summary() # 设置初始学习率 initial_learning_rate = 1e-4 lr_schedule = tf.keras.optimizers.schedules.ExponentialDecay( initial_learning_rate, decay_steps=20, # 敲黑板!!!这里是指 steps,不是指epochs decay_rate=0.96, # lr经过一次衰减就会变成 decay_rate*lr staircase=True) # 将指数衰减学习率送入优化器 optimizer = tf.keras.optimizers.Adam(learning_rate=lr_schedule) # 编译 model.compile(optimizer=optimizer, loss ='sparse_categorical_crossentropy', metrics =['accuracy']) epochs = 10 # 训练 history = model.fit( train_ds, validation_data=val_ds, epochs=epochs ) # 训练过程存储在history里面 acc = history.history['accuracy'] val_acc = history.history['val_accuracy'] loss = history.history['loss'] val_loss = history.history['val_loss'] epochs_range = range(epochs) # 展示训练结果 plt.figure(figsize=(12, 4)) plt.subplot(1, 2, 1) plt.plot(epochs_range, acc, label='Training Accuracy') plt.plot(epochs_range, val_acc, label='Validation Accuracy') plt.legend(loc='lower right') plt.title('Training and Validation Accuracy') plt.subplot(1, 2, 2) plt.plot(epochs_range, loss, label='Training Loss') plt.plot(epochs_range, val_loss, label='Validation Loss') plt.legend(loc='upper right') plt.title('Training and Validation Loss') plt.show() from sklearn.metrics import confusion_matrix import seaborn as sns import pandas as pd # 定义一个绘制混淆矩阵图的函数 def plot_cm(labels, predictions): # 生成混淆矩阵 conf_numpy = confusion_matrix(labels, predictions) # 将矩阵转化为 DataFrame conf_df = pd.DataFrame(conf_numpy, index=class_names, columns=class_names) plt.figure(figsize=(8, 7)) sns.heatmap(conf_df, annot=True, fmt="d", cmap="BuPu") plt.title('混淆矩阵', fontsize=15) plt.ylabel('真实值', fontsize=14) plt.xlabel('预测值', fontsize=14) val_pre = [] val_label = [] for images, labels in val_ds:#这里可以取部分验证数据(.take(1))生成混淆矩阵 for image, label in zip(images, labels): # 需要给图片增加一个维度 img_array = tf.expand_dims(image, 0) # 使用模型预测图片中的人物 prediction = model.predict(img_array) val_pre.append(class_names[np.argmax(prediction)]) val_label.append(class_names[label]) # 保存模型 model.save('model/17_model.h5') # 加载模型 new_model = tf.keras.models.load_model('model/17_model.h5') # 采用加载的模型(new_model)来看预测结果 plt.figure(figsize=(10, 5)) # 图形的宽为10高为5 plt.suptitle("预测结果展示") for images, labels in val_ds.take(1): for i in range(8): ax = plt.subplot(2, 4, i + 1) # 显示图片 plt.imshow(images[i].numpy().astype("uint8")) # 需要给图片增加一个维度 img_array = tf.expand_dims(images[i], 0) # 使用模型预测图片中的人物 predictions = new_model.predict(img_array) plt.title(class_names[np.argmax(predictions)]) plt.axis("off")- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

- 54

- 55

- 56

- 57

- 58

- 59

- 60

- 61

- 62

- 63

- 64

- 65

- 66

- 67

- 68

- 69

- 70

- 71

- 72

- 73

- 74

- 75

- 76

- 77

- 78

- 79

- 80

- 81

- 82

- 83

- 84

- 85

- 86

- 87

- 88

- 89

- 90

- 91

- 92

- 93

- 94

- 95

- 96

- 97

- 98

- 99

- 100

- 101

- 102

- 103

- 104

- 105

- 106

- 107

- 108

- 109

- 110

- 111

- 112

- 113

- 114

- 115

- 116

- 117

- 118

- 119

- 120

- 121

- 122

- 123

- 124

- 125

- 126

- 127

- 128

- 129

- 130

- 131

- 132

- 133

- 134

- 135

- 136

- 137

- 138

- 139

- 140

- 141

- 142

- 143

- 144

- 145

- 146

- 147

- 148

- 149

- 150

- 151

- 152

- 153

- 154

- 155

- 156

- 157

- 158

- 159

下面给出VGG16的具体参数展示,这个模型参数比较多,有很多种方法可以进行优化

Model: "vgg16" _________________________________________________________________ Layer (type) Output Shape Param # ================================================================= input_1 (InputLayer) [(None, 224, 224, 3)] 0 _________________________________________________________________ block1_conv1 (Conv2D) (None, 224, 224, 64) 1792 _________________________________________________________________ block1_conv2 (Conv2D) (None, 224, 224, 64) 36928 _________________________________________________________________ block1_pool (MaxPooling2D) (None, 112, 112, 64) 0 _________________________________________________________________ block2_conv1 (Conv2D) (None, 112, 112, 128) 73856 _________________________________________________________________ block2_conv2 (Conv2D) (None, 112, 112, 128) 147584 _________________________________________________________________ block2_pool (MaxPooling2D) (None, 56, 56, 128) 0 _________________________________________________________________ block3_conv1 (Conv2D) (None, 56, 56, 256) 295168 _________________________________________________________________ block3_conv2 (Conv2D) (None, 56, 56, 256) 590080 _________________________________________________________________ block3_conv3 (Conv2D) (None, 56, 56, 256) 590080 _________________________________________________________________ block3_pool (MaxPooling2D) (None, 28, 28, 256) 0 _________________________________________________________________ block4_conv1 (Conv2D) (None, 28, 28, 512) 1180160 _________________________________________________________________ block4_conv2 (Conv2D) (None, 28, 28, 512) 2359808 _________________________________________________________________ block4_conv3 (Conv2D) (None, 28, 28, 512) 2359808 _________________________________________________________________ block4_pool (MaxPooling2D) (None, 14, 14, 512) 0 _________________________________________________________________ block5_conv1 (Conv2D) (None, 14, 14, 512) 2359808 _________________________________________________________________ block5_conv2 (Conv2D) (None, 14, 14, 512) 2359808 _________________________________________________________________ block5_conv3 (Conv2D) (None, 14, 14, 512) 2359808 _________________________________________________________________ block5_pool (MaxPooling2D) (None, 7, 7, 512) 0 _________________________________________________________________ flatten (Flatten) (None, 25088) 0 _________________________________________________________________ fc1 (Dense) (None, 4096) 102764544 _________________________________________________________________ fc2 (Dense) (None, 4096) 16781312 _________________________________________________________________ predictions (Dense) (None, 1000) 4097000 ================================================================= Total params: 138,357,544 Trainable params: 138,357,544 Non-trainable params: 0- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

- 24

- 25

- 26

- 27

- 28

- 29

- 30

- 31

- 32

- 33

- 34

- 35

- 36

- 37

- 38

- 39

- 40

- 41

- 42

- 43

- 44

- 45

- 46

- 47

- 48

- 49

- 50

- 51

- 52

- 53

下面是训练曲线(训练和验证)的代码展示及曲线图:

acc = history.history['accuracy'] val_acc = history.history['val_accuracy'] loss = history.history['loss'] val_loss = history.history['val_loss'] epochs_range = range(epochs) plt.figure(figsize=(12, 4)) plt.subplot(1, 2, 1) plt.plot(epochs_range, acc, label='Training Accuracy') plt.plot(epochs_range, val_acc, label='Validation Accuracy') plt.legend(loc='lower right') plt.title('Training and Validation Accuracy') plt.subplot(1, 2, 2) plt.plot(epochs_range, loss, label='Training Loss') plt.plot(epochs_range, val_loss, label='Validation Loss') plt.legend(loc='upper right') plt.title('Training and Validation Loss') plt.show()- 1

- 2

- 3

- 4

- 5

- 6

- 7

- 8

- 9

- 10

- 11

- 12

- 13

- 14

- 15

- 16

- 17

- 18

- 19

- 20

- 21

- 22

- 23

-

相关阅读:

基础选择器汇总——标签选择器,类选择器、id选择器、通配符选择器

Ubuntu 20.04 升级 GLIBC 2.35

CloudCompare 技巧四 点云匹配

SpringBoot 常用注解的原理和使用

Nginx

POST请求

网址收藏-技术类

SpringBoot项目启动的时候直接退出了?

9_数据的增删改查(重点)

项目管理之人力资源管理

- 原文地址:https://blog.csdn.net/self_Name_/article/details/126328288