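Preface

Anyone who uses Elasticsearch out of the box to search Chinese content runs into an awkward problem: Chinese words are split into individual characters. When you build a Kibana visualization and group by term, every single character ends up as its own bucket.

This happens because Elasticsearch's default standard analyzer splits Chinese words into individual characters. Installing a Chinese analyzer plugin solves the problem.

This article assumes Elasticsearch 7.15.2 and Kibana 7.15.2 are already installed.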
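To make the effect concrete, here is a minimal Python sketch that imitates the per-character splitting. It is only a rough stand-in for the standard analyzer (the regular expression and the function names `standard_like_tokens` and `term_buckets` are assumptions made for illustration, not Elasticsearch code), but it shows why grouping by term produces one bucket per character.

```python
import re
from collections import Counter

# One CJK ideograph, or a run of ASCII letters/digits. This is a rough
# imitation of the default analyzer, not its real Unicode segmentation.
_TOKEN = re.compile(r"[\u4e00-\u9fff]|[A-Za-z0-9]+")


def standard_like_tokens(text):
    """Split text roughly the way the default analyzer treats Chinese."""
    return [m.group(0).lower() for m in _TOKEN.finditer(text)]


def term_buckets(docs):
    """Count how many documents contain each term, similar in spirit to grouping by term."""
    buckets = Counter()
    for doc in docs:
        buckets.update(set(standard_like_tokens(doc)))
    return buckets


if __name__ == "__main__":
    print(standard_like_tokens("中国渔船"))  # ['中', '国', '渔', '船']
    print(term_buckets(["中国渔船", "中国驻洛杉矶领事馆"])["中"])  # 2
```

The word 中国 never appears as a bucket key; only its two characters do, which is exactly the symptom described above.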

Plugin download

To download the prebuilt zip package:

- pinyin plugin download: https://github.com/medcl/elasticsearch-analysis-pinyin

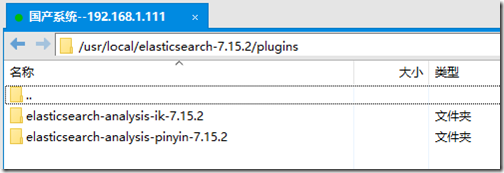

Unzip the downloaded, prebuilt zip package and copy the result into the Elasticsearch plugins directory (/usr/local/elasticsearch-7.15.2/plugins).

- Restart Elasticsearch: systemctl restart elasticsearch.service
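After the restart it is worth confirming that the plugins were actually loaded. The sketch below parses the plain-text output of `GET _cat/plugins` (one `node plugin version` line per plugin). The plugin names `analysis-ik` and `analysis-pinyin` and the node name `node-1` are assumptions for illustration; check them against what your own cluster prints.

```python
def installed_plugins(cat_plugins_text):
    """Return the set of plugin names found in `GET _cat/plugins` text output.

    Each non-empty line is expected to look like: <node> <plugin> <version>.
    """
    names = set()
    for line in cat_plugins_text.splitlines():
        parts = line.split()
        if len(parts) >= 2:
            names.add(parts[1])
    return names


def missing_plugins(cat_plugins_text, required=("analysis-ik", "analysis-pinyin")):
    """Return the required plugin names that are not listed, sorted alphabetically."""
    return sorted(set(required) - installed_plugins(cat_plugins_text))


if __name__ == "__main__":
    # Hypothetical output copied from the Kibana console.
    sample = "node-1 analysis-ik     7.15.2\nnode-1 analysis-pinyin 7.15.2\n"
    print(missing_plugins(sample))  # []
```

An empty list means both analyzers are available; any name in the list points at a plugin that was not unpacked into the plugins directory or failed to load.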

IK analyzer test

- # Create the index

- put index

-

- # Create the mapping

- post index/_mapping

- {

- "properties": {

- "content": {

- "type": "text",

- "analyzer": "ik_max_word",

- "search_analyzer": "ik_smart"

- }

- }

-

- }

-

- # Add documents

- post /index/_create/1

- {"content":"美国留给伊拉克的是个烂摊子吗"}

-

- post /index/_create/2

- {"content":"公安部:各地校车将享最高路权"}

-

- post /index/_create/3

- {"content":"中韩渔警冲突调查:韩警平均每天扣1艘中国渔船"}

-

- post /index/_create/4

- {"content":"中国驻洛杉矶领事馆遭亚裔男子枪击 嫌犯已自首"}

-

- # Search with a condition

- post /index/_search

- {

- "query" : { "match" : { "content" : "中国" }},

- "highlight" : {

- "pre_tags" : ["<tag1>", "<tag2>"],

- "post_tags" : ["</tag1>", "</tag2>"],

- "fields" : {

- "content" : {}

- }

- }

- }
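The four `_create` requests above can also be sent in one round trip through the `_bulk` API, which takes newline-delimited JSON: one action line followed by one source line per document. The following is a minimal sketch that only builds the request body; the helper name `bulk_create_body` is an assumption, and sending the body (for example with `POST /_bulk` in the Kibana console) is left to the reader.

```python
import json


def bulk_create_body(index, docs):
    """Build an NDJSON body for the _bulk API using `create` actions.

    `docs` maps document id -> source dict. The body must end with a newline.
    """
    lines = []
    for doc_id, source in docs.items():
        lines.append(json.dumps({"create": {"_index": index, "_id": doc_id}}))
        # ensure_ascii=False keeps the Chinese text readable in the output
        lines.append(json.dumps(source, ensure_ascii=False))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    docs = {
        "1": {"content": "美国留给伊拉克的是个烂摊子吗"},
        "2": {"content": "公安部:各地校车将享最高路权"},
    }
    print(bulk_create_body("index", docs), end="")
```

Like `_create`, the `create` action fails for an id that already exists, so re-running the body does not overwrite documents.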

Response:

- {

- "took" : 4,

- "timed_out" : false,

- "_shards" : {

- "total" : 1,

- "successful" : 1,

- "skipped" : 0,

- "failed" : 0

- },

- "hits" : {

- "total" : {

- "value" : 2,

- "relation" : "eq"

- },

- "max_score" : 0.642793,

- "hits" : [

- {

- "_index" : "index",

- "_type" : "_doc",

- "_id" : "3",

- "_score" : 0.642793,

- "_source" : {

- "content" : "中韩渔警冲突调查:韩警平均每天扣1艘中国渔船"

- },

- "highlight" : {

- "content" : [

- "中韩渔警冲突调查:韩警平均每天扣1艘<tag1>中国</tag1>渔船"

- ]

- }

- },

- {

- "_index" : "index",

- "_type" : "_doc",

- "_id" : "4",

- "_score" : 0.642793,

- "_source" : {

- "content" : "中国驻洛杉矶领事馆遭亚裔男子枪击 嫌犯已自首"

- },

- "highlight" : {

- "content" : [

- "<tag1>中国</tag1>驻洛杉矶领事馆遭亚裔男子枪击 嫌犯已自首"

- ]

- }

- }

- ]

- }

- }
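When checking results from a script rather than by eye, it helps to pull the highlighted terms out of the response. The sketch below walks `hits.hits[].highlight` and extracts whatever sits between the highlight tags. It assumes the fragments are wrapped in `<tag1>`...`</tag1>`; the function name `highlighted_terms` and the trimmed sample response are illustrative assumptions.

```python
import re


def highlighted_terms(response, field="content", tag="tag1"):
    """Map each hit's _id to the terms wrapped in <tag>...</tag> for one field."""
    pattern = re.compile(r"<{0}>(.*?)</{0}>".format(re.escape(tag)))
    result = {}
    for hit in response["hits"]["hits"]:
        fragments = hit.get("highlight", {}).get(field, [])
        result[hit["_id"]] = [t for frag in fragments for t in pattern.findall(frag)]
    return result


if __name__ == "__main__":
    # Trimmed-down response with only the keys this helper reads.
    response = {
        "hits": {
            "hits": [
                {"_id": "3", "highlight": {"content": ["每天扣1艘<tag1>中国</tag1>渔船"]}},
                {"_id": "4", "highlight": {"content": ["<tag1>中国</tag1>驻洛杉矶领事馆"]}},
            ]
        }
    }
    print(highlighted_terms(response))  # {'3': ['中国'], '4': ['中国']}
```

Seeing 中国 returned as one highlighted term, rather than 中 and 国 separately, is a quick confirmation that the IK analyzer was applied to the field.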

Pinyin analyzer test

1. Create an index with a custom pinyin analyzer

- PUT /medcl/

- {

- "settings" : {

- "analysis" : {

- "analyzer" : {

- "pinyin_analyzer" : {

- "tokenizer" : "my_pinyin"

- }

- },

- "tokenizer" : {

- "my_pinyin" : {

- "type" : "pinyin",

- "keep_separate_first_letter" : false,

- "keep_full_pinyin" : true,

- "keep_original" : true,

- "limit_first_letter_length" : 16,

- "lowercase" : true,

- "remove_duplicated_term" : true

- }

- }

- }

- }

- }

2. Test the analyzer on a Chinese name, for example 刘德华

- GET /medcl/_analyze

- {

- "text": ["刘德华"],

- "analyzer": "pinyin_analyzer"

- }

Response:

- {

- "tokens" : [

- {

- "token" : "liu",

- "start_offset" : 0,

- "end_offset" : 1,

- "type" : "word",

- "position" : 0

- },

- {

- "token" : "de",

- "start_offset" : 1,

- "end_offset" : 2,

- "type" : "word",

- "position" : 1

- },

- {

- "token" : "hua",

- "start_offset" : 2,

- "end_offset" : 3,

- "type" : "word",

- "position" : 2

- },

- {

- "token" : "刘德华",

- "start_offset" : 0,

- "end_offset" : 3,

- "type" : "word",

- "position" : 3

- },

- {

- "token" : "ldh",

- "start_offset" : 0,

- "end_offset" : 3,

- "type" : "word",

- "position" : 4

- }

- ]

- }
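The five tokens above follow directly from the tokenizer options: full pinyin per character, the original text, and the joined first letters. The sketch below reproduces that token list with a hand-written lookup table. It is a toy model under stated assumptions (the `PINYIN` table covers only these three characters, and `pinyin_tokens` is a hypothetical helper), not the plugin's actual algorithm.

```python
# Toy lookup table covering only the characters used in this example.
PINYIN = {"刘": "liu", "德": "de", "华": "hua"}


def pinyin_tokens(text, keep_full_pinyin=True, keep_original=True,
                  keep_first_letter=True, limit_first_letter_length=16):
    """Imitate how the option flags decide which tokens are emitted."""
    syllables = [PINYIN[ch] for ch in text if ch in PINYIN]
    tokens = []
    if keep_full_pinyin:
        tokens.extend(syllables)              # liu, de, hua
    if keep_original:
        tokens.append(text)                   # 刘德华
    if keep_first_letter and syllables:
        first_letters = "".join(s[0] for s in syllables)
        tokens.append(first_letters[:limit_first_letter_length])  # ldh
    # remove_duplicated_term: drop repeats, keeping the first occurrence
    return list(dict.fromkeys(tokens))


if __name__ == "__main__":
    print(pinyin_tokens("刘德华"))  # ['liu', 'de', 'hua', '刘德华', 'ldh']
```

Turning a flag off removes the matching tokens, which is a convenient way to reason about what a given combination of settings will index before creating the index.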

3. Create the mapping

- POST /medcl/_mapping

- {

- "properties": {

- "name": {

- "type": "keyword",

- "fields": {

- "pinyin": {

- "type": "text",

- "store": false,

- "term_vector": "with_offsets",

- "analyzer": "pinyin_analyzer",

- "boost": 10

- }

- }

- }

- }

-

- }

4. Add a document

- POST /medcl/_create/andy

- {"name":"刘德华"}

5. Test the search

- # Search with a condition

- GET /medcl/_search

- {

- "query": {

- "match": {

- "name.pinyin": "liu"

- }

- }

- }

6. Use the pinyin token filter

- PUT /medcl1/

- {

- "settings" : {

- "analysis" : {

- "analyzer" : {

- "user_name_analyzer" : {

- "tokenizer" : "whitespace",

- "filter" : "pinyin_first_letter_and_full_pinyin_filter"

- }

- },

- "filter" : {

- "pinyin_first_letter_and_full_pinyin_filter" : {

- "type" : "pinyin",

- "keep_first_letter" : true,

- "keep_full_pinyin" : false,

- "keep_none_chinese" : true,

- "keep_original" : false,

- "limit_first_letter_length" : 16,

- "lowercase" : true,

- "trim_whitespace" : true,

- "keep_none_chinese_in_first_letter" : true

- }

- }

- }

- }

- }

Token test: 刘德华 张学友 郭富城 黎明 四大天王

- GET /medcl1/_analyze

- {

- "text": ["刘德华 张学友 郭富城 黎明 四大天王"],

- "analyzer": "user_name_analyzer"

- }

Response:

- {

- "tokens" : [

- {

- "token" : "ldh",

- "start_offset" : 0,

- "end_offset" : 3,

- "type" : "word",

- "position" : 0

- },

- {

- "token" : "zxy",

- "start_offset" : 4,

- "end_offset" : 7,

- "type" : "word",

- "position" : 1

- },

- {

- "token" : "gfc",

- "start_offset" : 8,

- "end_offset" : 11,

- "type" : "word",

- "position" : 2

- },

- {

- "token" : "lm",

- "start_offset" : 12,

- "end_offset" : 14,

- "type" : "word",

- "position" : 3

- },

- {

- "token" : "sdtw",

- "start_offset" : 15,

- "end_offset" : 19,

- "type" : "word",

- "position" : 4

- }

- ]

- }
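Here the whitespace tokenizer splits the input first, and the pinyin filter then reduces each name to its first letters. The sketch below imitates that two-stage pipeline, including the offsets and positions shown in the response. The `PINYIN` table and the function name `first_letter_tokens` are assumptions made for illustration; the real filter has many more options.

```python
import re

# Toy lookup table covering only the characters in the test sentence.
PINYIN = {
    "刘": "liu", "德": "de", "华": "hua",
    "张": "zhang", "学": "xue", "友": "you",
    "郭": "guo", "富": "fu", "城": "cheng",
    "黎": "li", "明": "ming",
    "四": "si", "大": "da", "天": "tian", "王": "wang",
}


def first_letter_tokens(text):
    """Whitespace-tokenize, then keep only the pinyin first letters of each token."""
    tokens = []
    for position, match in enumerate(re.finditer(r"\S+", text)):
        letters = "".join(PINYIN[ch][0] for ch in match.group(0) if ch in PINYIN)
        tokens.append({
            "token": letters,
            "start_offset": match.start(),
            "end_offset": match.end(),
            "position": position,
        })
    return tokens


if __name__ == "__main__":
    for token in first_letter_tokens("刘德华 张学友 郭富城 黎明 四大天王"):
        print(token["token"], token["start_offset"], token["end_offset"])
```

Because the tokenizer runs before the filter, each name keeps the offsets of its original span, which is why `ldh` covers offsets 0 to 3 rather than one character.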

7. Use in phrase queries

- PUT /medcl2/

- {

- "settings" : {

- "analysis" : {

- "analyzer" : {

- "pinyin_analyzer" : {

- "tokenizer" : "my_pinyin"

- }

- },

- "tokenizer" : {

- "my_pinyin" : {

- "type" : "pinyin",

- "keep_first_letter":false,

- "keep_separate_first_letter" : false,

- "keep_full_pinyin" : true,

- "keep_original" : false,

- "limit_first_letter_length" : 16,

- "lowercase" : true

- }

- }

- }

- }

- }

- GET /medcl2/_search

- {

- "query": {"match_phrase": {

- "name.pinyin": "刘德华"

- }}

- }

- PUT /medcl3/

- {

- "settings" : {

- "analysis" : {

- "analyzer" : {

- "pinyin_analyzer" : {

- "tokenizer" : "my_pinyin"

- }

- },

- "tokenizer" : {

- "my_pinyin" : {

- "type" : "pinyin",

- "keep_first_letter":true,

- "keep_separate_first_letter" : true,

- "keep_full_pinyin" : true,

- "keep_original" : false,

- "limit_first_letter_length" : 16,

- "lowercase" : true

- }

- }

- }

- }

- }

-

- POST /medcl3/_mapping

- {

- "properties": {

- "name": {

- "type": "keyword",

- "fields": {

- "pinyin": {

- "type": "text",

- "store": false,

- "term_vector": "with_offsets",

- "analyzer": "pinyin_analyzer",

- "boost": 10

- }

- }

- }

- }

- }

-

-

- GET /medcl3/_analyze

- {

- "text": ["刘德华"],

- "analyzer": "pinyin_analyzer"

- }

-

- POST /medcl3/_create/andy

- {"name":"刘德华"}

-

- GET /medcl3/_search

- {

- "query": {"match_phrase": {

- "name.pinyin": "刘德h"

- }}

- }

-

- GET /medcl3/_search

- {

- "query": {"match_phrase": {

- "name.pinyin": "刘dh"

- }}

- }

-

- GET /medcl3/_search

- {

- "query": {"match_phrase": {

- "name.pinyin": "liudh"

- }}

- }

-

- GET /medcl3/_search

- {

- "query": {"match_phrase": {

- "name.pinyin": "liudeh"

- }}

- }

-

- GET /medcl3/_search

- {

- "query": {"match_phrase": {

- "name.pinyin": "liude华"

- }}

- }
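The five phrase queries above differ only in the search text, so they can be generated instead of typed out. The sketch below prints console-style requests for a list of inputs. The helper names `match_phrase_body` and `console_requests` are assumptions; the output is meant to be pasted into the Kibana console, and nothing is sent to Elasticsearch.

```python
import json


def match_phrase_body(field, text):
    """Build the body of a match_phrase query on one field."""
    return {"query": {"match_phrase": {field: text}}}


def console_requests(index, field, inputs):
    """Return one console-style `GET /<index>/_search` request per input text."""
    blocks = []
    for text in inputs:
        body = json.dumps(match_phrase_body(field, text), ensure_ascii=False, indent=2)
        blocks.append("GET /{}/_search\n{}".format(index, body))
    return blocks


if __name__ == "__main__":
    inputs = ["刘德h", "刘dh", "liudh", "liudeh", "liude华"]
    print("\n\n".join(console_requests("medcl3", "name.pinyin", inputs)))
```

Mixing Chinese characters, full pinyin, and first letters in the same list makes it easy to see which combinations the `keep_separate_first_letter` setting lets a phrase query match.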