
Deep Learning Practice in Python: Code Implementations

I. Linear Model

Lecture

Code

    import numpy as np
    import matplotlib.pyplot as plt

    x_data = [1.0, 2.0, 3.0]
    y_data = [2.0, 4.0, 6.0]

    # Forward pass: y_pred = x * w (w is the global set by the loop below)
    def forward(x):
        return x * w

    # Loss: squared error for a single sample
    def loss(x, y):
        y_pred = forward(x)
        return (y_pred - y) * (y_pred - y)

    w_list = []
    mse_list = []
    # Sweep w from 0.0 to 4.0 and record the mean squared error for each value
    for w in np.arange(0.0, 4.1, 0.1):
        print("w=", w)
        l_sum = 0
        for x_v, y_v in zip(x_data, y_data):
            loss_v = loss(x_v, y_v)
            l_sum += loss_v
            print(x_v, y_v, loss_v)
        l_sum /= 3
        print("MSE=", l_sum)
        w_list.append(w)
        mse_list.append(l_sum)

    plt.plot(w_list, mse_list)
    plt.xlabel('w')
    plt.ylabel('loss')
    plt.show()
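The sweep in the code above locates the minimum by brute force. As a cross-check, and assuming the same bias-free model `y_pred = x * w`, setting the derivative of the MSE to zero gives `w* = sum(x*y) / sum(x*x)`. The sketch below evaluates that expression on the same data; the helper names `closed_form_w` and `mse` are illustrative and do not come from the original code.

```python
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

def closed_form_w(xs, ys):
    """Least-squares slope for the bias-free model y_pred = x * w."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)

def mse(w, xs, ys):
    """Mean squared error of y_pred = x * w over the data set."""
    return sum((x * w - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

w_star = closed_form_w(x_data, y_data)
print("w* =", w_star, "MSE =", mse(w_star, x_data, y_data))
```

For this data the closed form gives w* = 28 / 14 = 2.0, the value at which the sweep reports an MSE of 0.0.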

Results

w= 0.0

1.0 2.0 4.0

2.0 4.0 16.0

3.0 6.0 36.0

MSE= 18.666666666666668

w= 2.0

1.0 2.0 0.0

2.0 4.0 0.0

3.0 6.0 0.0

MSE= 0.0

w= 4.0

1.0 2.0 4.0

2.0 4.0 16.0

3.0 6.0 36.0

MSE= 18.666666666666668

Homework

Code

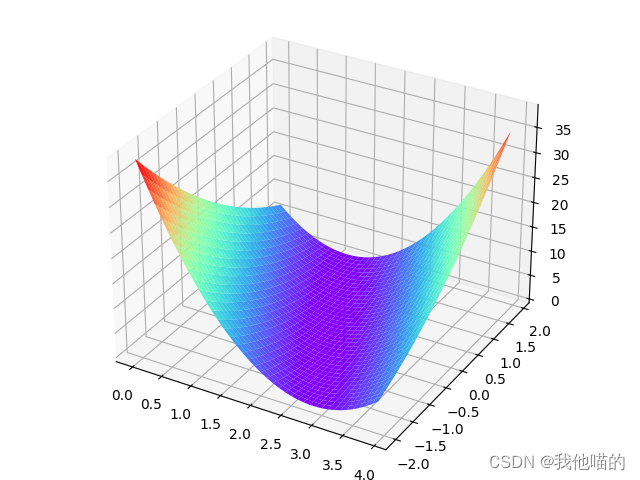

    import numpy as np
    import matplotlib.pyplot as plt
    from mpl_toolkits.mplot3d import Axes3D  # registers the 3D projection on older Matplotlib

    # The data follow y = 2x, so the minimum of the surface lies at w = 2, b = 0
    x_data = [1.0, 2.0, 3.0]
    y_data = [2.0, 4.0, 6.0]

    # Model with a bias term; w and b are the globals set by the loops below
    def forward(x):
        return x * w + b

    def loss(x, y):
        y_pred = forward(x)
        return (y_pred - y) * (y_pred - y)

    w_list = np.arange(0.0, 4.0, 0.1)
    b_list = np.arange(-2.0, 2.0, 0.1)
    mse_list = []
    for w in w_list:
        for b in b_list:
            print("w=", w)
            print("b=", b)
            l_sum = 0
            for x_v, y_v in zip(x_data, y_data):
                loss_v = loss(x_v, y_v)
                l_sum += loss_v
                print(x_v, y_v, loss_v)
            l_sum /= 3
            print("MSE=", l_sum)
            mse_list.append(l_sum)

    # Turn the two 1-D ranges into 2-D coordinate grids
    W, B = np.meshgrid(w_list, b_list)
    # mse_list was filled w-major, so reshape to (len(w), len(b)) and transpose to match the grids
    mse = np.array(mse_list).reshape(len(w_list), len(b_list)).T

    fig = plt.figure()
    ax = fig.add_subplot(projection='3d')
    ax.plot_surface(W, B, mse, cmap=plt.get_cmap('rainbow'))
    plt.show()

Results

II. Gradient Descent

Lecture

Basic gradient descent

Code

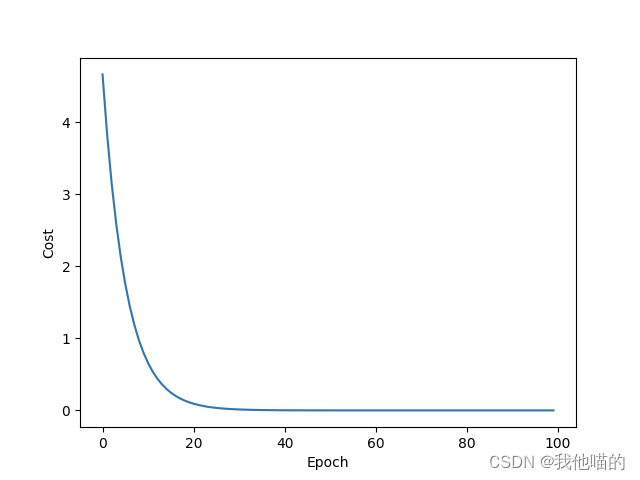

    import matplotlib.pyplot as plt

    x_data = [1.0, 2.0, 3.0]
    y_data = [2.0, 4.0, 6.0]
    w = 1.0  # initial guess

    def forward(x):
        return x * w

    # Mean squared error over the whole data set
    def cost(xs, ys):
        total = 0
        for x, y in zip(xs, ys):
            total += (forward(x) - y) ** 2
        return total / len(xs)

    # Gradient of the cost with respect to w, averaged over the data set
    def gradient(xs, ys):
        grad = 0
        for x, y in zip(xs, ys):
            grad += 2 * x * (forward(x) - y)
        return grad / len(xs)

    print("Predict (before training)", 4, forward(4))

    cost_list = []
    epoch_list = []
    for epoch in range(100):
        cost_v = cost(x_data, y_data)
        grad_v = gradient(x_data, y_data)
        w -= 0.01 * grad_v  # one update per epoch, learning rate 0.01
        cost_list.append(cost_v)
        epoch_list.append(epoch)
        print("Epoch:", epoch, "w=:", w, "cost=", cost_v)

    print("Predict (after training)", 4, forward(4))

    plt.plot(epoch_list, cost_list)
    plt.xlabel("Epoch")
    plt.ylabel("Cost")
    plt.show()

Results

Predict (before training) 4 4.0

Epoch: 0 w=: 1.0933333333333333 cost= 4.666666666666667

Epoch: 1 w=: 1.1779555555555554 cost= 3.8362074074074086

Epoch: 2 w=: 1.2546797037037036 cost= 3.1535329869958857

Epoch: 3 w=: 1.3242429313580246 cost= 2.592344272332262

Epoch: 4 w=: 1.3873135910979424 cost= 2.1310222071581117

Epoch: 5 w=: 1.4444976559288012 cost= 1.7517949663820642

Epoch: 6 w=: 1.4963445413754464 cost= 1.440053319920117

Epoch: 7 w=: 1.5433523841804047 cost= 1.1837878313441108

Epoch: 8 w=: 1.5859728283235668 cost= 0.9731262101573632

Epoch: 9 w=: 1.6246153643467005 cost= 0.7999529948031382

Epoch: 10 w=: 1.659651263674342 cost= 0.6575969151946154

Epoch: 11 w=: 1.6914171457314033 cost= 0.5405738908195378

Epoch: 12 w=: 1.7202182121298057 cost= 0.44437576375991855

Epoch: 13 w=: 1.7463311789976905 cost= 0.365296627844598

Epoch: 14 w=: 1.7700069356245727 cost= 0.3002900634939416

Epoch: 15 w=: 1.7914729549662791 cost= 0.2468517784170642

Epoch: 16 w=: 1.8109354791694263 cost= 0.2029231330489788

Epoch: 17 w=: 1.8285815011136133 cost= 0.16681183417217407

Epoch: 18 w=: 1.8445805610096762 cost= 0.1371267415488235

Epoch: 19 w=: 1.8590863753154396 cost= 0.11272427607497944

Epoch: 20 w=: 1.872238313619332 cost= 0.09266436490145864

Epoch: 21 w=: 1.8841627376815275 cost= 0.07617422636521683

Epoch: 22 w=: 1.8949742154979183 cost= 0.06261859959338009

Epoch: 23 w=: 1.904776622051446 cost= 0.051475271914629306

Epoch: 24 w=: 1.9136641373266443 cost= 0.04231496130368814

Epoch: 25 w=: 1.9217221511761575 cost= 0.03478477885657844

Epoch: 26 w=: 1.9290280837330496 cost= 0.02859463421027894

Epoch: 27 w=: 1.9356521292512983 cost= 0.023506060193480772

Epoch: 28 w=: 1.9416579305211772 cost= 0.01932302619282764

Epoch: 29 w=: 1.9471031903392007 cost= 0.015884386331668398

Epoch: 30 w=: 1.952040225907542 cost= 0.01305767153735723

Epoch: 31 w=: 1.9565164714895047 cost= 0.010733986344664803

Epoch: 32 w=: 1.9605749341504843 cost= 0.008823813841374291

Epoch: 33 w=: 1.9642546069631057 cost= 0.007253567147113681

Epoch: 34 w=: 1.9675908436465492 cost= 0.005962754575689583

Epoch: 35 w=: 1.970615698239538 cost= 0.004901649272531298

Epoch: 36 w=: 1.9733582330705144 cost= 0.004029373553099482

Epoch: 37 w=: 1.975844797983933 cost= 0.0033123241439168096

Epoch: 38 w=: 1.9780992835054327 cost= 0.0027228776607060357

Epoch: 39 w=: 1.980143350378259 cost= 0.002238326453885249

Epoch: 40 w=: 1.9819966376762883 cost= 0.001840003826269386

Epoch: 41 w=: 1.983676951493168 cost= 0.0015125649231412608

Epoch: 42 w=: 1.9852004360204722 cost= 0.0012433955919298103

Epoch: 43 w=: 1.9865817286585614 cost= 0.0010221264385926248

Epoch: 44 w=: 1.987834100650429 cost= 0.0008402333603648631

Epoch: 45 w=: 1.9889695845897222 cost= 0.0006907091659248264

Epoch: 46 w=: 1.9899990900280147 cost= 0.0005677936325753796

Epoch: 47 w=: 1.9909325082920666 cost= 0.0004667516012495216

Epoch: 48 w=: 1.9917788075181404 cost= 0.000383690560742734

Epoch: 49 w=: 1.9925461188164473 cost= 0.00031541069384432885

Epoch: 50 w=: 1.9932418143935788 cost= 0.0002592816085930997

Epoch: 51 w=: 1.9938725783835114 cost= 0.0002131410058905752

Epoch: 52 w=: 1.994444471067717 cost= 0.00017521137977565514

Epoch: 53 w=: 1.9949629871013967 cost= 0.0001440315413480261

Epoch: 54 w=: 1.9954331083052663 cost= 0.0001184003283899171

Epoch: 55 w=: 1.9958593515301082 cost= 9.733033217332803e-05

Epoch: 56 w=: 1.9962458120539648 cost= 8.000985883901657e-05

Epoch: 57 w=: 1.9965962029289281 cost= 6.57716599593935e-05

Epoch: 58 w=: 1.9969138906555615 cost= 5.406722767150764e-05

Epoch: 59 w=: 1.997201927527709 cost= 4.444566413387458e-05

Epoch: 60 w=: 1.9974630809584561 cost= 3.65363112808981e-05

Epoch: 61 w=: 1.9976998600690001 cost= 3.0034471708953996e-05

Epoch: 62 w=: 1.9979145397958935 cost= 2.4689670610172655e-05

Epoch: 63 w=: 1.9981091827482769 cost= 2.0296006560253656e-05

Epoch: 64 w=: 1.9982856590251044 cost= 1.6684219437262796e-05

Epoch: 65 w=: 1.9984456641827613 cost= 1.3715169898293847e-05

Epoch: 66 w=: 1.9985907355257035 cost= 1.1274479219506377e-05

Epoch: 67 w=: 1.9987222668766378 cost= 9.268123006398985e-06

Epoch: 68 w=: 1.9988415219681517 cost= 7.61880902783969e-06

Epoch: 69 w=: 1.9989496465844576 cost= 6.262999634617916e-06

Epoch: 70 w=: 1.9990476795699081 cost= 5.1484640551938914e-06

Epoch: 71 w=: 1.9991365628100501 cost= 4.232266273994499e-06

Epoch: 72 w=: 1.999217150281112 cost= 3.479110977946351e-06

Epoch: 73 w=: 1.999290216254875 cost= 2.859983851026929e-06

Epoch: 74 w=: 1.9993564627377531 cost= 2.3510338359374262e-06

Epoch: 75 w=: 1.9994165262155628 cost= 1.932654303533636e-06

Epoch: 76 w=: 1.999470983768777 cost= 1.5887277332523938e-06

Epoch: 77 w=: 1.9995203586170245 cost= 1.3060048068548734e-06

Epoch: 78 w=: 1.9995651251461022 cost= 1.0735939958924364e-06

Epoch: 79 w=: 1.9996057134657994 cost= 8.825419799121559e-07

Epoch: 80 w=: 1.9996425135423248 cost= 7.254887315754342e-07

Epoch: 81 w=: 1.999675878945041 cost= 5.963839812987369e-07

Epoch: 82 w=: 1.999706130243504 cost= 4.902541385825727e-07

Epoch: 83 w=: 1.9997335580874436 cost= 4.0301069098738336e-07

Epoch: 84 w=: 1.9997584259992822 cost= 3.312926995781724e-07

Epoch: 85 w=: 1.9997809729060159 cost= 2.723373231729343e-07

Epoch: 86 w=: 1.9998014154347876 cost= 2.2387338352920307e-07

Epoch: 87 w=: 1.9998199499942075 cost= 1.8403387118941732e-07

Epoch: 88 w=: 1.9998367546614149 cost= 1.5128402140063082e-07

Epoch: 89 w=: 1.9998519908930161 cost= 1.2436218932547864e-07

Epoch: 90 w=: 1.9998658050763347 cost= 1.0223124683409346e-07

Epoch: 91 w=: 1.9998783299358769 cost= 8.403862850836479e-08

Epoch: 92 w=: 1.9998896858085284 cost= 6.908348768398496e-08

Epoch: 93 w=: 1.9998999817997325 cost= 5.678969725349543e-08

Epoch: 94 w=: 1.9999093168317574 cost= 4.66836551287917e-08

Epoch: 95 w=: 1.9999177805941268 cost= 3.8376039345125727e-08

Epoch: 96 w=: 1.9999254544053418 cost= 3.154680994333735e-08

Epoch: 97 w=: 1.9999324119941766 cost= 2.593287985380858e-08

Epoch: 98 w=: 1.9999387202080534 cost= 2.131797981222471e-08

Epoch: 99 w=: 1.9999444396553017 cost= 1.752432687141379e-08

Predict (after training) 4 7.999777758621207

Stochastic gradient descent

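Gradient descent can get stuck at a saddle point, where the derivative is 0 and w stops updating. Stochastic gradient descent updates on one sample at a time, and the noise this introduces can keep the iteration moving.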
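To make the difference concrete, the two update rules used in this section can be written side by side. This is a sketch in the notation of the code, with learning rate $\alpha = 0.01$ and $N = 3$ samples; the symbols $\alpha$, $N$ and $loss_n$ are introduced here for illustration.

```latex
% Batch gradient descent: one update per epoch, using the gradient of the mean cost
\frac{\partial\, cost}{\partial w} = \frac{1}{N}\sum_{n=1}^{N} 2\,x_n\,(x_n w - y_n),
\qquad
w \leftarrow w - \alpha\,\frac{\partial\, cost}{\partial w}

% Stochastic gradient descent: one update per sample, using that sample's loss only
\frac{\partial\, loss_n}{\partial w} = 2\,x_n\,(x_n w - y_n),
\qquad
w \leftarrow w - \alpha\,\frac{\partial\, loss_n}{\partial w}
```

The stochastic rule therefore performs N updates in the time the batch rule performs one.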

Code

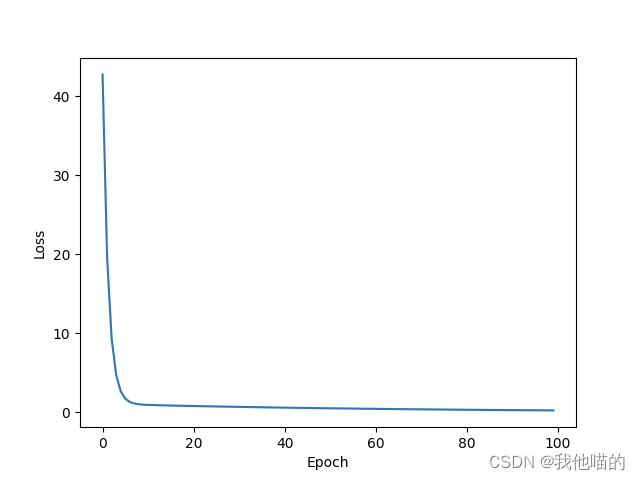

    import matplotlib.pyplot as plt

    x_data = [1.0, 2.0, 3.0]
    y_data = [2.0, 4.0, 6.0]
    w = 1.0  # initial guess

    # Forward pass
    def forward(x):
        return x * w

    # Loss: squared error for a single sample
    def loss(x, y):
        y_pred = forward(x)
        return (y_pred - y) * (y_pred - y)

    # Gradient of the single-sample loss with respect to w
    def gradient(x, y):
        return 2 * x * (forward(x) - y)

    cost_list = []
    epoch_list = []
    print("Predict (before training)", 4, forward(4))

    for epoch in range(100):
        cost_v = 0
        for x, y in zip(x_data, y_data):
            grad = gradient(x, y)
            w -= 0.01 * grad  # one update per sample
            print("\tgrad:", x, y, grad)
            cost_v += loss(x, y)  # loss measured after the update
        cost_list.append(cost_v)
        epoch_list.append(epoch)
        print("Epoch:", epoch, "w=:", w, "cost=", cost_v)

    print("Predict (after training)", 4, forward(4))

    plt.plot(epoch_list, cost_list)
    plt.xlabel("Epoch")
    plt.ylabel("Cost")
    plt.show()

Results

Predict (before training) 4 4.0

grad: 1.0 2.0 -2.0650148258027912e-13

grad: 2.0 4.0 -8.100187187665142e-13

grad: 3.0 6.0 -1.6786572132332367e-12

Epoch: 99 w=: 1.9999999999999236 cost= 9.755053953963032e-26

Predict (after training) 4 7.9999999999996945
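Because the model is linear in w and the data lie exactly on y = 2x, both the batch run and the stochastic run can be reproduced in closed form: every update multiplies the error (w - 2) by a fixed factor. The sketch below computes that factor per epoch for both schemes, assuming the same learning rate of 0.01 and starting point w = 1.0 as in the code above; the function names are illustrative.

```python
x_data = [1.0, 2.0, 3.0]
lr = 0.01

def batch_factor(xs, lr):
    """Per-epoch shrink factor of (w - 2) for batch gradient descent."""
    return 1.0 - lr * 2.0 * sum(x * x for x in xs) / len(xs)

def sgd_factor(xs, lr):
    """Per-epoch shrink factor of (w - 2) when updating once per sample."""
    factor = 1.0
    for x in xs:
        factor *= 1.0 - lr * 2.0 * x * x
    return factor

epochs = 100
w0 = 1.0
w_batch = 2.0 + (w0 - 2.0) * batch_factor(x_data, lr) ** epochs
w_sgd = 2.0 + (w0 - 2.0) * sgd_factor(x_data, lr) ** epochs
print("batch:", batch_factor(x_data, lr), w_batch)
print("sgd:  ", sgd_factor(x_data, lr), w_sgd)
```

The error shrinks by a factor of about 0.907 per epoch with batch updates and about 0.739 per epoch with per-sample updates, which is why the stochastic run ends much closer to w = 2 after the same 100 epochs.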

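Note: with the same number of epochs, stochastic gradient descent ends up with a more accurate result because it performs more updates (one per sample instead of one per epoch).

III. Backpropagation

Lecture

Code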

    import torch

    x_data = [1.0, 2.0, 3.0]
    y_data = [2.0, 4.0, 6.0]

    # The weight is a tensor that tracks gradients
    w = torch.Tensor([1.0])
    w.requires_grad = True

    # The result is a Tensor
    def forward(x):
        return x * w

    # Calling this builds the computational graph
    def loss(x, y):
        y_pred = forward(x)
        return (y_pred - y) ** 2

    print("Predict (before training)", 4, forward(4).item())

    for epoch in range(100):
        for x, y in zip(x_data, y_data):
            # Forward pass: compute the loss
            loss_v = loss(x, y)
            # Backward pass: compute the gradient
            loss_v.backward()
            print("\tgrad:", x, y, w.grad.item())
            # Update the parameter through .data so the update itself is not tracked
            w.data = w.data - 0.01 * w.grad.data
            # Zero the gradient, otherwise it accumulates across backward() calls
            w.grad.data.zero_()
        print("progress:", epoch, loss_v.item())

    print("Predict (after training)", 4, forward(4).item())

Results

Predict (before training) 4 4.0

grad: 1.0 2.0 -7.152557373046875e-07

grad: 2.0 4.0 -2.86102294921875e-06

grad: 3.0 6.0 -5.7220458984375e-06

progress: 99 9.094947017729282e-13

Predict (after training) 4 7.999998569488525

Homework

1.

2.

3. Draw the computational graph of the model below, with loss function loss = (ŷ - y)². Derive the backward pass by hand and implement it in PyTorch.

$$\hat{y} = w_1x^2 + w_2x + b$$
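For the hand derivation, the chain rule through the graph can be sketched as follows, writing $r$ for the residual $\hat{y} - y$ (the symbol $r$ is introduced here for brevity and is not part of the exercise statement).

```latex
\hat{y} = w_1 x^2 + w_2 x + b, \qquad r = \hat{y} - y, \qquad loss = r^2

\frac{\partial\, loss}{\partial \hat{y}} = 2r

\frac{\partial\, loss}{\partial w_1} = 2r \cdot x^2, \qquad
\frac{\partial\, loss}{\partial w_2} = 2r \cdot x, \qquad
\frac{\partial\, loss}{\partial b} = 2r
```

For a sample with x = 2, the three gradients are therefore always in the ratio 4 : 2 : 1, and for x = 1 they are equal.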

Code

    import torch

    # The data follow y = 2x, but the model below is quadratic
    x_data = [1.0, 2.0, 3.0]
    y_data = [2.0, 4.0, 6.0]

    w1 = torch.Tensor([1.0])
    w2 = torch.Tensor([1.0])
    b = torch.Tensor([1.0])
    w1.requires_grad = True
    w2.requires_grad = True
    b.requires_grad = True

    # The result is a Tensor
    def forward(x):
        return w1 * x * x + w2 * x + b

    # Calling this builds the computational graph
    def loss(x, y):
        y_pred = forward(x)
        return (y_pred - y) ** 2

    print("Predict (before training)", 4, forward(4).item())

    for epoch in range(100):
        for x, y in zip(x_data, y_data):
            # Forward pass: compute the loss
            loss_v = loss(x, y)
            # Backward pass: compute the gradients
            loss_v.backward()
            print("\tgrad:", x, y, w1.grad.item(), w2.grad.item(), b.grad.item())
            # Update the parameters
            w1.data = w1.data - 0.01 * w1.grad.data
            w2.data = w2.data - 0.01 * w2.grad.data
            b.data = b.data - 0.01 * b.grad.data
            # Zero the gradients, otherwise they accumulate
            w1.grad.data.zero_()
            w2.grad.data.zero_()
            b.grad.data.zero_()
        print("progress:", epoch, loss_v.item())

    print("Predict (after training)", 4, forward(4).item())
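The gradients that `backward()` produces for this model can be cross-checked without PyTorch. The sketch below, in plain Python, evaluates the hand-derived gradients `2*r*x*x`, `2*r*x` and `2*r` (with `r = y_pred - y`) at the initial parameters w1 = w2 = b = 1 and compares them with central finite differences. The helper names and the step size `h` are choices made here, not part of the original code.

```python
def forward(x, w1, w2, b):
    """Quadratic model y_pred = w1*x^2 + w2*x + b."""
    return w1 * x * x + w2 * x + b

def loss(x, y, w1, w2, b):
    """Squared error for a single sample."""
    return (forward(x, w1, w2, b) - y) ** 2

def analytic_grad(x, y, w1, w2, b):
    """Hand-derived gradients of the loss with respect to (w1, w2, b)."""
    r = forward(x, w1, w2, b) - y
    return (2 * r * x * x, 2 * r * x, 2 * r)

def numeric_grad(x, y, params, h=1e-6):
    """Central finite differences, perturbing one parameter at a time."""
    grads = []
    for i in range(len(params)):
        up = list(params)
        up[i] += h
        down = list(params)
        down[i] -= h
        grads.append((loss(x, y, *up) - loss(x, y, *down)) / (2 * h))
    return tuple(grads)

params = (1.0, 1.0, 1.0)
for x, y in zip([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]):
    print(x, y, analytic_grad(x, y, *params), numeric_grad(x, y, params))
```

The two columns should agree to several decimal places; a mismatch would point to an error in the hand derivation rather than in autograd.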

Results

After 100 epochs of training:

grad: 1.0 2.0 0.31661415100097656 0.31661415100097656 0.31661415100097656

grad: 2.0 4.0 -1.7297439575195312 -0.8648719787597656 -0.4324359893798828

grad: 3.0 6.0 1.4307546615600586 0.47691822052001953 0.15897274017333984

Epoch: 99 0.00631808303296566

Predict(after training) 4 8.544171333312988

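Note: when data generated by a linear function are fitted with a quadratic model, training still converges, but it needs more iterations, or a different learning rate, to converge quickly.

IV. Linear Regression with PyTorch

Lecture

Code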

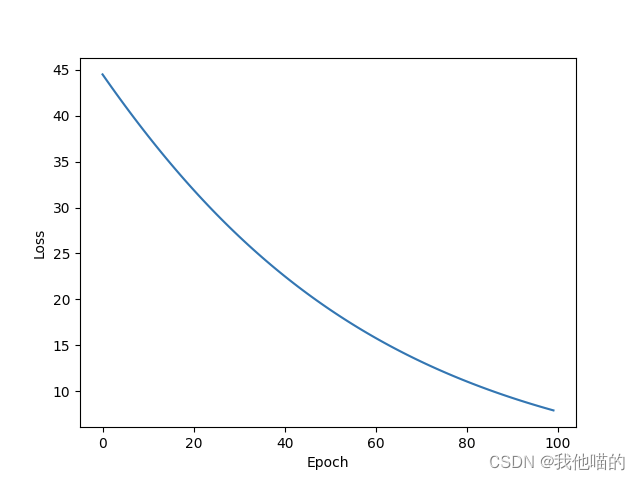

    import torch
    import matplotlib.pyplot as plt

    x_data = torch.Tensor([[1.0], [2.0], [3.0]])
    y_data = torch.Tensor([[2.0], [4.0], [6.0]])

    class LinearModel(torch.nn.Module):
        # Constructor
        def __init__(self):
            super(LinearModel, self).__init__()
            # Linear(in_features, out_features); the third argument, bias, defaults to True
            self.linear = torch.nn.Linear(1, 1)

        def forward(self, x):
            # Apply the linear layer, i.e. compute wx + b
            y_pred = self.linear(x)
            return y_pred

    # Instantiate the model; calling model(x) runs forward(x)
    model = LinearModel()
    # MSE loss; older versions chose mean or sum with size_average, newer ones use reduction
    criterion = torch.nn.MSELoss(reduction='sum')
    # model.parameters() collects the parameters to optimize; lr sets the learning rate
    optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

    epoch_list = []
    loss_list = []
    for epoch in range(100):
        # Forward pass
        y_pred = model(x_data)
        loss = criterion(y_pred, y_data)
        print(epoch, loss.item())
        # Backward pass
        optimizer.zero_grad()
        loss.backward()
        # Update
        optimizer.step()
        epoch_list.append(epoch)
        loss_list.append(loss.item())

    # Print the weight and bias
    print("w=", model.linear.weight.item())
    print("b=", model.linear.bias.item())

    # Test the result
    x_test = torch.Tensor([4.0])
    y_test = model(x_test)
    print("y_pred=", y_test.data)

    plt.plot(epoch_list, loss_list)
    plt.xlabel("Epoch")
    plt.ylabel("Loss")
    plt.show()

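Results

The trained result varies from run to run (the layer's weights are initialized randomly), so the output is not listed here. About 1000 epochs gives a fairly accurate result.

Homework

Compare the effect of different optimizers on performance.

Results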

1. torch.optim.Adagrad

Still far from converged after 100 epochs.

2. torch.optim.Ada

3. torch.optim.Adamax

4. torch.optim.ASGD

5. torch.optim.RMSprop

6. torch.optim.Rprop
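One way to see why Adagrad is still far from converged after 100 epochs at lr = 0.01: its step is the gradient divided by the root of the accumulated squared gradients, so no single step can be larger than lr. The sketch below is a simplified, plain-Python version of the SGD and Adagrad update rules on the bias-free model `y_pred = x * w` with a summed squared-error loss. The starting value w = 0.0, the missing bias term and the function names are assumptions made for illustration, and PyTorch's optimizers have additional options that are omitted here.

```python
import math

x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

def grad(w):
    """Gradient of sum((x*w - y)^2) with respect to w."""
    return sum(2.0 * x * (x * w - y) for x, y in zip(x_data, y_data))

def run_sgd(w, lr=0.01, epochs=100):
    """Plain gradient step: w <- w - lr * g."""
    for _ in range(epochs):
        w -= lr * grad(w)
    return w

def run_adagrad(w, lr=0.01, epochs=100, eps=1e-10):
    """Adagrad step: w <- w - lr * g / (sqrt(sum of g^2 so far) + eps)."""
    g_sq_sum = 0.0
    for _ in range(epochs):
        g = grad(w)
        g_sq_sum += g * g
        w -= lr * g / (math.sqrt(g_sq_sum) + eps)
    return w

w_start = 0.0  # assumed starting point; nn.Linear initializes randomly
print("SGD:    ", run_sgd(w_start))
print("Adagrad:", run_adagrad(w_start))
```

With 100 steps of at most 0.01 each, Adagrad cannot move w by more than 1.0 in total, so a start far from w = 2 needs either more epochs or a larger learning rate.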

V. Logistic Regression

Lecture

Despite its name, logistic regression is a classification method, used mainly for binary classification. It differs from linear regression as follows:

$$\hat{y}_{\text{linear}} = wx + b$$

$$\hat{y}_{\text{logistic}} = \mathrm{sigmoid}(wx + b)$$

where

$$\mathrm{sigmoid}(x) = \frac{1}{1 + e^{-x}}$$

This maps the raw output to a probability in the range 0 to 1. The loss function changes accordingly from MSE to BCE (binary cross-entropy):

$$loss = -\left(y\log\hat{y} + (1 - y)\log(1 - \hat{y})\right)$$

Code

    import torch
    import numpy as np
    import matplotlib.pyplot as plt

    x_data = torch.Tensor([[1.0], [2.0], [3.0]])
    y_data = torch.Tensor([[0], [0], [1]])

    class LinearModel(torch.nn.Module):
        # Constructor
        def __init__(self):
            super(LinearModel, self).__init__()
            # Linear(in_features, out_features); the third argument, bias, defaults to True
            self.linear = torch.nn.Linear(1, 1)

        def forward(self, x):
            # Apply sigmoid to the linear output (torch.sigmoid replaces the deprecated functional.sigmoid)
            y_pred = torch.sigmoid(self.linear(x))
            return y_pred

    # Instantiate the model; calling model(x) runs forward(x)
    model = LinearModel()
    # BCE loss; older versions chose mean or sum with size_average, newer ones use reduction
    criterion = torch.nn.BCELoss(reduction='sum')
    # model.parameters() collects the parameters to optimize; lr sets the learning rate
    optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

    for epoch in range(1000):
        # Forward pass
        y_pred = model(x_data)
        loss = criterion(y_pred, y_data)
        print(epoch, loss.item())
        # Backward pass
        optimizer.zero_grad()
        loss.backward()
        # Update
        optimizer.step()

    # Sample 200 points in the range 0 to 10
    x = np.linspace(0, 10, 200)
    # view() acts like reshape: turn the points into a (200, 1) column
    x_t = torch.Tensor(x).view((200, 1))
    y_t = model(x_t)
    y = y_t.data.numpy()
    plt.plot(x, y)
    # Draw the decision threshold at probability 0.5
    plt.plot([0, 10], [0.5, 0.5], c='r')
    plt.xlabel("Hours")
    plt.ylabel("Probability of Pass")
    plt.grid()
    plt.show()

Results

The final loss varies between runs; after 1000 epochs it is roughly 1.0.
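As a small worked example of the BCE loss used in this section, the sketch below evaluates the sigmoid and the per-sample loss in plain Python. The function names and the sample values are chosen here for illustration and do not come from the training run.

```python
import math

def sigmoid(z):
    """Logistic function 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))

def bce(y, y_hat):
    """Binary cross-entropy for one sample with label y in {0, 1}."""
    return -(y * math.log(y_hat) + (1 - y) * math.log(1 - y_hat))

# Label, predicted probability, and the resulting loss
for y, y_hat in [(1, 0.8), (1, 0.2), (0, 0.2), (0, 0.8)]:
    print(y, y_hat, round(bce(y, y_hat), 4))

# A raw output of 0 sits exactly on the 0.5 decision threshold
print(sigmoid(0.0))
```

A confident correct prediction (probability 0.8 for label 1) costs about 0.22, while the same confidence on the wrong label costs about 1.61, so BCE penalizes confident mistakes much more heavily.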

VI. Handling Multi-Dimensional Feature Inputs

Lecture

Code

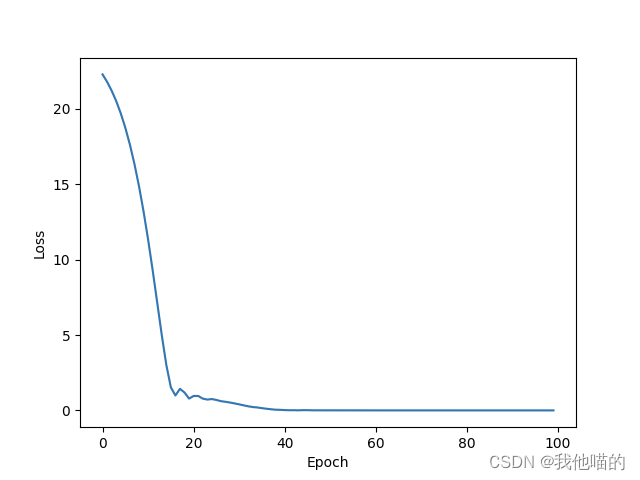

    import torch
    import numpy as np
    import matplotlib.pyplot as plt

    # Each CSV row holds 8 features followed by the label in the last column
    test = np.loadtxt('test.csv', delimiter=',', dtype=np.float32)
    train = np.loadtxt('train.csv', delimiter=',', dtype=np.float32)
    x_data = torch.from_numpy(train[:, :-1])
    y_data = torch.from_numpy(train[:, [-1]])
    x_test = torch.from_numpy(test[:, :-1])
    y_test = torch.from_numpy(test[:, [-1]])

    class Model(torch.nn.Module):
        # Constructor
        def __init__(self):
            super(Model, self).__init__()
            # Linear(in_features, out_features); the third argument, bias, defaults to True
            self.linear1 = torch.nn.Linear(8, 6)
            self.linear2 = torch.nn.Linear(6, 4)
            self.linear3 = torch.nn.Linear(4, 1)
            self.active = torch.nn.Sigmoid()

        def forward(self, x):
            # Apply sigmoid after every linear layer
            x = self.active(self.linear1(x))
            x = self.active(self.linear2(x))
            x = self.active(self.linear3(x))
            return x

    loss_list = []
    epoch_list = []
    # Instantiate the model; calling model(x) runs forward(x)
    model = Model()
    # BCE loss; older versions chose mean or sum with size_average, newer ones use reduction
    criterion = torch.nn.BCELoss(reduction='sum')
    # model.parameters() collects the parameters to optimize; lr sets the learning rate
    optimizer = torch.optim.Adam(model.parameters(), lr=0.01)

    for epoch in range(10000):
        # Forward pass
        y_pred = model(x_data)
        loss = criterion(y_pred, y_data)
        epoch_list.append(epoch)
        loss_list.append(loss.item())
        # Backward pass
        optimizer.zero_grad()
        loss.backward()
        # Update
        optimizer.step()

    # Training accuracy from the last forward pass: threshold the probabilities at 0.5
    y_pred_label = torch.where(y_pred >= 0.5, torch.tensor([1.0]), torch.tensor([0.0]))
    acc = torch.eq(y_pred_label, y_data).sum().item() / y_data.size(0)
    print(loss.data, acc)

    # Test accuracy
    y_res = model(x_test)
    y_pred_label = torch.where(y_res >= 0.5, torch.tensor([1.0]), torch.tensor([0.0]))
    acc = torch.eq(y_pred_label, y_test).sum().item() / y_test.size(0)
    print(acc)

    plt.plot(epoch_list, loss_list)
    plt.xlabel("Epoch")
    plt.ylabel("Loss")
    plt.show()

Results

tensor(118.9754) 0.9096045197740112

0.7533039647577092

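Note: there appear to be ways to reduce the loss further. In experiments the training accuracy approached 100%, but performance on the test set stayed mediocre, which points to overfitting.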


- Original article: https://blog.csdn.net/WTMDNM_/article/details/133839561